Gordon Wetzstein

Most Recent Affiliation(s):

- Stanford University, Bauhaus-University Weimar, MIT Media Lab, Research Scientist

Other / Past Affiliation(s):

- MIT Media Lab

Bio:

SIGGRAPH 2020

Gordon Wetzstein is an Assistant Professor of Electrical Engineering and, by courtesy, of Computer Science at Stanford University. His research has widespread applications in next-generation imaging, display, wearable computing, and microscopy systems. Prior to joining Stanford, he was a Research Scientist in the Camera Culture Group at MIT. He received a Ph.D. in Computer Science from UBC. He is the recipient of an NSF CAREER Award, an Alfred P. Sloan Fellowship, an ACM SIGGRAPH Significant New Researcher Award, a Presidential Early Career Award for Scientists and Engineers, an SPIE Early Career Achievement Award, and several other awards.

SIGGRAPH 2014

Gordon is a Research Scientist in the Camera Culture Group at the MIT Media Lab. His research focuses on computational imaging and display systems as well as computational light transport. His research has been funded by DARPA, NSF, Samsung, and other grants from industry sponsors and research councils. In 2006, Gordon graduated with Honors from the Bauhaus in Weimar, Germany, and he received a Ph.D. in Computer Science from the University of British Columbia in 2011. His doctoral dissertation focuses on computational light modulation for image acquisition and display and won the Alain Fournier Ph.D. Dissertation Annual Award. He organized the IEEE 2012 and 2013 International Workshops on Computational Cameras and Displays, founded displayblocks.org as a forum for sharing computational display design instructions with the DIY community, and presented a number of courses on Computational Displays and Computational Photography at ACM SIGGRAPH. Gordon won the best paper award for “Hand-Held Schlieren Photography with Light Field Probes” at ICCP 2011 and a Laval Virtual Award in 2005.

SIGGRAPH 2012

Gordon Wetzstein is a Postdoctoral Associate at the MIT Media Lab. His research interests include light field and high dynamic range displays, projector-camera systems, computational optics, computational photography, computer vision, computer graphics, and augmented reality. Gordon received a Diploma in Media System Science with Honors from the Bauhaus-University Weimar in 2006 and a Ph.D. in Computer Science from the University of British Columbia in 2011. His doctoral dissertation focuses on computational light modulation for image acquisition and display. He is co-chairing the first workshop on Computational Cameras and Displays at CVPR 2012, is serving on the general submissions committee at SIGGRAPH 2012, has served on the program committees of IEEE ProCams 2007 and IEEE ISMAR 2010, won a Laval Virtual Award in 2005 for his work on projector-camera systems, and won a best paper award for “Hand-Held Schlieren Photography with Light Field Probes” at the International Conference on Computational Photography in 2011, introducing light field probes as computational displays for computer vision and fluid mechanics applications.

Learning Category: Organizing Committee Chair:

Course Organizer:

- SIGGRAPH 2012, "Computational Displays"

- SIGGRAPH 2017, "Build Your Own VR Display: An Introduction to VR Display Systems for Hobbyists and Educators"

Experience Category: Jury Member:

Learning Category: Jury Member:

Award(s):

Experience(s):

Type: [E-Tech]

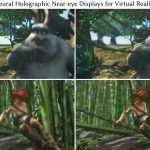

Neural Holographic Near-eye Displays for Virtual Reality

Organizer(s): [Choi] [Gopakumar] [Chao] [Lee] [Kim] [Wetzstein]

Entry No.: [12]

[SIGGRAPH 2023]

Type: [E-Tech]

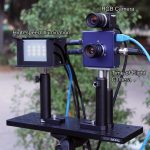

Autofocals: Gaze-Contingent Eyeglasses for Presbyopes

Organizer(s): [Padmanaban] [Konrad] [Wetzstein]

Entry No.: [03]

[SIGGRAPH 2018]

Type: [E-Tech]

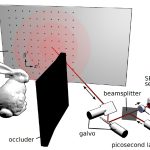

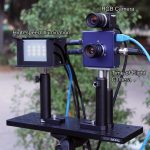

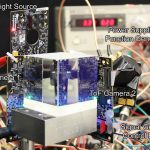

Real-time Non-line-of-sight Imaging

Organizer(s): [O’Toole] [Lindell] [Wetzstein]

Entry No.: [15]

[SIGGRAPH 2018]

Type: [E-Tech]

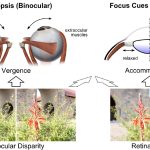

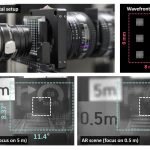

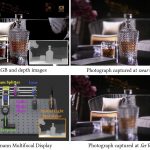

Computational Focus-Tunable Near-eye Displays

Organizer(s): [Konrad] [Padmanaban] [Cooper] [Wetzstein]

Entry No.: [03]

[SIGGRAPH 2016]

Type: [E-Tech]

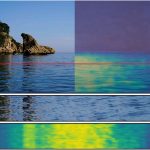

Doppler Time-of-Flight Imaging

Organizer(s): [Heide] [Wetzstein] [Hullin] [Heidrich]

Entry No.: [09]

[SIGGRAPH 2015]

Type: [E-Tech]

The Light Field Stereoscope

Organizer(s): [Huang] [Luebke] [Wetzstein]

Entry No.: [24]

[SIGGRAPH 2015]

Type: [E-Tech]

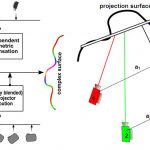

A Compressive Light Field Projection System

Organizer(s): [Hirsch] [Wetzstein] [Raskar]

Entry No.: [02]

[SIGGRAPH 2014]

Learning Category: Presentation(s):

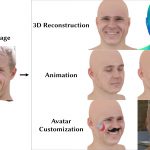

Type: [Technical Papers]

Single-Shot Implicit Morphable Faces with Consistent Texture Parameterization

Presenter(s): [Lin] [Nagano] [Kautz] [Chan] [Guibas] [Wetzstein] [Khamis]

[SIGGRAPH 2023]

Type: [Technical Papers]

Towards Attention-Aware Foveated Rendering

Presenter(s): [Krajancich] [Kellnhofer] [Wetzstein]

[SIGGRAPH 2023]

Type: [Technical Papers]

A perceptual model for eccentricity-dependent spatio-temporal flicker fusion and its applications to foveated graphics

Presenter(s): [Krajancich] [Kellnhofer] [Wetzstein]

[SIGGRAPH 2021]

Type: [Technical Papers]

Acorn: adaptive coordinate networks for neural scene representation

Presenter(s): [Martel] [Lindell] [Lin] [Chan] [Monteiro] [Wetzstein]

[SIGGRAPH 2021]

Type: [Courses]

Advances in Neural Rendering

Organizer(s): [Tewari]

Presenter(s): [Sitzmann] [Fried] [Thies] [Xu] [Tretschk] [Mildenhall] [Pandey] [Orts-Escolano] [Fanello] [Guo] [Wetzstein] [Zhu] [Theobalt] [Agrawala] [Zollhöfer]

Entry No.: [01]

[SIGGRAPH 2021]

Type: [Courses]

Deep Optics: Joint Design of Optics and Image Recovery Algorithms for Domain Specific Cameras

Organizer(s): [Peng]

Presenter(s): [Veeraraghavan] [Heidrich] [Wetzstein]

Entry No.: [18]

[SIGGRAPH 2020]

Type: [Technical Papers]

Gaze-Contingent Ocular Parallax Rendering for Virtual Reality

Presenter(s): [Konrad] [Angelopoulos] [Wetzstein]

[SIGGRAPH 2020]

Type: [Talks (Sketches)]

Autofocals: evaluating gaze-contingent eyeglasses for presbyopes

Presenter(s): [Padmanaban] [Konrad] [Wetzstein]

Entry No.: [55]

[SIGGRAPH 2019]

Type: [Talks (Sketches)]

Gaze-Contingent Ocular Parallax Rendering for Virtual Reality

Presenter(s): [Konrad] [Angelopoulos] [Wetzstein]

Entry No.: [56]

[SIGGRAPH 2019]

Type: [Technical Papers]

Non-Line-of-Sight Imaging With Partial Occluders and Surface Normals

Presenter(s): [Heide] [O'Toole] [Zang] [Lindell] [Diamond] [Wetzstein]

[SIGGRAPH 2019]

Type: [Technical Papers]

Wave-based non-line-of-sight imaging using fast f-k migration

Presenter(s): [Lindell] [Wetzstein] [O’Toole]

[SIGGRAPH 2019]

Type: [Talks (Sketches)]

Confocal Non-Line-of-Sight Imaging

Presenter(s): [O'Toole] [Lindell] [Wetzstein]

Entry No.: [01]

[SIGGRAPH 2018]

Type: [Technical Papers]

End-to-end optimization of optics and image processing for achromatic extended depth of field and super-resolution imaging

Presenter(s): [Sitzmann] [Diamond] [Peng] [Dun] [Boyd] [Heidrich] [Heide] [Wetzstein]

Entry No.: [114]

[SIGGRAPH 2018]

Type: [Technical Papers]

Single-photon 3D imaging with deep sensor fusion

Presenter(s): [Lindell] [O’Toole] [Wetzstein]

Entry No.: [113]

[SIGGRAPH 2018]

Type: [Technical Papers]

Accommodation-invariant computational near-eye displays

Presenter(s): [Konrad] [Padmanaban] [Molner] [Cooper] [Wetzstein]

[SIGGRAPH 2017]

Type: [Courses]

Applications of Visual Perception to Virtual Reality Rendering

Organizer(s): [Patney]

Presenter(s): [Kim] [Zannoli] [Koulieris] [Wetzstein] [Steinicke]

Entry No.: [01]

[SIGGRAPH 2017]

Type: [Technical Papers]

Movie editing and cognitive event segmentation in virtual reality video

Presenter(s): [Serrano] [Sitzmann] [Ruiz-Borau] [Wetzstein] [Gutierrez] [Masia]

[SIGGRAPH 2017]

Type: [Talks (Sketches)]

Optimizing VR for all users through adaptive focus displays

Presenter(s): [Padmanaban] [Konrad] [Cooper] [Wetzstein]

Entry No.: [77]

[SIGGRAPH 2017]

Type: [Technical Papers]

Computational imaging with multi-camera time-of-flight systems

Presenter(s): [Shrestha] [Heide] [Heidrich] [Wetzstein]

[SIGGRAPH 2016]

Type: [Technical Papers]

ProxImaL: efficient image optimization using proximal algorithms

Presenter(s): [Heide] [Diamond] [Nießner] [Ragan-Kelley] [Heidrich] [Wetzstein]

[SIGGRAPH 2016]

Type: [Technical Papers]

Doppler time-of-flight imaging

Presenter(s): [Heide] [Heidrich] [Wetzstein] [Hullin]

[SIGGRAPH 2015]

Type: [Technical Papers]

The light field stereoscope: immersive computer graphics via factored near-eye light field displays with focus cues

Presenter(s): [Huang] [Chen] [Wetzstein]

[SIGGRAPH 2015]

Type: [Technical Papers]

A compressive light field projection system

Presenter(s): [Hirsch] [Wetzstein] [Raskar]

[SIGGRAPH 2014]

Type: [Courses]

Computational Cameras and Displays

Organizer(s): [O’Toole]

Presenter(s): [O’Toole] [Wetzstein]

Entry No.: [09]

[SIGGRAPH 2014]

Type: [Technical Papers]

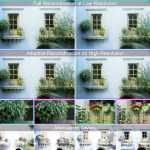

Eyeglasses-free display: towards correcting visual aberrations with computational light field displays

Presenter(s): [Huang] [Wetzstein] [Barsky] [Raskar]

[SIGGRAPH 2014]

Type: [Technical Papers]

Focus 3D: Compressive Accommodation Display

Presenter(s): [Maimone] [Wetzstein] [Hirsch] [Lanman] [Raskar] [Fuchs]

[SIGGRAPH 2014]

Type: [Technical Papers]

Adaptive image synthesis for compressive displays

Presenter(s): [Heide] [Wetzstein] [Raskar] [Heidrich]

[SIGGRAPH 2013]

Type: [Technical Papers]

Compressive light field photography using overcomplete dictionaries and optimized projections

Presenter(s): [Marwah] [Wetzstein] [Bando] [Raskar]

[SIGGRAPH 2013]

Type: [Posters]

Computational Light Field Display for Correcting Visual Aberrations

Presenter(s): [Huang] [Wetzstein] [Barsky] [Raskar]

Entry No.: [29]

[SIGGRAPH 2013]

Type: [Posters]

Compressive light field photography

Presenter(s): [Marwah] [Wetzstein] [Veeraraghavan] [Raskar]

[SIGGRAPH 2012]

Type: [Talks (Sketches)]

Compressive light field photography

Presenter(s): [Marwah] [Wetzstein] [Veeraraghavan] [Raskar]

[SIGGRAPH 2012]

Type: [Posters]

Computational cellphone microscopy

Presenter(s): [Arpa] [Wetzstein] [Lanman] [Raskar]

[SIGGRAPH 2012]

Type: [Posters]

Computational retinal imaging via binocular coupling and indirect illumination

Presenter(s): [Lawson] [Boggess] [Khullar] [Olwal] [Wetzstein] [Raskar]

[SIGGRAPH 2012]

Type: [Talks (Sketches)]

Computational retinal imaging via binocular coupling and indirect illumination

Presenter(s): [Lawson] [Boggess] [Khullar] [Olwal] [Wetzstein] [Raskar]

[SIGGRAPH 2012]

Type: [Posters]

Perceptually-optimized content remapping for automultiscopic displays

Presenter(s): [Masia] [Wetzstein] [Aliaga] [Raskar] [Gutierrez]

[SIGGRAPH 2012]

Type: [Technical Papers]

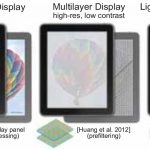

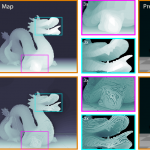

Tensor displays: compressive light field synthesis using multilayer displays with directional backlighting

Presenter(s): [Wetzstein] [Lanman] [Hirsch] [Raskar]

[SIGGRAPH 2012]

Type: [Technical Papers]

Layered 3D: tomographic image synthesis for attenuation-based light field and high dynamic range displays

Presenter(s): [Wetzstein] [Lanman] [Heidrich] [Raskar]

[SIGGRAPH 2011]

Type: [Technical Papers]

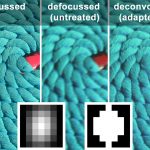

Diffusion Coded Photography for Extended Depth of Field

Presenter(s): [Grosse] [Wetzstein] [Grundhöfer] [Bimber]

[SIGGRAPH 2010]

Type: [Talks (Sketches)]

Adaptive coded aperture projection

Presenter(s): [Grosse] [Wetzstein] [Bimber] [Grundhöfer]

[SIGGRAPH 2009]

Type: [Posters]

HECTOR - scripting-based VR system design

Presenter(s): [Wetzstein] [Gollner] [Beck] [Weiszig] [Derkau] [Springer] [Fröhlich]

[SIGGRAPH 2007]

Type: [Posters]

Radiometric compensation of global illumination effects with projector-camera systems

Presenter(s): [Wetzstein] [Bimber]

[SIGGRAPH 2006]

Learning Category: Moderator:

Type: [Technical Papers]

Étendue Expansion in Holographic Near Eye Displays Through Sparse Eye-box Generation Using Lens Array Eyepiece

Presenter(s): [Chae] [Bang] [Yoo] [Jeong]

[SIGGRAPH 2023]

Type: [Technical Papers]

OpenMPD: A Low-level Presentation Engine for Multimodal Particle-based Displays

Presenter(s): [Montano-Murillo] [Hirayama] [Plasencia]

[SIGGRAPH 2023]

Type: [Technical Papers]

Perceptual Visibility Model for Temporal Contrast Changes in Periphery

Presenter(s): [Tursun] [Didyk]

[SIGGRAPH 2023]

Type: [Technical Papers]

Perspective-correct VR Passthrough Without Reprojection

Presenter(s): [Kuo] [Penner] [Moczydlowski] [Ching] [Lanman] [Matsuda]

[SIGGRAPH 2023]

Type: [Technical Papers]

Split-Lohmann Multifocal Displays

Presenter(s): [Qin] [Chen] [O'Toole] [Sankaranarayanan]

[SIGGRAPH 2023]

Type: [Technical Papers]

The Statistics of Eye Movements and Binocular Disparities in VR Gaming Headsets Should Drive Headset Design

Presenter(s): [Aizenman] [Koulieris] [Gibaldi] [Sehgal] [Levi] [Banks]

[SIGGRAPH 2023]

Type: [Technical Papers]

3DTV at home: eulerian-lagrangian stereo-to-multiview conversion

Presenter(s): [Kellnhofer] [Didyk] [Wang] [Sitthi-amorn] [Freeman] [Durand] [Matusik]

[SIGGRAPH 2017]

Type: [Technical Papers]

Hiding of phase-based stereo disparity for ghost-free viewing without glasses

Presenter(s): [Fukiage] [Kawabe] [Nishida]

[SIGGRAPH 2017]

Type: [Technical Papers]

Low-cost 360 stereo photography and video capture

Presenter(s): [Matzen] [Cohen] [Evans] [Kopf] [Szeliski]

[SIGGRAPH 2017]

Type: [Technical Papers]

Mixed-primary factorization for dual-frame computational displays

Presenter(s): [Huang] [Pająk] [Kim] [Kautz] [Luebke]

[SIGGRAPH 2017]

Type: [Technical Papers]

Compressive epsilon photography for post-capture control in digital imaging

Presenter(s): [Ito] [Tambe] [Mitra] [Sankaranarayanan] [Veeraraghavan]

[SIGGRAPH 2014]

Type: [Technical Papers]

Learning to be a depth camera for close-range human capture and interaction

Presenter(s): [Fanello] [Keskin] [Izadi] [Kohli] [Shotton] [Criminisi] [Kim] [Sweeney] [Kang]

[SIGGRAPH 2014]

Type: [Technical Papers]

Pinlight displays: wide field of view augmented reality eyeglasses using defocused point light sources

Presenter(s): [Maimone] [Lanman] [Rathinavel] [Keller] [Luebke] [Fuchs]

[SIGGRAPH 2014]

Role(s):

- Awardee

- Course Organizer

- Course Presenter

- Courses Organizing Committee Chair/Co-Chair

- Emerging Technologies Presenter

- Poster Presenter

- Talk (Sketch) Presenter

- Technical Paper Moderator

- Technical Paper Presenter

- Technical Paper Session Moderator

- Technical Papers Jury Member

- Unified Jury Member