Christian Theobalt

About Christian Theobalt

Affiliations (Current and Past)

- Max Planck Institute for Informatics, Saarland Informatics Campus, Professor of Computer Science

Location

- Saarbrücken, Germany

Bio

SIGGRAPH 2015

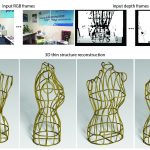

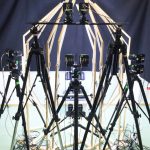

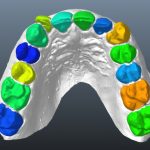

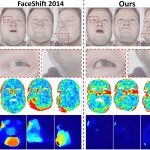

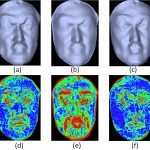

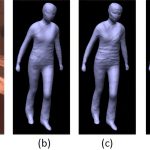

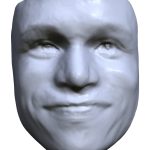

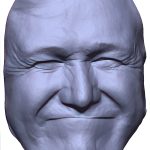

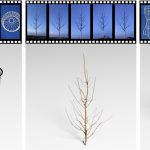

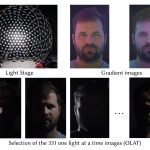

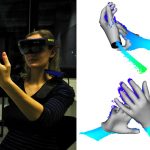

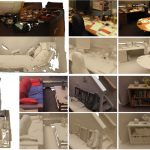

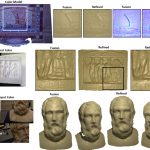

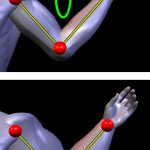

Christian Theobalt is a Professor of Computer Science at the Max Planck Institute for Informatics and Saarland University in Saarbrücken, Germany. Most of his research deals with algorithmic problems on the boundary between Computer Vision and Computer Graphics, such as dynamic 3D scene reconstruction and marker-less motion capture, computer animation, appearance and reflectance modelling, machine learning for graphics and vision, new sensors for 3D acquisition, advanced video processing, and image- and physically-based rendering. He received the Otto Hahn Medal of the Max Planck Society in 2007, the EUROGRAPHICS Young Researcher Award in 2009, the German Pattern Recognition Award in 2012, and an ERC Starting Grant in 2013.

Awards and Recognition

- SIGGRAPH 2016 Emerging Technology Best in Show Award

SIGGRAPH Conference Organizing Committee Positions

Jury Member

- SIGGRAPH 2020: Technical Papers

Conference Contributions

Experiences

Emerging Technologies

Labs-Studio

Learning

Courses

Posters

Talks-Sketches

Technical Papers

Presenter(s):

- Abhimitra Meka

- Rohit Pandey

- Christian Häne

- Sergio Orts-Escolano

- Peter C. Barnum

- Daniel Erickson

- Yinda Zhang

- Jonathan Taylor

- Sofien Bouaziz

- Chloe LeGendre

- Wan-Chun Alex Ma

- Ryan S. Overbeck

- Thabo Beeler

- Paul E. Debevec

- Shahram Izadi

- Christian Theobalt

- Christoph Rhemann

- Sean Ryan Fanello

- Philip Davidson

Presenter(s):

- Abhimitra Meka

- Christian Häne

- Rohit Pandey

- Michael Zollhöfer

- Sean Ryan Fanello

- Graham Fyffe

- Adarsh Kowdle

- Xueming Yu

- Jay Busch

- Jason Dourgarian

- Peter Denny

- Sofien Bouaziz

- Peter Lincoln

- Matt Whalen

- Geoff Harvey

- Jonathan Taylor

- Shahram Izadi

- Andrea Tagliasacchi

- Paul E. Debevec

- Christian Theobalt

- Julien P. C. Valentin

- Christoph Rhemann

Other Information

Roles

- Awardee

- Course Presenter

- Emerging Technologies Presenter

- Poster Presenter

- Studio (SIGGRAPH Lab) Presenter

- Talk (Sketch) Presenter

- Technical Paper Presenter

- Technical Papers Jury Member

- Technical Papers Organizing Committee Member
