“Mapping Core Similarity Among Visual Objects Across Image Modalities” by Fan, Yamins, DiCarlo and Turk-Browne

Entry Number: 60

Abstract:

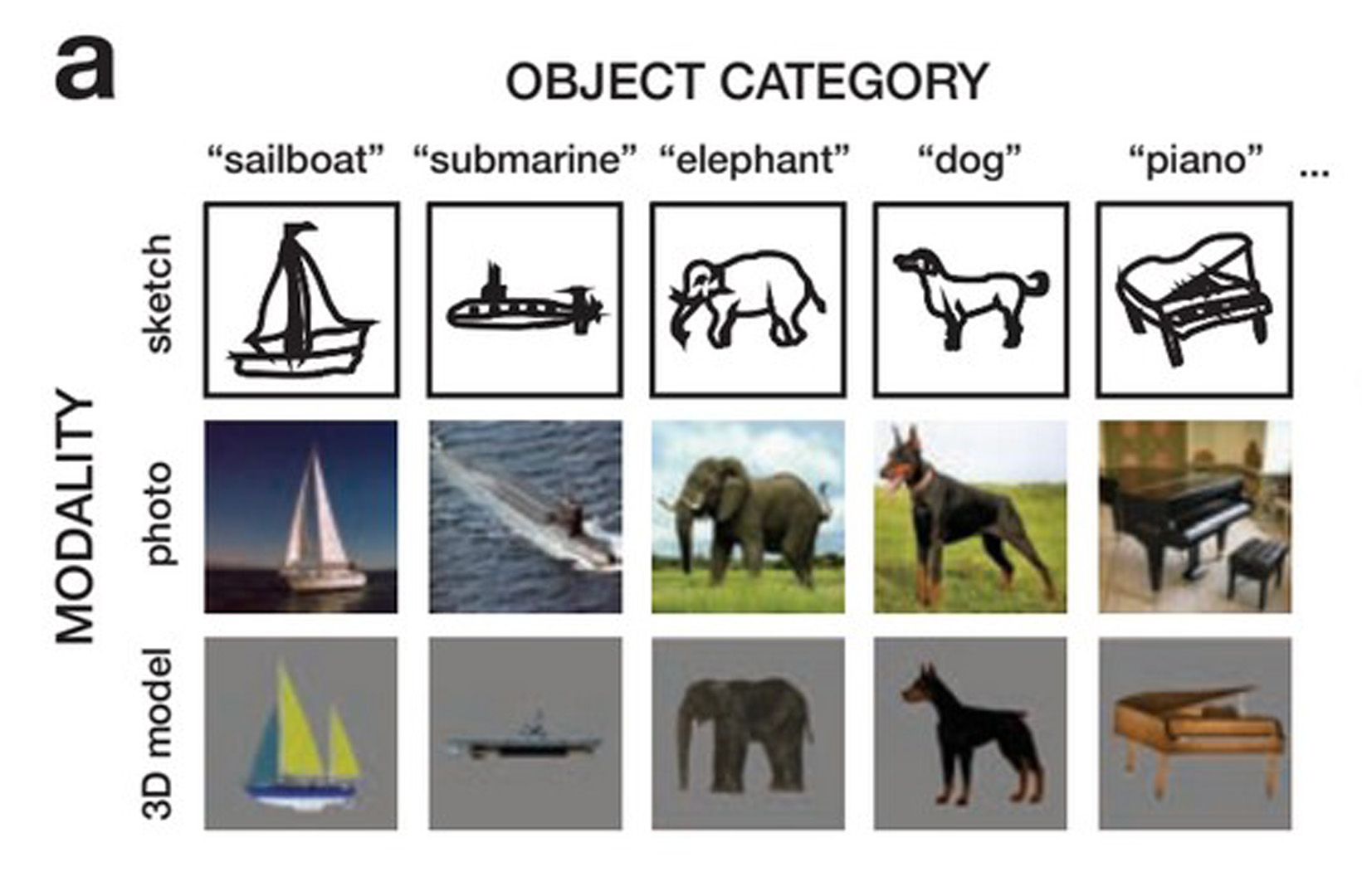

Humans have devised a wide range of technologies for creating visual representations of real-world objects. Some are ancient (e.g., line drawings made with a stylus), while others are very modern (e.g., photography and 3D computer graphics rendering). Despite large differences in the images these modalities produce (e.g., sparse contours in sketches vs. continuous hue variation in photographs), all are effective at evoking the original real-world object.

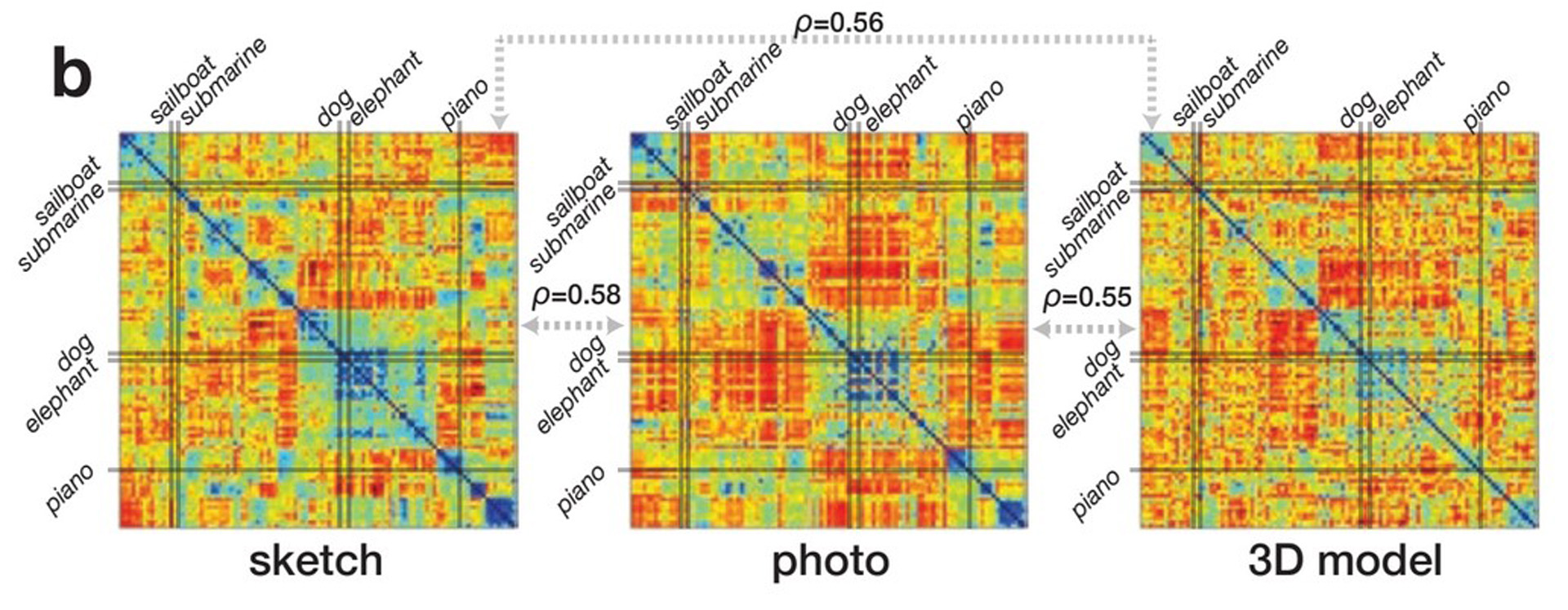

What core visual properties are preserved across these diverse modalities such that reliable recognition is possible? Understanding the specific “pixel invariants” that are common to a photograph, line drawing, and 3D-rendered image of the same object is a challenge that lies at the heart of visual abstraction. In this work, we present a computational approach to extracting and quantifying such similarities.
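The abstract does not spell out how similarity is quantified. As a hedged illustration only, one standard technique for this kind of question is representational similarity analysis (RSA): describe each object in each modality by a feature vector, build a dissimilarity matrix over all object pairs within a modality, and then correlate those matrices across modalities. The sketch below assumes the feature vectors already exist (for example, activations from some pretrained network); the function names and toy feature values are hypothetical, and it is not presented as the authors' method.

```python
import math
from itertools import combinations


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (assumes neither is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def rdm_upper(features):
    """Upper triangle of a representational dissimilarity matrix.

    features[i] is the (hypothetical) feature vector for object i;
    dissimilarity between two objects is 1 - Pearson r.
    """
    return [1.0 - pearson(features[i], features[j])
            for i, j in combinations(range(len(features)), 2)]


def cross_modal_similarity(feats_a, feats_b):
    """Spearman correlation between the RDMs of two modalities.

    Both lists must describe the same objects in the same order.
    """
    if len(feats_a) != len(feats_b):
        raise ValueError("modalities must cover the same objects")
    return spearman(rdm_upper(feats_a), rdm_upper(feats_b))


# Hypothetical toy features: 4 objects, 4 feature dimensions each.
photos = [[1.0, 2.0, 3.0, 4.0], [4.0, 3.0, 2.0, 1.0],
          [1.0, 3.0, 2.0, 4.0], [2.0, 1.0, 4.0, 3.0]]
# Sketch features as a rescaled copy of the photo features: identical similarity structure.
sketches = [[2.0 * v + 1.0 for v in f] for f in photos]
# Render features where objects 2 and 3 trade vectors: structure only partly preserved.
renders = [photos[0], photos[1], photos[3], photos[2]]

print(round(cross_modal_similarity(photos, sketches), 4))  # 1.0
print(round(cross_modal_similarity(photos, renders), 4))   # 0.8857
```

A value near 1 means the two modalities order object pairs by dissimilarity in nearly the same way. Spearman correlation is used for the second-level comparison because it depends only on the rank order of dissimilarities, not on their absolute scale, which can differ a great deal between, say, sparse line drawings and photographs.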
