“Dynamic terrain traversal skills using reinforcement learning”

Title:

- Dynamic terrain traversal skills using reinforcement learning

Session/Category Title: Taking Control

Abstract:

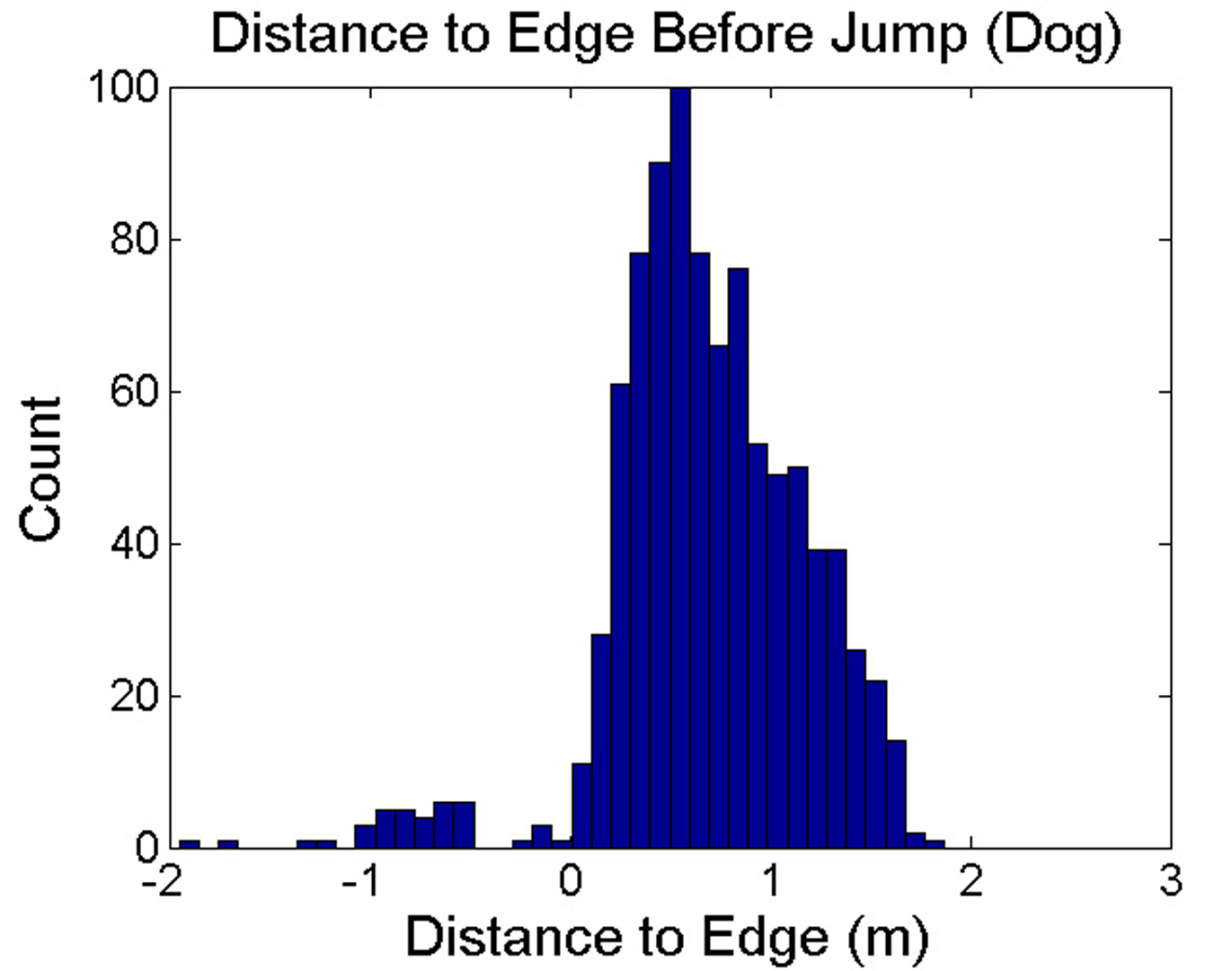

The locomotion skills developed for physics-based characters most often target flat terrain. However, much of their potential lies in the creation of dynamic, momentum-based motions across more complex terrains. In this paper, we learn controllers that allow simulated characters to traverse terrains with gaps, steps, and walls using highly dynamic gaits. This is achieved using reinforcement learning, with careful attention given to the action representation; non-parametric approximation of both the value function and the policy; epsilon-greedy exploration; and the learning of a good state distance metric. The methods enable a 21-link planar dog and a 7-link planar biped to navigate challenging sequences of terrain using bounding and running gaits. We evaluate the impact of the key features of our skill learning pipeline on the resulting performance.
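To make two of the ingredients named in the abstract concrete, the following is a minimal Python sketch of epsilon-greedy action selection on top of a nearest-neighbor (non-parametric) value estimate. It is an illustration under stated assumptions, not the paper's implementation: the function names, the toy sample set, the action labels, and the fixed per-dimension weights standing in for a learned state distance metric are all hypothetical.

```python
import random

# Hypothetical stored experience: (state vector, action label, value estimate).
SAMPLES = [
    ((0.0, 0.0), "hop", 1.0),
    ((1.0, 0.0), "leap", 5.0),
    ((0.0, 1.0), "leap", 0.5),
]
ACTIONS = ["hop", "leap"]


def weighted_sq_distance(a, b, weights):
    """Weighted squared Euclidean distance; the weights stand in for a state metric."""
    return sum(w * (x - y) ** 2 for x, y, w in zip(a, b, weights))


def estimate_value(samples, state, action, weights):
    """Non-parametric estimate: value of the nearest stored sample with this action."""
    candidates = [
        (weighted_sq_distance(s, state, weights), v)
        for s, a, v in samples
        if a == action
    ]
    if not candidates:
        return float("-inf")  # no experience with this action yet
    return min(candidates, key=lambda c: c[0])[1]


def epsilon_greedy(samples, state, actions, weights, epsilon, rng):
    """With probability epsilon explore at random, otherwise pick the best-valued action."""
    if rng.random() < epsilon:
        return rng.choice(actions)
    return max(actions, key=lambda a: estimate_value(samples, state, a, weights))


if __name__ == "__main__":
    rng = random.Random(0)
    query = (0.9, 0.1)
    print(epsilon_greedy(SAMPLES, query, ACTIONS, (1.0, 1.0), 0.1, rng))
```

Setting `epsilon` to 0 makes the choice purely greedy, while larger values trade exploitation for exploration. Changing the weights changes which stored sample counts as nearest, which is why the choice of distance metric matters for this kind of approximator.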
