“Adaptive Local Basis Functions for Shape Completion” by Ying, Shao, Wang, Yang and Zhou

Title:

- Adaptive Local Basis Functions for Shape Completion

Session/Category Title: Diffusion For Geometry

Abstract:

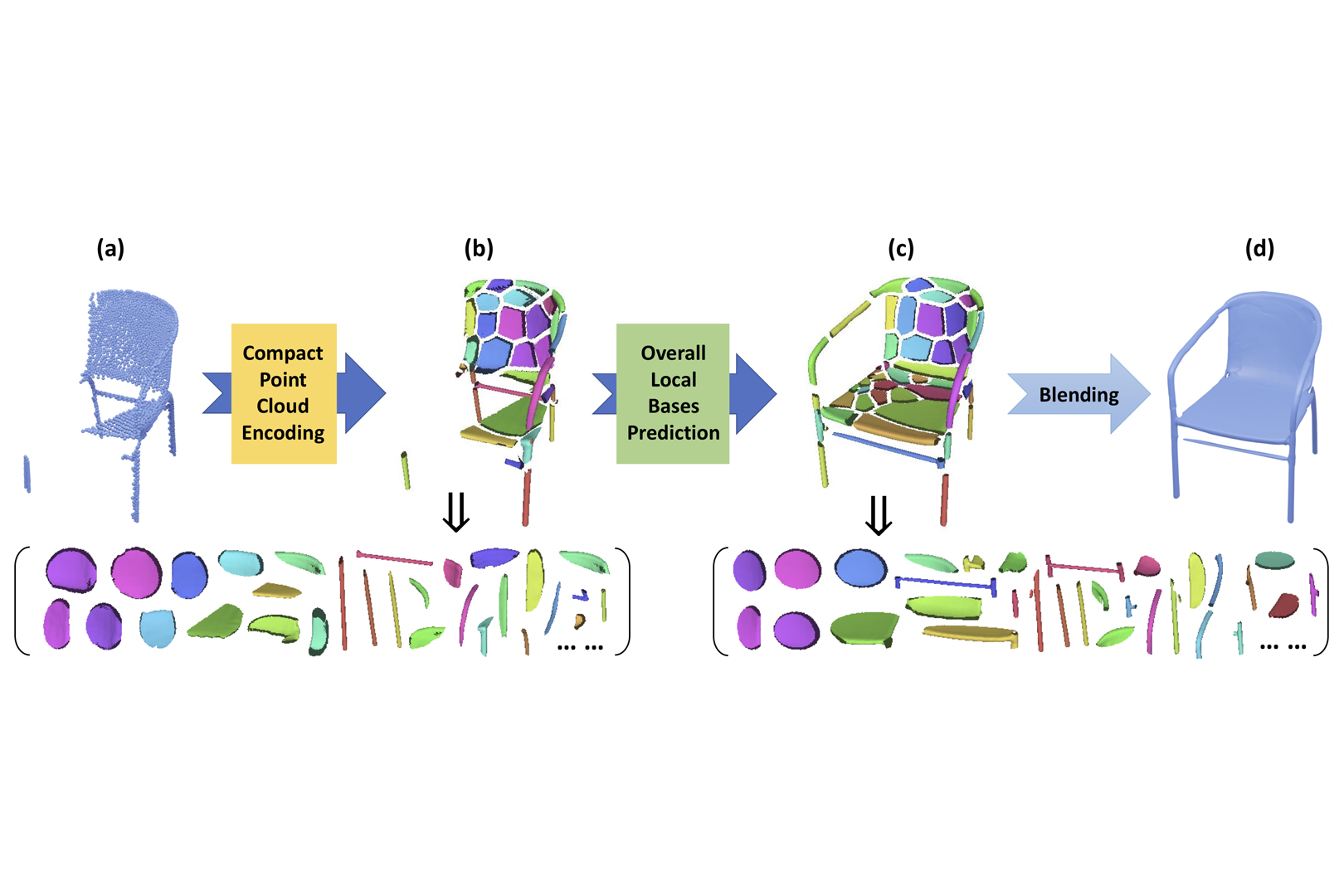

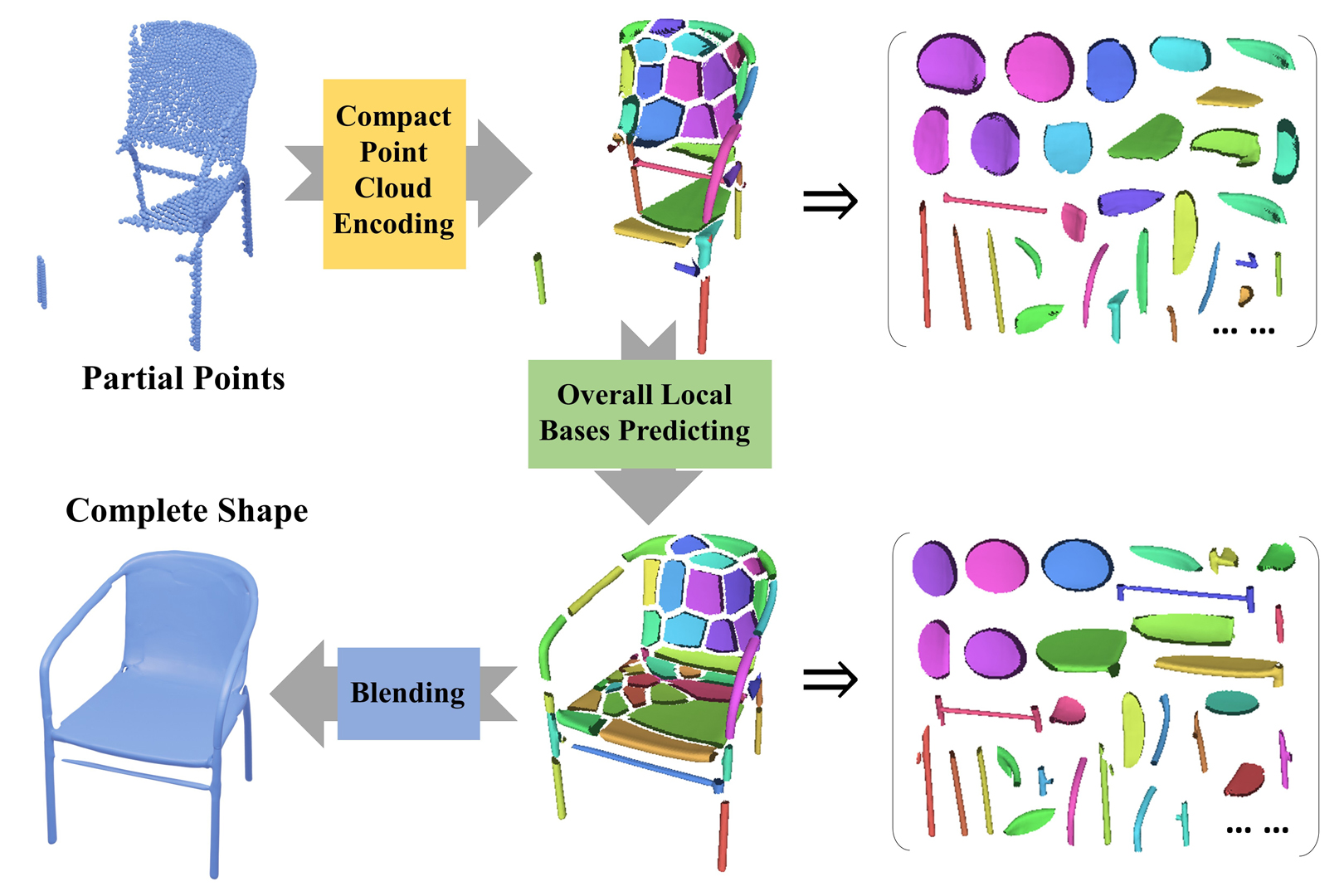

In this paper, we focus on the task of 3D shape completion from partial point clouds using deep implicit functions. Existing methods use either voxelized basis functions or basis functions drawn from a fixed family (e.g., Gaussians), which leads to high computational costs or limited shape expressivity, respectively. In contrast, our method employs adaptive local basis functions, which are learned end-to-end and are not restricted to a particular form. Based on these basis functions, we present a local-to-local shape completion framework. Our algorithm learns a sparse parameterization with a small number of basis functions while preserving local geometric details during completion. Quantitative and qualitative experiments demonstrate that our method outperforms state-of-the-art methods in shape completion, detail preservation, generalization to unseen geometries, and computational cost. Code and data for this paper are available at https://github.com/yinghdb/Adaptive-Local-Basis-Functions.
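The abstract does not give the exact formulation, so the following is only a minimal illustrative sketch of the general idea behind a local-basis implicit function, not the authors' method. It assumes a sparse set of basis centers, each with a latent code. A shared MLP maps (query offset from the center, code) to a free-form local value, and the local values are blended into one global implicit value. The class name `LocalBasisImplicit`, the two-layer MLP with random weights (standing in for parameters that would be learned end-to-end), and the normalized Gaussian blending weights with bandwidth `sigma` are all hypothetical placeholders, not taken from the paper.

```python
import numpy as np


class LocalBasisImplicit:
    """Hypothetical sketch: implicit value = weighted blend of K local basis functions.

    Each basis i has a center c_i and a latent code z_i. A shared MLP decodes
    (x - c_i, z_i) into a local value. The normalized Gaussian blending weights
    are an illustrative assumption, not the paper's formulation.
    """

    def __init__(self, centers, codes, hidden=32, sigma=0.2, seed=0):
        self.centers = np.asarray(centers, dtype=float)  # (K, 3) basis centers
        self.codes = np.asarray(codes, dtype=float)      # (K, D) per-basis latent codes
        self.sigma = sigma
        rng = np.random.default_rng(seed)
        in_dim = 3 + self.codes.shape[1]
        # Random weights stand in for parameters that would be learned end-to-end.
        self.W1 = rng.normal(scale=0.5, size=(in_dim, hidden))
        self.b1 = np.zeros(hidden)
        self.W2 = rng.normal(scale=0.5, size=(hidden, 1))
        self.b2 = np.zeros(1)

    def local_values(self, x):
        """Evaluate every local basis function at queries x (N, 3); returns (N, K)."""
        x = np.asarray(x, dtype=float)
        n, k = x.shape[0], self.centers.shape[0]
        offsets = x[:, None, :] - self.centers[None, :, :]    # (N, K, 3) local coordinates
        codes = np.broadcast_to(self.codes[None], (n, k, self.codes.shape[1]))
        feats = np.concatenate([offsets, codes], axis=-1)      # (N, K, 3 + D)
        h = np.maximum(feats @ self.W1 + self.b1, 0.0)         # ReLU hidden layer
        return (h @ self.W2 + self.b2)[..., 0]                 # (N, K)

    def weights(self, x):
        """Normalized Gaussian blending weights (N, K); each row sums to 1."""
        x = np.asarray(x, dtype=float)
        d2 = np.sum((x[:, None, :] - self.centers[None]) ** 2, axis=-1)
        logits = -d2 / (2.0 * self.sigma ** 2)
        logits -= logits.max(axis=1, keepdims=True)  # subtract row max for numerical stability
        w = np.exp(logits)
        return w / w.sum(axis=1, keepdims=True)

    def __call__(self, x):
        """Blend the local values into one implicit value per query; returns (N,)."""
        return np.sum(self.weights(x) * self.local_values(x), axis=1)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    field = LocalBasisImplicit(rng.uniform(-1, 1, size=(8, 3)), rng.normal(size=(8, 16)))
    queries = rng.uniform(-1, 1, size=(5, 3))
    print(field(queries).shape)  # (5,)
```

Because each local function only sees offsets relative to its own center, a query near one center is dominated by that basis, which is the locality property a sparse, small set of bases relies on. In a real system the decoder and codes would be trained, and the blending rule would follow the paper rather than this placeholder.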
