“Practical motion capture in everyday surroundings” by Vlasic, Adelsberger, Vannucci, Barnwell, Gross, et al. …

Title:

- Practical motion capture in everyday surroundings

Abstract:

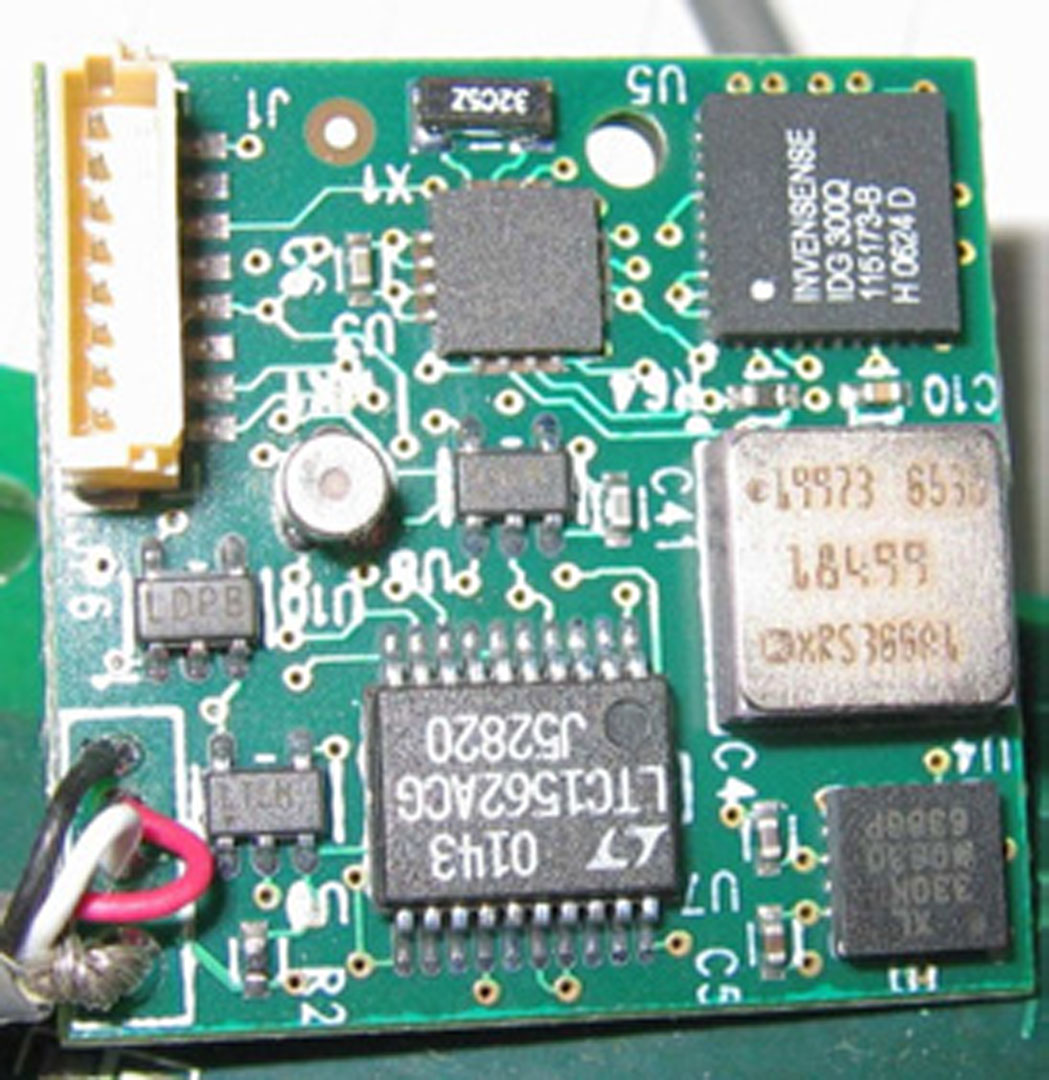

Commercial motion-capture systems produce excellent in-studio reconstructions, but offer no comparable solution for acquisition in everyday environments. We present a system for acquiring motions almost anywhere. This wearable system gathers ultrasonic time-of-flight and inertial measurements with a set of inexpensive miniature sensors worn on the garment. After recording, the information is combined using an Extended Kalman Filter to reconstruct joint configurations of a body. Experimental results show that even motions that are traditionally difficult to acquire are recorded with ease within their natural settings. Although our prototype does not reliably recover the global transformation, we show that the resulting motions are visually similar to the original ones, and that the combined acoustic and inertial system reduces the drift commonly observed in purely inertial systems. Our final results suggest that this system could become a versatile input device for a variety of augmented-reality applications.
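The abstract describes fusing ultrasonic time-of-flight ranges with inertial measurements in an Extended Kalman Filter so that the drift of purely inertial tracking is held in check. The sketch that follows illustrates that idea on a deliberately tiny, hypothetical problem: one hinge joint whose angle is estimated from a biased gyroscope plus a single ultrasonic range between a fixed source and the segment tip. The segment length, source position, noise levels, gyro bias, update rates, and the names `simulate`, `tip_distance`, and `tip_distance_jacobian` are illustrative assumptions, not the authors' sensor layout, state vector, or filter (theirs reconstructs full-body joint configurations).

```python
import math
import random

# Hypothetical 1-DOF toy, NOT the paper's setup: a segment of length SEGMENT_M
# rotates about a joint at the origin. A gyroscope reports angular rate with an
# unknown constant bias; an ultrasonic time-of-flight reading gives the distance
# from a fixed source at SOURCE to a receiver at the segment tip.
SPEED_OF_SOUND = 343.0   # m/s, speed of sound in air at roughly 20 degrees C
SEGMENT_M = 0.4          # assumed segment length (m)
SOURCE = (0.0, -0.3)     # assumed source position in the joint frame (m)


def tip_distance(theta):
    """Distance from the ultrasonic source to the segment tip at angle theta."""
    dx = SEGMENT_M * math.cos(theta) - SOURCE[0]
    dy = SEGMENT_M * math.sin(theta) - SOURCE[1]
    return math.hypot(dx, dy)


def tip_distance_jacobian(theta):
    """d(distance)/d(theta), used to linearize the range measurement."""
    return SEGMENT_M * (SOURCE[0] * math.sin(theta)
                        - SOURCE[1] * math.cos(theta)) / tip_distance(theta)


def simulate(steps=1000, dt=0.01, gyro_bias=0.05, gyro_noise=0.01,
             range_noise=0.002, range_every=5, seed=1):
    rng = random.Random(seed)
    theta_true = 0.0
    theta_gyro = 0.0                  # pure inertial dead reckoning
    x = [0.0, 0.0]                    # EKF state: [angle (rad), gyro bias (rad/s)]
    P = [[0.01, 0.0], [0.0, 0.01]]    # state covariance
    q_theta, q_bias = 1e-6, 1e-8      # process noise (assumed tuning values)
    r = range_noise ** 2              # range measurement variance (m^2)
    sq_err = 0.0
    for k in range(steps):
        omega = 0.8 * math.pi * math.cos(math.pi * k * dt)  # true angular rate
        omega_meas = omega + gyro_bias + rng.gauss(0.0, gyro_noise)
        theta_true += omega * dt
        theta_gyro += omega_meas * dt

        # EKF predict: angle integrates the bias-corrected rate; bias is constant.
        x[0] += (omega_meas - x[1]) * dt
        # P = F P F^T + Q with F = [[1, -dt], [0, 1]]
        p00 = P[0][0] - dt * (P[1][0] + P[0][1]) + dt * dt * P[1][1] + q_theta
        p01 = P[0][1] - dt * P[1][1]
        p11 = P[1][1] + q_bias
        P = [[p00, p01], [p01, p11]]

        if (k + 1) % range_every == 0:
            # Simulated time of flight, converted back to a distance z = c * t.
            tof = (tip_distance(theta_true)
                   + rng.gauss(0.0, range_noise)) / SPEED_OF_SOUND
            z = SPEED_OF_SOUND * tof
            h = tip_distance_jacobian(x[0])   # measurement Jacobian H = [h, 0]
            s = h * P[0][0] * h + r           # innovation variance
            k0 = P[0][0] * h / s              # Kalman gain for the angle
            k1 = P[1][0] * h / s              # Kalman gain for the bias
            innov = z - tip_distance(x[0])
            x[0] += k0 * innov
            x[1] += k1 * innov
            # P = (I - K H) P
            P = [[(1 - k0 * h) * P[0][0], (1 - k0 * h) * P[0][1]],
                 [P[1][0] - k1 * h * P[0][0], P[1][1] - k1 * h * P[0][1]]]
        sq_err += (x[0] - theta_true) ** 2
    return {
        "gyro_final_error": abs(theta_gyro - theta_true),
        "ekf_final_error": abs(x[0] - theta_true),
        "ekf_rms_error": math.sqrt(sq_err / steps),
        "bias_estimate": x[1],
    }


if __name__ == "__main__":
    print(simulate())
```

With the default parameters, gyro-only dead reckoning accumulates roughly bias × duration of error (about 0.5 rad over 10 s), while the range updates keep the filtered angle bounded and let the filter estimate the bias. Carrying the gyro bias as a state variable is one common design choice for such a fusion filter; the paper's formulation may differ.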
