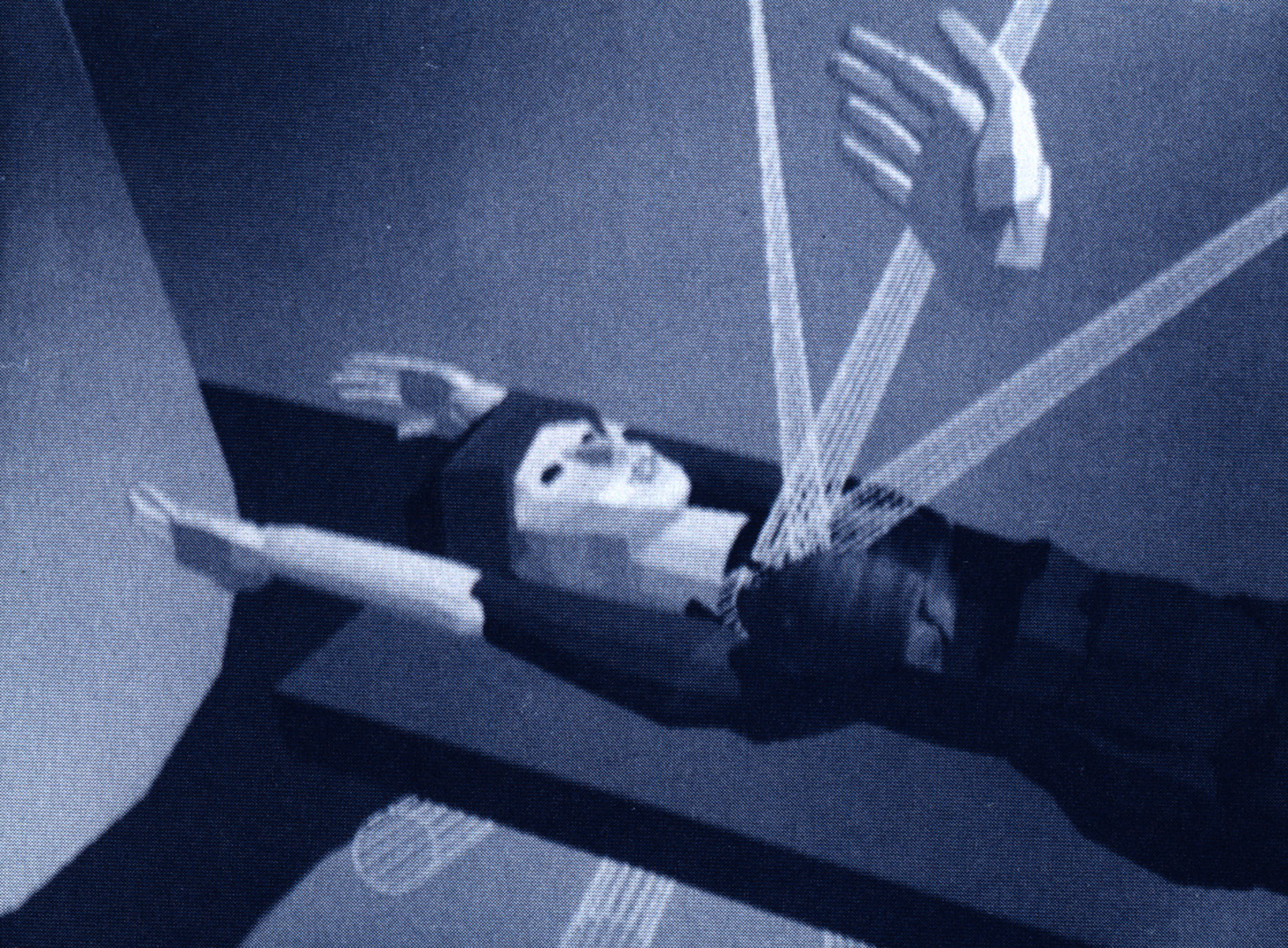

“Radiation Therapy Treatment Planning with a Head-Mounted Display” by Balu, Chung, Crittenden and Yoo

Title:

- Radiation Therapy Treatment Planning with a Head-Mounted Display

Program Title:

- Demonstrations and Displays

Description:

This application demonstrates a user interface for radiotherapy beam targeting based on a head-mounted display. Head-mounted displays immerse their wearers in a computer-generated virtual world, thereby providing proprioceptive and vestibular cues for steering and navigation.

The basic goal of radiation treatment of a cancerous tumor is to irradiate the tumor with a dose sufficient to kill the malignant cells without further harming the patient. Ideally, only tumor cells would receive radiation, and healthy tissue would receive no radiation and suffer no damage. In practice, however, the use of treatment beams to deliver the radiation makes it impossible to avoid exposing the healthy tissue surrounding the tumor. Careful planning is required to ensure that the treatment applies a uniform lethal dose to the tumor while delivering only a tolerable dose to healthy tissue.

Optimal treatment planning requires thorough comprehension of the three-dimensional arrangement of the patient’s anatomy. Understanding the spatial interrelationships among anatomical structures enables the radiation therapist to find the best treatment configuration (orientations, cross-sectional shapes, and relative strengths of the treatment beams) that delivers the required dosage to the tumor while minimally affecting healthy tissue. Current radiation therapy planning tools are designed to aid the therapist in understanding the spatial arrangement of the patient’s anatomical structures and how proposed beam configurations would affect them.
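As a rough illustration of what "relative strengths of the treatment beams" means for dose, the sketch below sums weighted per-beam contributions and checks them against a prescription and a tolerance. It is a toy model only: the `Beam` fields, the function names, and all numbers are hypothetical and are not taken from the demonstrated system, which would compute dose from 3D patient and beam geometry.

```python
from dataclasses import dataclass


@dataclass
class Beam:
    weight: float        # relative strength of the beam (hypothetical units)
    tumor_dose: float    # dose to the tumor per unit weight (assumed value)
    healthy_dose: float  # dose to one healthy structure per unit weight (assumed value)


def plan_doses(beams):
    """Return (tumor dose, healthy-tissue dose) as weighted sums over all beams."""
    tumor = sum(b.weight * b.tumor_dose for b in beams)
    healthy = sum(b.weight * b.healthy_dose for b in beams)
    return tumor, healthy


def plan_is_acceptable(beams, prescribed, tolerance):
    """True if the tumor reaches the prescribed dose and healthy tissue stays within tolerance."""
    tumor, healthy = plan_doses(beams)
    return tumor >= prescribed and healthy <= tolerance


# Two hypothetical beams that overlap at the tumor but pass through
# the healthy structure to different degrees.
beams = [Beam(1.0, 30.0, 10.0), Beam(1.0, 30.0, 5.0)]
print(plan_doses(beams))
print(plan_is_acceptable(beams, prescribed=60.0, tolerance=20.0))
```

Changing a beam's orientation would, in a real planner, change its per-unit contributions to each structure; here those contributions are simply given as inputs.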

Three-dimensional treatment planning has been developed in response to the drawbacks of 2D treatment planning using the simulator. As imaging and display technology improved, along with computational power, it became feasible to build interactive systems that helped the radiotherapist understand the wealth of three-dimensional information available.

Typically, a 3D treatment planning system runs on a high-resolution graphics workstation and comprises three modules: an interactive patient data evaluation module, a module to compute doses using 3D patient and beam geometry data, and an interactive dose display module. The research represented by this demonstration is concerned with the first module – the exploration of the patient anatomy to find the optimal treatment beam configuration.

The simple “walkaround” mode allows users to walk around and study a full-sized virtual patient in much the same manner as they would a real patient. Using a manual input device, the user can grab and orient virtual treatment beams.

Unlike a conventional graphics display, whose image moves out of the user’s field of view if the user looks away, the head-mounted display’s screens are fixed relative to the user’s head, so its images are always in the wearer’s field of view regardless of head position and orientation. Input from a position and orientation tracker enables the host computer to generate images that correspond to the user’s current head position and orientation. The real-time generation and display of these images on the head-mounted display visually immerses the user in a three-dimensional virtual world.
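The step from tracker input to a matching image can be sketched as a change of coordinates: each tracker reading gives a head pose, and the renderer expresses world-space points in the head's frame before drawing them. The code below is a minimal sketch under stated assumptions, not the UNC software: it assumes the tracker reports orientation as a unit quaternion `(w, x, y, z)`, a right-handed frame with the vertical axis as y, and a viewer looking along the head's negative z axis. The function names are hypothetical.

```python
import math


def quat_to_matrix(w, x, y, z):
    """Return the 3x3 rotation matrix (row-major) for a unit quaternion."""
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]


def world_to_head(point, head_pos, head_quat):
    """Express a world-space point in the head's coordinate frame.

    The head pose maps head coordinates to world coordinates
    (p_world = R * p_head + t), so the view transform is its inverse:
    p_head = R^T * (p_world - t).
    """
    r = quat_to_matrix(*head_quat)
    d = [point[i] - head_pos[i] for i in range(3)]
    # Multiplying by the transpose of R inverts the rotation.
    return [sum(r[k][i] * d[k] for k in range(3)) for i in range(3)]


# Hypothetical reading: head 1.7 m above the floor, turned 90 degrees
# about the vertical axis.
half = math.radians(90.0) / 2.0
head_quat = (math.cos(half), 0.0, math.sin(half), 0.0)
head_pos = (0.0, 1.7, 0.0)

# A point of the virtual patient 2 m away along the world's negative x axis.
p_head = world_to_head((-2.0, 1.7, 0.0), head_pos, head_quat)
print(p_head)
```

Under these conventions the point ends up on the head's negative z axis, that is, straight ahead of the wearer. Repeating the transform for every new tracker reading is what keeps the rendered view consistent with the user's physical movement.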

Using the head-mounted display, navigation and steering within the virtual world are completely intuitive and natural. Because users are free to physically move about the virtual world, they can take full advantage of proprioceptive and vestibular cues to develop an understanding of the spatial relationships present in the virtual world, and to maintain orientation. This contrasts with the screen-based interface used in current state-of-the-art 3D planning tools, which fixes the user in virtual space while the model translates and rotates in virtual space. The user is then able to use only visual cues to maintain orientation, which may be difficult in a visually unfamiliar virtual world. Steering through the virtual world becomes more intuitive with the head-mounted display than with conventional displays. Desired changes in view no longer need to be decomposed into a sequence of knob turns and mouse movements. They can be naturally effected by turning one’s head and taking a step.

Other Information:

Sponsors: National Institutes of Health, Defense Advanced Research Projects Agency, National Science Foundation, Office of Naval Research

Hardware: VPL EyePhone models 1 and 2, Polhemus Navigational Sciences 3Space magnetic tracker, Pixel-Planes 5, Macintosh IIci

Software: UNC-developed software

Application: Medical: treatment simulation

Type of System: Player, single-user

Interaction Class: Immersive, inclusive