“Perceptually-Based Foveated Virtual Reality” by Patney, Kim, Salvi, Kaplanyan, Wyman, et al. …

Entry Number: 17

Title:

- Perceptually-Based Foveated Virtual Reality

Description:

Humans have two distinct vision systems: foveal and peripheral vision. Foveal vision is sharp and detailed, while peripheral vision lacks fidelity. This difference in characteristics enables foveated rendering, a recently popular approach that seeks to increase rendering performance by lowering image quality in the periphery.
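
To make "lowering image quality in the periphery" concrete, the sketch below maps each pixel's angular distance from the gaze point (its eccentricity) to a fraction of full shading work, then averages that fraction over the image as a rough proxy for rendering cost. It is a minimal illustration under stated assumptions, not the method of this work: the uniform `pixels_per_degree` mapping, the 5° foveal radius, the linear 20° falloff, the 1/16 minimum rate, and all function names are hypothetical.

```python
import math


def eccentricity_deg(px, py, gaze_x, gaze_y, pixels_per_degree):
    """Angular distance in degrees between a pixel and the gaze point.

    Hypothetical simplification: assumes a uniform pixels-per-degree mapping,
    which ignores lens distortion in a real headset.
    """
    return math.hypot(px - gaze_x, py - gaze_y) / pixels_per_degree


def shading_rate(ecc_deg, fovea_deg=5.0, falloff_deg=20.0, min_rate=1.0 / 16.0):
    """Fraction of full shading work spent at a given eccentricity.

    Hypothetical policy: full rate inside the fovea, a linear drop to
    min_rate over falloff_deg degrees, then constant in the far periphery.
    """
    if ecc_deg <= fovea_deg:
        return 1.0
    t = min((ecc_deg - fovea_deg) / falloff_deg, 1.0)
    return 1.0 + t * (min_rate - 1.0)


def relative_cost(width, height, gaze_x, gaze_y, pixels_per_degree):
    """Mean shading rate over the image: cost relative to full-rate rendering."""
    total = 0.0
    for y in range(height):
        for x in range(width):
            # Sample eccentricity at pixel centers.
            ecc = eccentricity_deg(x + 0.5, y + 0.5, gaze_x, gaze_y, pixels_per_degree)
            total += shading_rate(ecc)
    return total / (width * height)
```

For example, `relative_cost(960, 1080, 480.0, 540.0, pixels_per_degree=10.0)` would estimate the relative cost of a hypothetical 960×1080 per-eye image with the gaze centered, again under the assumed 10 pixels per degree. A real renderer would apply such a policy through hardware shading-rate controls or multi-resolution render targets rather than a per-pixel Python loop.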

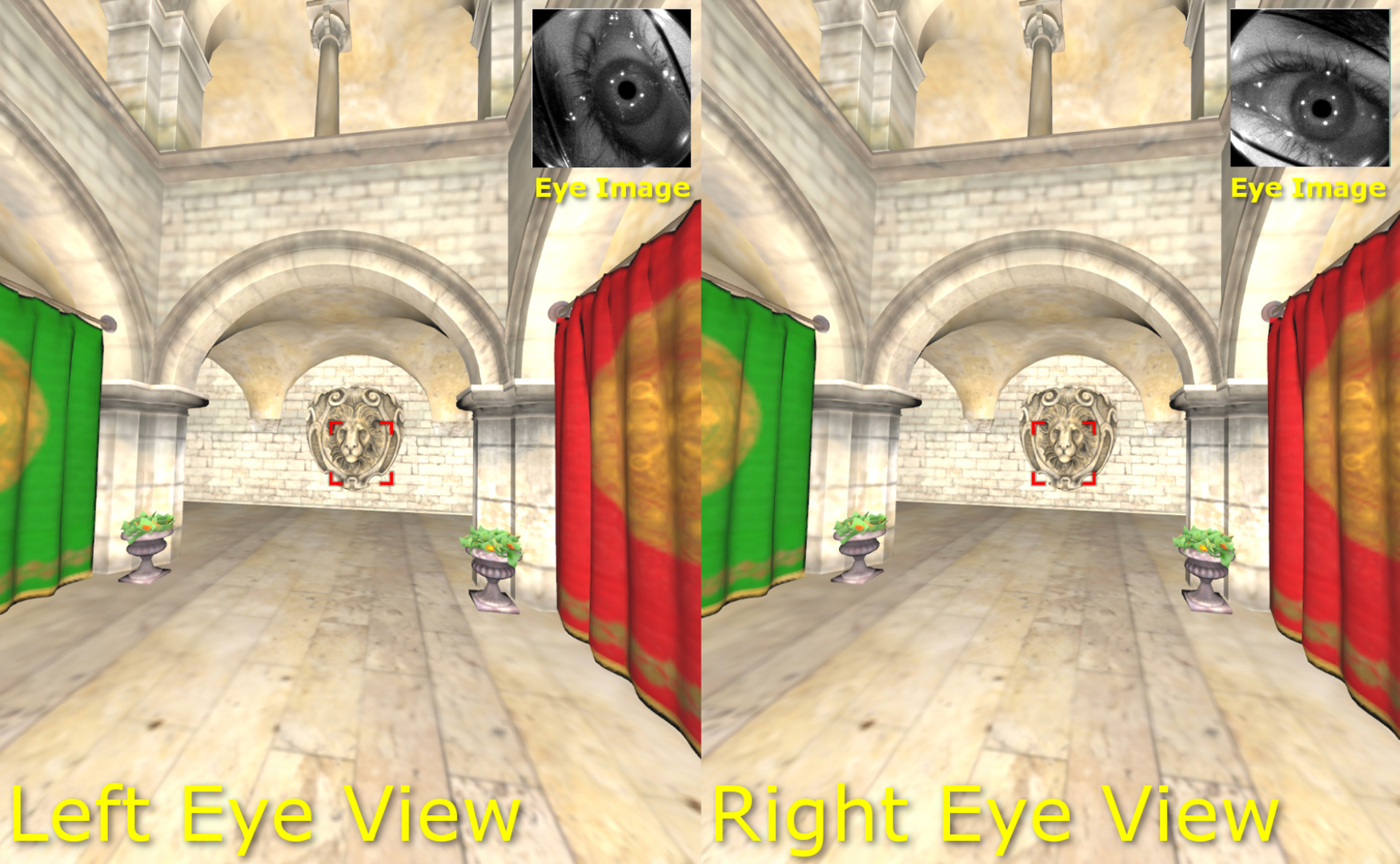

We present a set of perceptually-based methods for improving foveated rendering, running on a prototype virtual reality headset with an integrated eye tracker. Foveated rendering has previously been demonstrated on conventional displays, but it has recently become an especially attractive prospect in virtual reality (VR) and augmented reality (AR) display settings, which combine a large field of view (FOV) with high frame-rate requirements. Investigating prior work on foveated rendering, we find that some previous quality-reduction techniques can create objectionable artifacts such as temporal instability and contrast loss. Our Emerging Technologies installation demonstrates these techniques running live in a head-mounted display and compares them against our new perceptually-based foveated techniques. The new techniques enable significant reductions in rendering cost with no discernible difference in visual quality, and we show how such techniques can meet the FOV and frame-rate requirements of VR and AR displays.
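
Temporal instability in the periphery typically shows up as flicker when undersampled detail changes from frame to frame. One generic mitigation, in the spirit of the temporal supersampling work listed in the references (Karis 2014), is to blend each new frame into an exponentially accumulated history and clamp that history to the range of the current frame's local neighborhood to limit ghosting. The sketch below shows this on a single row of scalar pixels. It is an illustrative assumption, not the techniques presented here, and `taa_step`, `neighborhood_bounds`, and the blend factor `alpha = 0.1` are hypothetical.

```python
def neighborhood_bounds(frame, i, radius=1):
    """Min and max of the current frame within `radius` pixels of index i."""
    lo = max(0, i - radius)
    hi = min(len(frame), i + radius + 1)
    window = frame[lo:hi]
    return min(window), max(window)


def taa_step(history, current, alpha=0.1):
    """One frame of exponential temporal accumulation with neighborhood clamping.

    history: accumulated values from previous frames (one per pixel).
    current: freshly rendered values for this frame.
    alpha:   weight given to the new frame (hypothetical default).
    """
    out = []
    for i, (h, c) in enumerate(zip(history, current)):
        lo, hi = neighborhood_bounds(current, i)
        # Clamp history to the min/max of the current 3-tap neighborhood (limits ghosting).
        h = min(max(h, lo), hi)
        # Exponential moving average: mostly history, a little of the new frame.
        out.append((1.0 - alpha) * h + alpha * c)
    return out
```

If the middle pixel of `[0.0, x, 1.0]` alternates between 0 and 1 every frame, repeated calls to `taa_step` settle it into a small oscillation between roughly 0.47 and 0.53 instead of the full 0-to-1 flicker. The clamp is the main design trade-off: it discards stale history that no longer matches the current frame, but for high-contrast, high-frequency content it can also let some flicker back through.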

References:

Guenter, B., Finch, M., Drucker, S., Tan, D., and Snyder, J. 2012. Foveated 3D graphics. ACM Transactions on Graphics 31, 6, 164:1–164:10.

Karis, B. 2014. High-quality temporal supersampling. In Advances in Real-Time Rendering in Games, SIGGRAPH Courses.

Keyword(s):

- foveated rendering

- perceptually-based

- virtual reality

- augmented reality

Acknowledgements:

We would like to thank SMI for providing us with Oculus DK2 HMDs integrated with high-speed gaze tracking hardware.