“Time slice video synthesis by robust video alignment” by Cui, Wang, Tan and Wang

Title:

- Time slice video synthesis by robust video alignment

Session/Category Title: Video

Abstract:

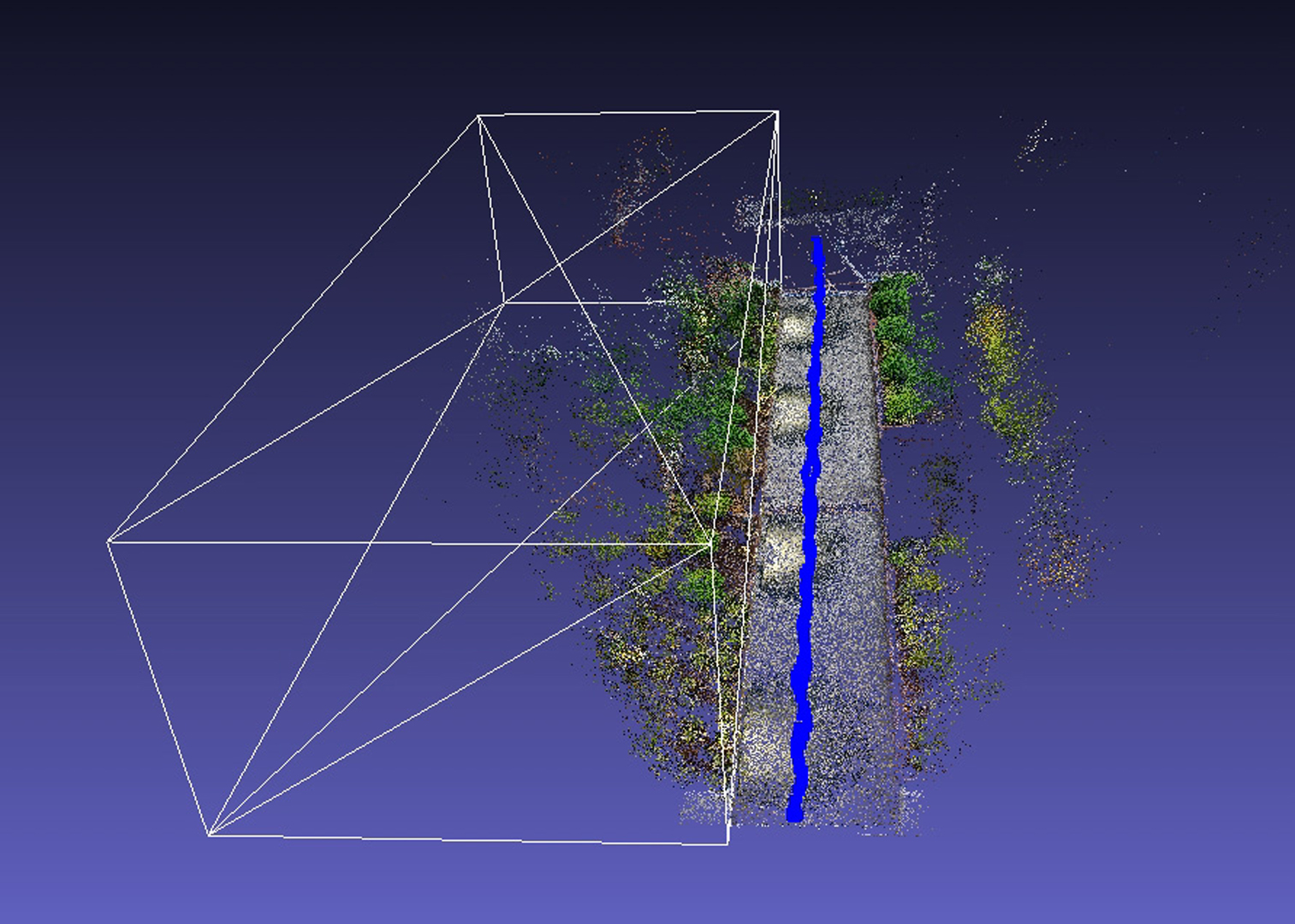

Time slice photography is a popular effect that visualizes the passing of time by aligning multiple images of the same scene, captured at different times, and stitching them into a single image. Extending this effect to video is a difficult problem, and existing solutions have had only limited success. In this paper, we propose an easy-to-use and robust system for creating time slice videos from a wide variety of consumer videos. The main technical challenge we address is how to align videos taken at different times with substantially different appearances, in the presence of moving objects and moving cameras with slightly different trajectories. To achieve a temporally stable alignment, we perform a mixed 2D-3D alignment, where a rough 3D reconstruction is used to generate sparse constraints that are integrated into a pixelwise 2D registration. We apply our method to a number of challenging scenarios, and show that we can achieve a higher quality registration than prior work. We propose a 3D user interface that allows the user to easily specify how multiple videos should be composited in space and time. Finally, we show that our alignment method can be applied to more general video editing and compositing tasks, such as object removal.
