“Perception of Scale with Distance in 3D Visualization” by Cheng and Boulanger

Conference:

Type(s):

Entry Number: 080

Title:

- Perception of Scale with Distance in 3D Visualization

Presenter(s)/Author(s):

Abstract:

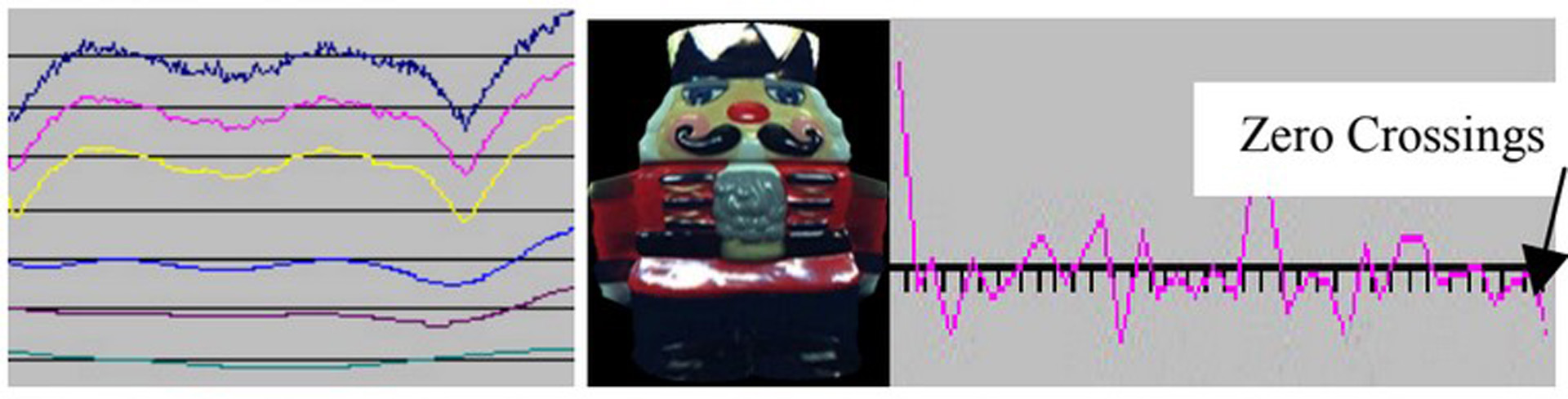

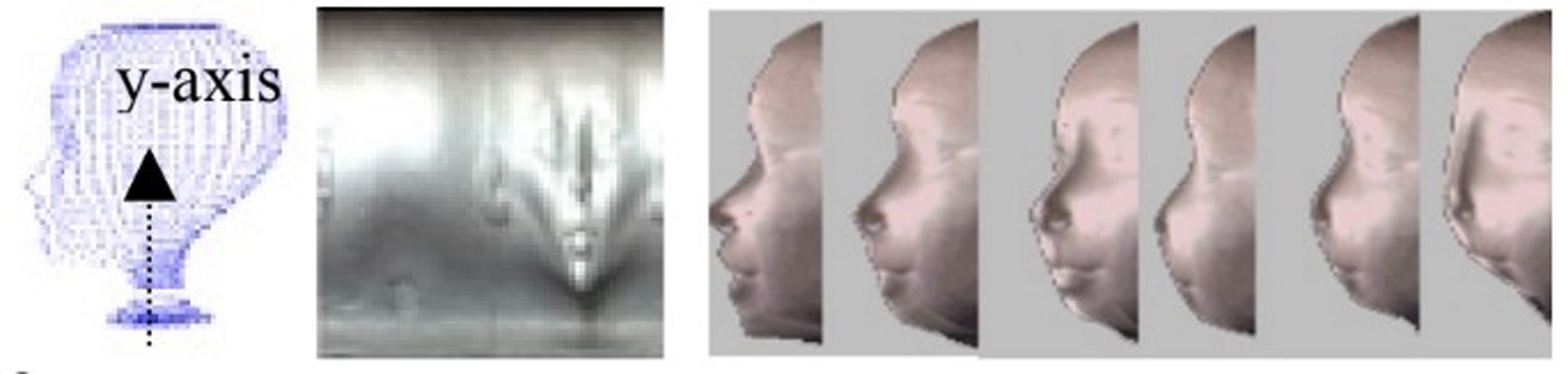

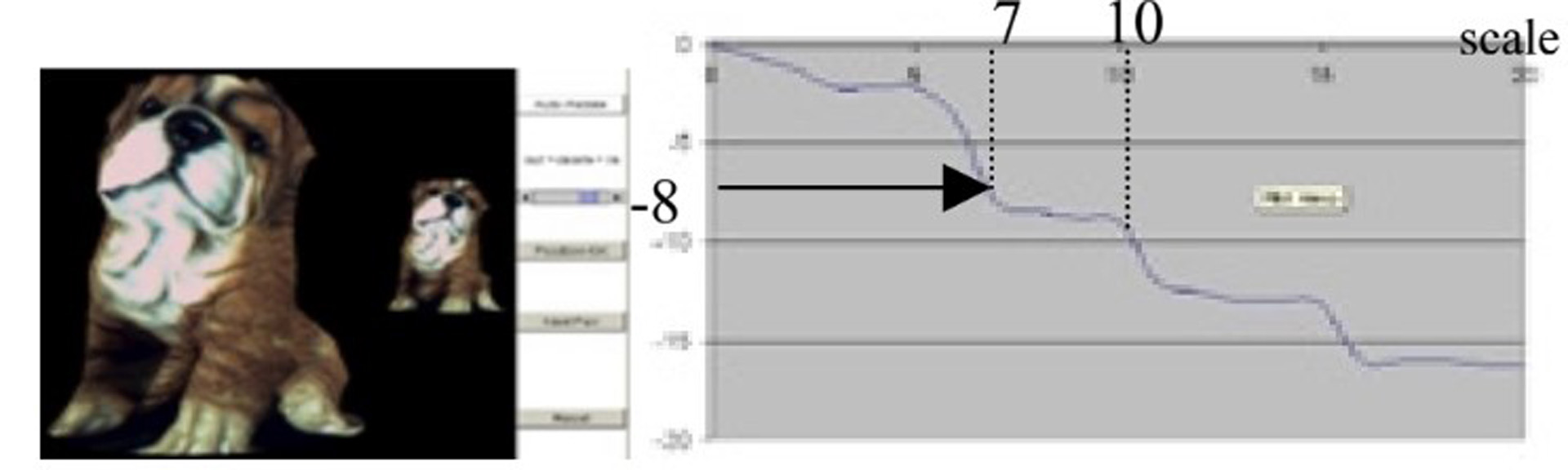

In this poster we describe preliminary work relating the perception of scale (the variance parameter in scale-space filtering) to distance, for applications in online 3D visualization. To facilitate online transmission, 3D geometry and texture can be simplified based on scale-space analysis of the surface curvature and feature point distribution. The scale-space approach differs from other simplification approaches [1,2] in two respects: a few parameters mathematically control the extent of simplification, and joint texture/mesh simplification is possible, with a trade-off between texture and mesh transmission. We outline our approach to joint TexMesh simplification using scale-space filtering (as distinct from pure surface simplification [3]), followed by a description of how human observers perceive scale for 3D objects.
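As a rough illustration of what "scale" means here, the sketch below applies Gaussian scale-space filtering in the sense of [4] to a 1D signal and counts how many local extrema survive as the scale grows. It is not the authors' TexMesh method. The signal `profile` is a hypothetical stand-in for a curvature profile, and the function names, the 3-sigma kernel truncation, and the border clamping are assumptions made for this example. Note that `sigma` is the standard deviation, so the variance mentioned in the abstract corresponds to `sigma ** 2`.

```python
import math

def gaussian_kernel(sigma):
    """Discrete Gaussian truncated at 3 sigma (an assumption), normalised to sum to 1."""
    radius = max(1, int(math.ceil(3 * sigma)))
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def smooth(signal, sigma):
    """Convolve a 1D signal with a Gaussian, clamping indices at the borders."""
    kernel = gaussian_kernel(sigma)
    radius = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)  # clamp to valid range
            acc += w * signal[j]
        out.append(acc)
    return out

def count_extrema(signal):
    """Count interior local extrema as sign changes of the first difference."""
    diffs = [b - a for a, b in zip(signal, signal[1:])]
    diffs = [d for d in diffs if abs(d) > 1e-12]  # ignore flat steps
    return sum(1 for a, b in zip(diffs, diffs[1:]) if a * b < 0)

# Hypothetical profile: one coarse undulation plus fine ripples.
profile = [math.sin(2 * math.pi * i / 200) + 0.2 * math.sin(2 * math.pi * i / 10)
           for i in range(200)]

if __name__ == "__main__":
    print("unfiltered", count_extrema(profile))
    for sigma in (1.0, 4.0, 16.0):
        print(sigma, count_extrema(smooth(profile, sigma)))
```

Larger values of `sigma` remove the fine ripples and leave only the coarse structure, which is the sense in which a single parameter controls the extent of simplification.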

References:

1. D. P. Luebke, “A Developer’s Survey of Polygonal Simplification Algorithms,” IEEE Computer Graphics and Applications, 2001.

2. H. Hoppe, “Progressive meshes,” Proceedings of SIGGRAPH 1996.

3. M. Pauly et al., “Multi-scale feature extraction on point-sampled surfaces,” Eurographics 2003, Granada, Spain.

4. A. P. Witkin, “Scale-space filtering,” IJCAI, 1983, pp. 1019–1022.

5. P. Boulanger et al., “Intrinsic filtering of range images using a physically based noise model,” Vision Interface 2002, Calgary, Canada.