“Neural best-buddies: sparse cross-domain correspondence” by Aberman, Liao, Shi, Lischinski, Chen, et al. …

Conference:

Type(s):

Entry Number: 69

Title:

- Neural best-buddies: sparse cross-domain correspondence

Session/Category Title: Image & Shape Analysis With CNNs

Presenter(s)/Author(s):

Moderator(s):

Abstract:

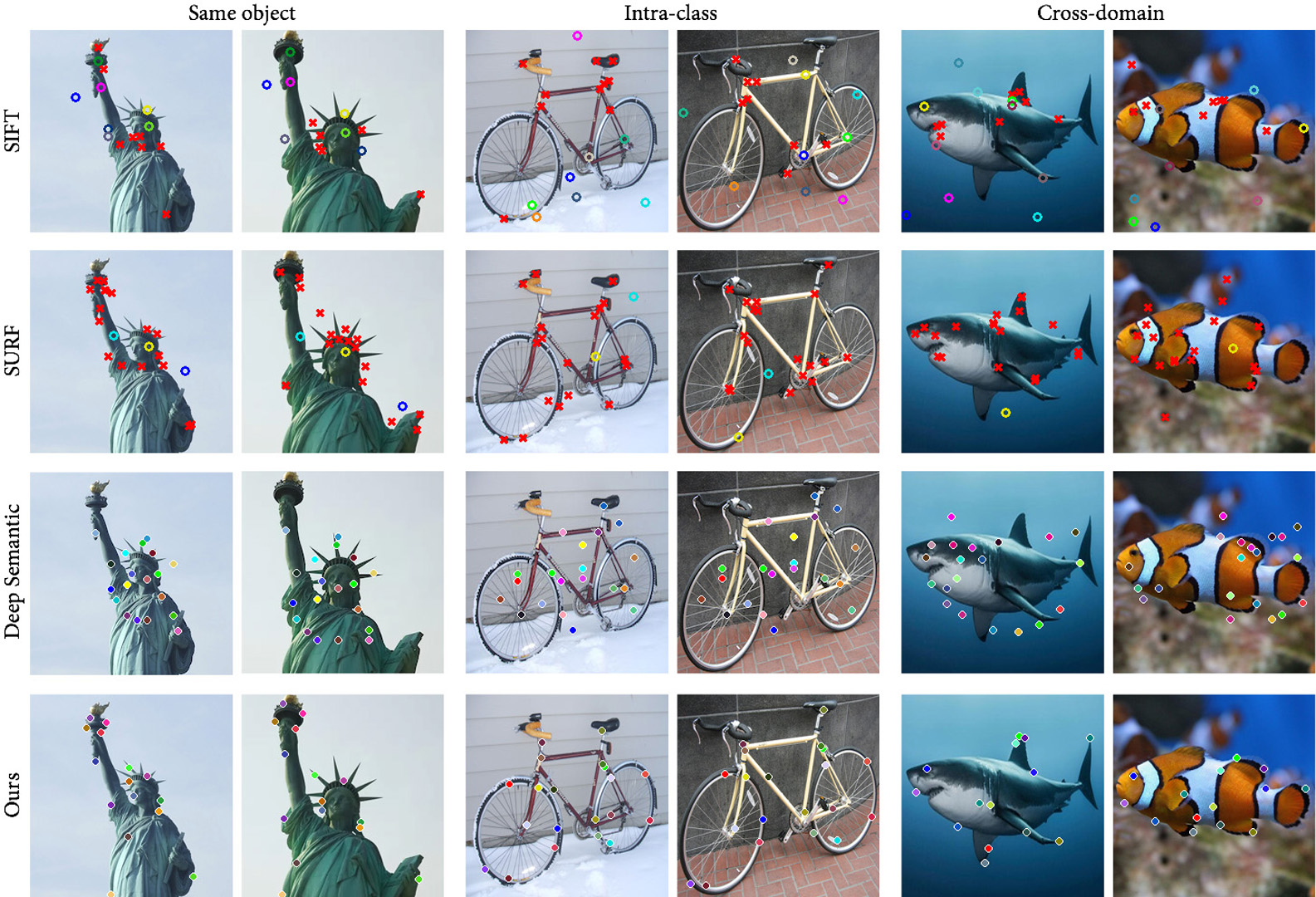

Correspondence between images is a fundamental problem in computer vision, with a variety of graphics applications. This paper presents a novel method for sparse cross-domain correspondence. Our method is designed for pairs of images where the main objects of interest may belong to different semantic categories and differ drastically in shape and appearance, yet still contain semantically related or geometrically similar parts. Our approach operates on hierarchies of deep features, extracted from the input images by a pre-trained CNN. Specifically, starting from the coarsest layer in both hierarchies, we search for Neural Best Buddies (NBB): pairs of neurons that are mutual nearest neighbors. The key idea is then to percolate NBBs through the hierarchy, while narrowing down the search regions at each level and retaining only NBBs with significant activations. Furthermore, in order to overcome differences in appearance, each pair of search regions is transformed into a common appearance.We evaluate our method via a user study, in addition to comparisons with alternative correspondence approaches. The usefulness of our method is demonstrated using a variety of graphics applications, including cross-domain image alignment, creation of hybrid images, automatic image morphing, and more.

References:

1. Connelly Barnes, Eli Shechtman, Adam Finkelstein, and Dan B Goldman. 2009. Patch-Match: A randomized correspondence algorithm for structural image editing. ACM Transactions on Graphics (TOG) 28, 3 (2009), Article no. 24. Google ScholarDigital Library

2. Connelly Barnes, Eli Shechtman, Dan B Goldman, and Adam Finkelstein. 2010. The generalized patchmatch correspondence algorithm. In Proc. ECCV. Springer, 29–43. Google ScholarDigital Library

3. Herbert Bay, Tinne Tuytelaars, and Luc Van Gool. 2006. Surf: Speeded up robust features. Computer vision-ECCV 2006 (2006), 404–417. Google ScholarDigital Library

4. Peter N Belhumeur, David W Jacobs, David J Kriegman, and Neeraj Kumar. 2013. Localizing parts of faces using a consensus of exemplars. IEEE Transactions on Pattern Analysis and Machine Intelligence 35, 12 (2013), 2930–2940. Google ScholarDigital Library

5. Martin Benning, Michael Möller, Raz Z Nossek, Martin Burger, Daniel Cremers, Guy Gilboa, and Carola-Bibiane Schönlieb. 2017. Nonlinear Spectral Image Fusion. In International Conference on Scale Space and Variational Methods in Computer Vision. Springer, 41–53.Google Scholar

6. Martin Bichsel. 1996. Automatic interpolation and recognition of face images by morphing. In Proc. Second International Conference on Automatic Face and Gesture Recognition. IEEE, 128–135. Google ScholarDigital Library

7. Dmitri Bitouk, Neeraj Kumar, Samreen Dhillon, Peter Belhumeur, and Shree K Nayar. 2008. Face swapping: automatically replacing faces in photographs. ACM Transactions on Graphics (TOG) 27, 3 (2008), 39. Google ScholarDigital Library

8. Christopher B Choy, JunYoung Gwak, Silvio Savarese, and Manmohan Chandraker. 2016. Universal correspondence network. In Advances in Neural Information Processing Systems. 2414–2422. Google ScholarDigital Library

9. Tali Dekel, Shaul Oron, Michael Rubinstein, Shai Avidan, and William T Freeman. 2015. Best-buddies similarity for robust template matching. In Proc. CVPR. IEEE, 2021–2029.Google ScholarCross Ref

10. Philipp Fischer, Alexey Dosovitskiy, and Thomas Brox. 2014. Descriptor matching with convolutional neural networks: a comparison to sift. arXiv preprint arXiv:1405.5769 (2014).Google Scholar

11. Leon A Gatys, Alexander S Ecker, and Matthias Bethge. 2015. A neural algorithm of artistic style. arXiv preprint arXiv:1508.06576 (2015).Google Scholar

12. Georgia Gkioxari, Bharath Hariharan, Ross Girshick, and Jitendra Malik. 2014. Using k-poselets for detecting people and localizing their keypoints. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. 3582–3589. Google ScholarDigital Library

13. Yoav HaCohen, Eli Shechtman, Dan B Goldman, and Dani Lischinski. 2011. Non-rigid dense correspondence with applications for image enhancement. ACM transactions on graphics (TOG) 30, 4 (2011), 70. Google ScholarDigital Library

14. Bumsub Ham, Minsu Cho, Cordelia Schmid, and Jean Ponce. 2016. Proposal flow. In Proc. CVPR. IEEE, 3475–3484.Google ScholarCross Ref

15. Chris Harris and Mike Stephens. 1988. A combined corner and edge detector.. In Proc. Alvey Vision Conference, Vol. 15. Manchester, UK, 10–5244.Google ScholarCross Ref

16. Hui Huang, Kangxue Yin, Minglun Gong, Dani Lischinski, Daniel Cohen-Or, Uri M Ascher, and Baoquan Chen. 2013. “Mind the gap”: tele-registration for structure-driven image completion. ACM Trans. Graph. 32, 6 (2013), 174–1. Google ScholarDigital Library

17. Xun Huang and Serge Belongie. 2017. Arbitrary style transfer in real-time with adaptive instance normalization. arXiv preprint arXiv:1703.06868 (2017).Google Scholar

18. Justin Johnson, Alexandre Alahi, and Li Fei-Fei. 2016. Perceptual losses for real-time style transfer and super-resolution. In Proc. ECCV. Springer, 694–711.Google Scholar

19. Seungryong Kim, Dongbo Min, Bumsub Ham, Sangryul Jeon, Stephen Lin, and Kwanghoon Sohn. 2017. FCSS: Fully convolutional self-similarity for dense semantic correspondence. arXiv preprint arXiv:1702.00926 (2017).Google Scholar

20. Iryna Korshunova, Wenzhe Shi, Joni Dambre, and Lucas Theis. 2016. Fast face-swap using convolutional neural networks. arXiv preprint arXiv:1611.09577 (2016).Google Scholar

21. Marek Kowalski, Jacek Naruniec, and Tomasz Trzcinski. 2017. Deep Alignment Network: A convolutional neural network for robust face alignment. arXiv preprint arXiv:1706.01789 (2017).Google Scholar

22. Alex Krizhevsky, Ilya Sutskever, and Geoffrey E Hinton. 2012. Imagenet classification with deep convolutional neural networks. In Advances in neural information processing systems. 1097–1105. Google ScholarDigital Library

23. Ting-ting Li, Bo Jiang, Zheng-zheng Tu, Bin Luo, and Jin Tang. 2015. Image matching using mutual k-nearest neighbor graph. In International Conference of Young Computer Scientists, Engineers and Educators. Springer, 276–283.Google Scholar

24. Jing Liao, Rodolfo S Lima, Diego Nehab, Hugues Hoppe, Pedro V Sander, and Jinhui Yu. 2014. Automating image morphing using structural similarity on a halfway domain. ACM Transactions on Graphics (TOG) 33, 5 (2014), 168. Google ScholarDigital Library

25. Jing Liao, Yuan Yao, Lu Yuan, Gang Hua, and Sing Bing Kang. 2017. Visual Attribute Transfer Through Deep Image Analogy. ACM Trans. Graph. 36, 4, Article 120 (July 2017), 15 pages. Google ScholarDigital Library

26. Tony Lindeberg. 2015. Image matching using generalized scale-space interest points. Journal of Mathematical Imaging and Vision 52, 1 (2015), 3–36. Google ScholarDigital Library

27. Ce Liu, Jenny Yuen, and Antonio Torralba. 2011. Sift flow: Dense correspondence across scenes and its applications. IEEE Transactions on Pattern Analysis and Machine Intelligence 33, 5 (2011), 978–994. Google ScholarDigital Library

28. Jonathan L Long, Ning Zhang, and Trevor Darrell. 2014. Do convnets learn correspondence?. In Advances in Neural Information Processing Systems. 1601–1609. Google ScholarDigital Library

29. David G Lowe. 2004. Distinctive image features from scale-invariant keypoints. International Journal of Computer Vision 60, 2 (2004), 91–110. Google ScholarDigital Library

30. Aravindh Mahendran and Andrea Vedaldi. 2015. Understanding deep image representations by inverting them. In Proceedings of the IEEE conference on computer vision and pattern recognition. 5188–5196.Google ScholarCross Ref

31. Aude Oliva, Antonio Torralba, and Philippe G Schyns. 2006. Hybrid images. In ACM Transactions on Graphics (TOG), Vol. 25. ACM, 527–532. Google ScholarDigital Library

32. Patrick Pérez, Michel Gangnet, and Andrew Blake. 2003. Poisson image editing. In ACM Transactions on graphics (TOG), Vol. 22. ACM, 313–318. Google ScholarDigital Library

33. Olga Russakovsky, Jia Deng, Hao Su, Jonathan Krause, Sanjeev Satheesh, Sean Ma, Zhiheng Huang, Andrej Karpathy, Aditya Khosla, Michael Bernstein, Alexander C. Berg, and Li Fei-Fei. 2015. ImageNet large scale visual recognition challenge. International Journal of Computer Vision 115, 3 (Dec 2015), 211–252. Google ScholarDigital Library

34. Scott Schaefer, Travis McPhail, and Joe Warren. 2006. Image deformation using moving least squares. ACM transactions on graphics (TOG) 25, 3 (2006), 533–540. Google ScholarDigital Library

35. Eli Shechtman, Alex Rav-Acha, Michal Irani, and Steve Seitz. 2010. Regenerative morphing. In Computer Vision and Pattern Recognition (CVPR), 2010 IEEE Conference on. IEEE, 615–622.Google ScholarCross Ref

36. Edgar Simo-Serra, Eduard Trulls, Luis Ferraz, Iasonas Kokkinos, Pascal Fua, and Francesc Moreno-Noguer. 2015. Discriminative learning of deep convolutional feature point descriptors. In Proc. ICCV. IEEE, 118–126. Google ScholarDigital Library

37. Karen Simonyan, Andrea Vedaldi, and Andrew Zisserman. 2014. Learning local feature descriptors using convex optimisation. IEEE Transactions on Pattern Analysis and Machine Intelligence 36, 8 (2014), 1573–1585. Google ScholarDigital Library

38. Karen Simonyan and Andrew Zisserman. 2014. Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556 (2014).Google Scholar

39. Itamar Talmi, Roey Mechrez, and Lihi Zelnik-Manor. 2017. Template Matching with Deformable Diversity Similarity. In Proc. CVPR. IEEE.Google ScholarCross Ref

40. Engin Tola, Vincent Lepetit, and Pascal Fua. 2010. Daisy: An efficient dense descriptor applied to wide-baseline stereo. IEEE transactions on pattern analysis and machine intelligence 32, 5 (2010), 815–830. Google ScholarDigital Library

41. Nikolai Ufer and Bjorn Ommer. 2017. Deep semantic feature matching. In Proc. CVPR. IEEE, 5929–5938.Google ScholarCross Ref

42. Zhou Wang, Alan C Bovik, Hamid R Sheikh, and Eero P Simoncelli. 2004. Image quality assessment: from error visibility to structural similarity. IEEE Transactions on Image Processing 13, 4 (2004), 600–612. Google ScholarDigital Library

43. Philippe Weinzaepfel, Jerome Revaud, Zaid Harchaoui, and Cordelia Schmid. 2013. DeepFlow: Large displacement optical flow with deep matching. In Proc. ICCV. 1385–1392. Google ScholarDigital Library

44. George Wolberg. 1998. Image morphing: a survey. The Visual Computer 14, 8 (1998), 360–372.Google ScholarCross Ref

45. Yu Xiang, Roozbeh Mottaghi, and Silvio Savarese. 2014. Beyond pascal: A benchmark for 3d object detection in the wild. In Proc. WACV. IEEE, 75–82.Google ScholarCross Ref

46. Hongsheng Yang, Wen-Yan Lin, and Jiangbo Lu. 2014. Daisy filter flow: A generalized discrete approach to dense correspondences. In Proc. CVPR. 3406–3413. Google ScholarDigital Library

47. Jason Yosinski, Jeff Clune, Anh Mai Nguyen, Thomas J. Fuchs, and Hod Lipson. 2015. Understanding Neural Networks Through Deep Visualization. CoRR abs/1506.06579 (2015). arXiv: 1506.06579 http://arxiv.org/abs/1506.06579Google Scholar

48. Matthew D. Zeiler and Rob Fergus. 2013. Visualizing and Understanding Convolutional Networks. CoRR abs/1311.2901 (2013). arXiv:1311.2901 http://arxiv.org/abs/1311.2901Google Scholar

49. Tinghui Zhou, Yong Jae Lee, Stella X Yu, and Alyosha A Efros. 2015. Flowweb: Joint image set alignment by weaving consistent, pixel-wise correspondences. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. 1191–1200.Google Scholar

50. Tinghui Zhou, Philipp Krahenbuhl, Mathieu Aubry, Qixing Huang, and Alexei A Efros. 2016. Learning dense correspondence via 3d-guided cycle consistency. In Proc. CVPR. 117–126.Google ScholarCross Ref

51. Shizhan Zhu, Cheng Li, Chen Change Loy, and Xiaoou Tang. 2015. Face alignment by coarse-to-fine shape searching. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. 4998–5006.Google Scholar