“Exploiting the limitations of spatio-temporal vision for more efficient VR rendering” by Denes, Maruszczyk and Mantiuk

Conference:

Type(s):

Entry Number: 21

Title:

- Exploiting the limitations of spatio-temporal vision for more efficient VR rendering

Presenter(s)/Author(s):

Abstract:

INTRODUCTION

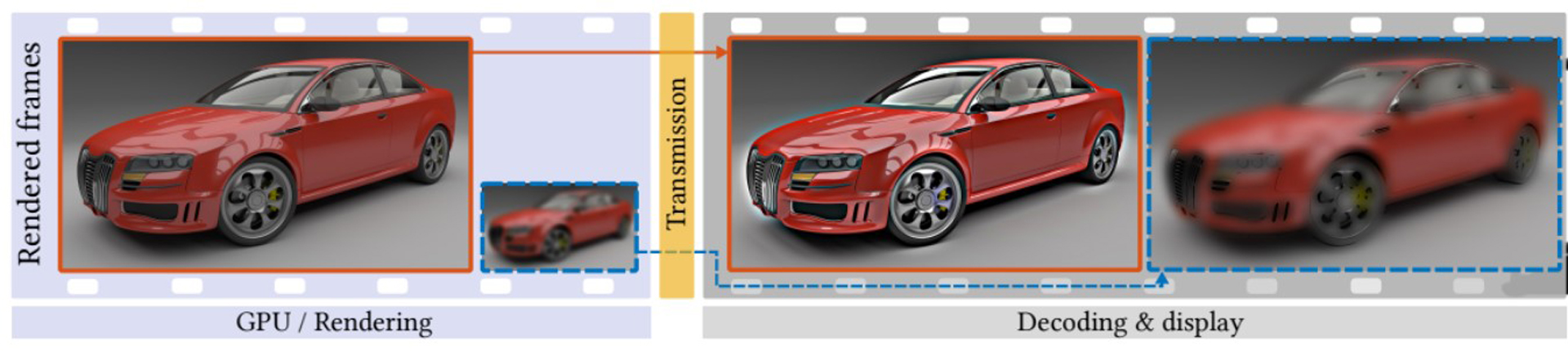

Ever-higher virtual reality (VR) display resolutions, combined with high-quality anti-aliasing, make rendering in VR prohibitively expensive. Generating these complex frames 90 times per second in a binocular setup demands substantial computational power. Wireless transmission of the frames from the GPU to the VR headset poses another challenge, requiring high-bandwidth dedicated links. Current approaches to rendering in VR with limited computational resources often rely on reprojection techniques [Beeler et al. 2016; Didyk et al. 2010; Vlachos 2016]. These techniques assume that the rendering cost can be reduced by drawing only every k-th frame and generating the in-between frames by transforming the previous frame. However, because reprojection often produces unexpected distortions and artifacts when it encounters rapid brightness changes, occlusions, and repeated patterns, these techniques should be used only as a last resort. Vlachos [2016] suggests that displaying a lower-resolution frame is often preferred.
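The frame schedule assumed by reprojection can be illustrated with a minimal sketch. It only labels which displayed frames would be fully rendered and which would be derived from the previous frame; it does not perform any warping. The function names `frame_schedule` and `rendered_fraction` are hypothetical and introduced here purely for illustration.

```python
def frame_schedule(num_frames: int, k: int) -> list[str]:
    """Label each displayed frame as fully rendered or reprojected.

    Assumption: frame 0 is rendered, and every k-th frame after it.
    All other frames are produced by transforming the previous frame.
    """
    if num_frames < 1:
        raise ValueError("num_frames must be at least 1")
    if k < 1:
        raise ValueError("k must be at least 1")
    return ["render" if i % k == 0 else "reproject" for i in range(num_frames)]


def rendered_fraction(num_frames: int, k: int) -> float:
    """Fraction of displayed frames that need a full render pass."""
    schedule = frame_schedule(num_frames, k)
    return schedule.count("render") / num_frames


if __name__ == "__main__":
    # One second of a 90 Hz display, rendering every second frame.
    print(frame_schedule(6, 2))
    print(rendered_fraction(90, 2))
```

Under this schedule, roughly 1/k of the displayed frames need a full render, which is where the cost reduction comes from. The cost of the transformation itself and the artifacts noted above are not modeled.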

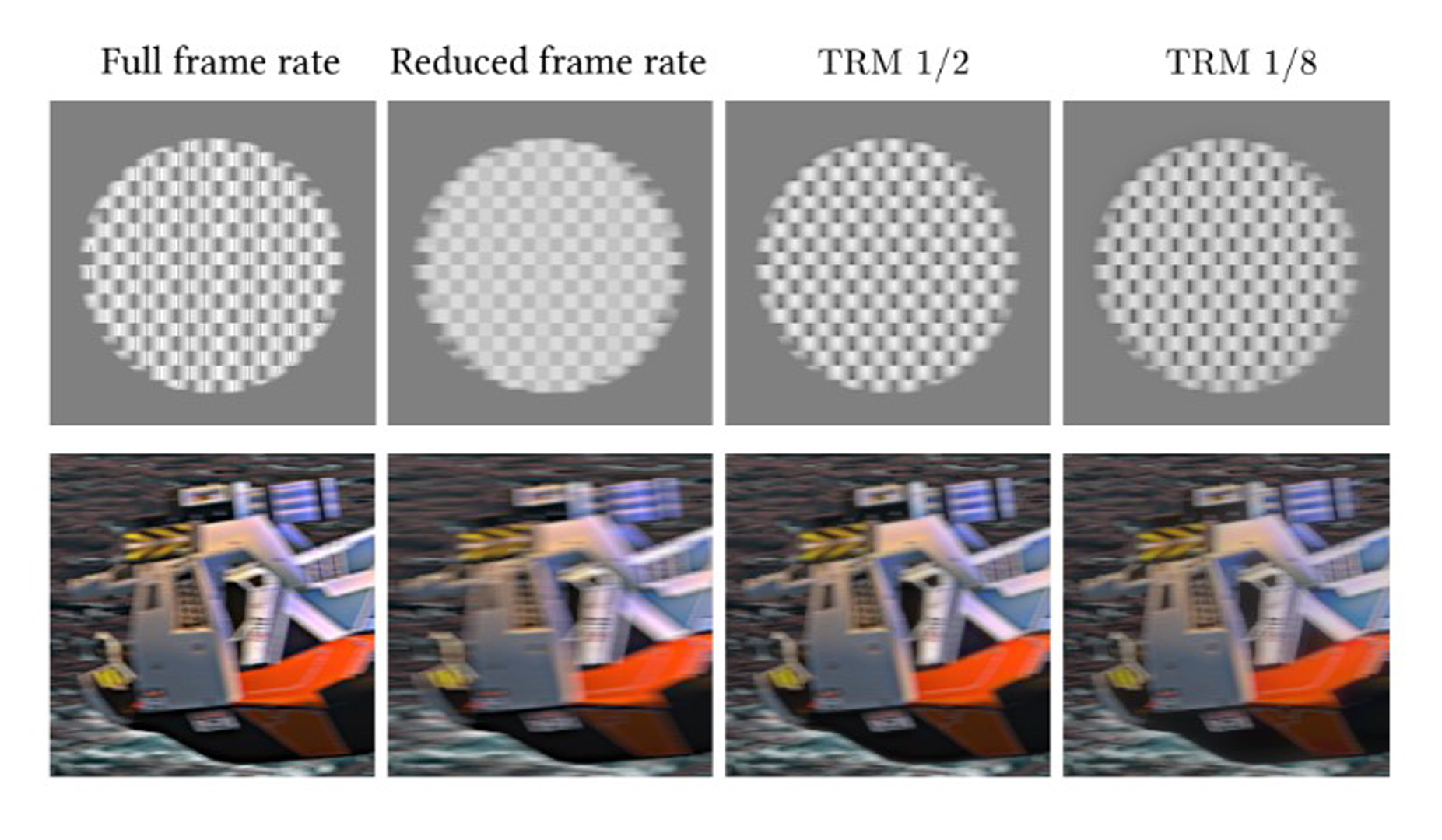

We propose a conceptually simple and robust technique that reduces both bandwidth and rendering cost for high-frame-rate displays by 25–49%, with only marginal computational overhead and a small impact on image quality. Our technique, Temporal Resolution Multiplexing (TRM), can also be applied to future high-refresh-rate desktop displays and television sets to improve motion quality.

References:

- Dean Beeler, Ed Hutchins, and Paul Pedriana. 2016. Asynchronous Spacewarp. https://developer.oculus.com/blog/asynchronous-spacewarp/. (2016). Accessed: 2018-05-02.

- Hanfeng Chen, Sung-soo Kim, Sung-hee Lee, Oh-jae Kwon, and Jun-ho Sung. 2005. Nonlinearity compensated smooth frame insertion for motion-blur reduction in LCD. In 2005 IEEE 7th Workshop on Multimedia Signal Processing. IEEE, 1–4. https://doi.org/10.1109/MMSP.2005.248646

- Piotr Didyk, Elmar Eisemann, Tobias Ritschel, Karol Myszkowski, and Hans Peter Seidel. 2010. Perceptually-motivated real-time temporal upsampling of 3D content for high-refresh-rate displays. Computer Graphics Forum 29, 2 (2010), 713–722. https://doi.org/10.1111/j.1467-8659.2009.01641.x

- Alex Vlachos. 2016. Advanced VR Rendering Performance. In Game Developers Conference (GDC).