“Code Replicability in Computer Graphics” by Bonneel, Coeurjolly, Digne and Mellado

Title:

- Code Replicability in Computer Graphics

Session/Category Title: Systems and Software

Abstract:

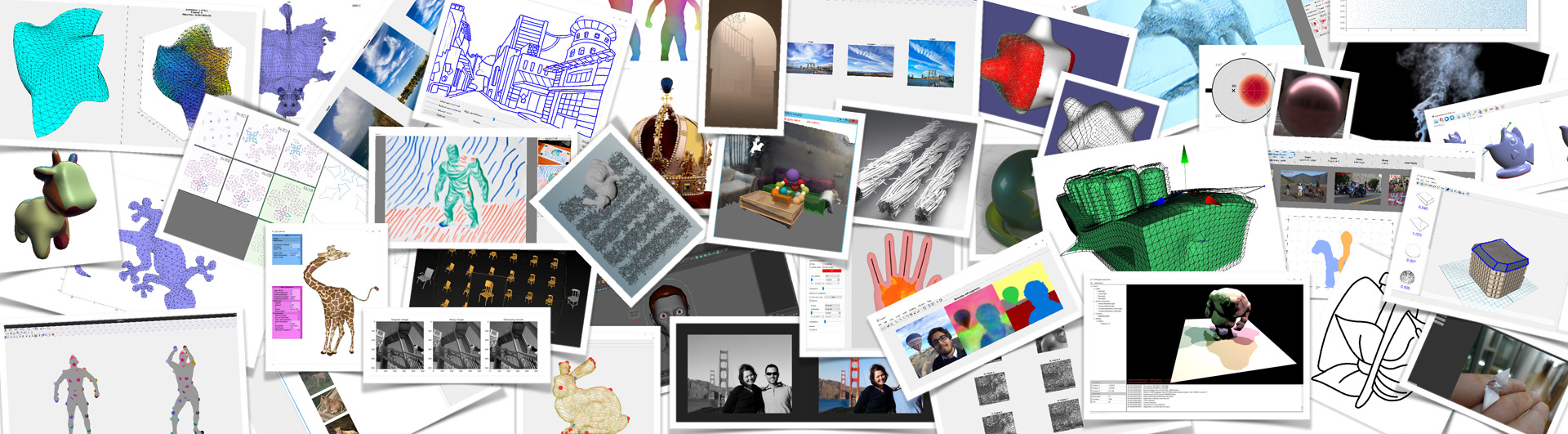

Being able to duplicate published research results is an important part of conducting research, whether to build upon these findings or to compare with them. This process is called “replicability” when using the original authors’ artifacts (e.g., code), or “reproducibility” otherwise (e.g., re-implementing algorithms). Reproducibility and replicability of research results have gained a lot of interest recently, with assessment studies being conducted in various fields, and they are often seen as a trigger for better result diffusion and transparency. In this work, we assess replicability in Computer Graphics by evaluating whether the code is available and whether it works properly. As a proxy for this field, we compiled, ran and analyzed 151 codes out of 374 papers from the 2014, 2016 and 2018 SIGGRAPH conferences. This analysis shows a clear increase in the number of papers with available and operational research code, with a dependency on the subfield, and indicates a correlation between code replicability and citation count. We further provide an interactive tool to explore our results and evaluation data.
