“Regeneration of Real Objects in the Real World” by Matsuoka, Onozawa, Sato and Nojima

Title:

- Regeneration of Real Objects in the Real World

Entry Number: 77

Description:

1 Introduction

The vision of this work is to regenerate real objects that existed at some moment in the past and/or at some remote location, as if they had been transferred to the present across space and time. The objects could be, for example, museum pieces or items in stores. To this end, we have developed a quick, fully automated system that captures three-dimensional images of real objects. This system brings us close to our goal of “regeneration of real objects in the real world.”

2 Technological Innovations

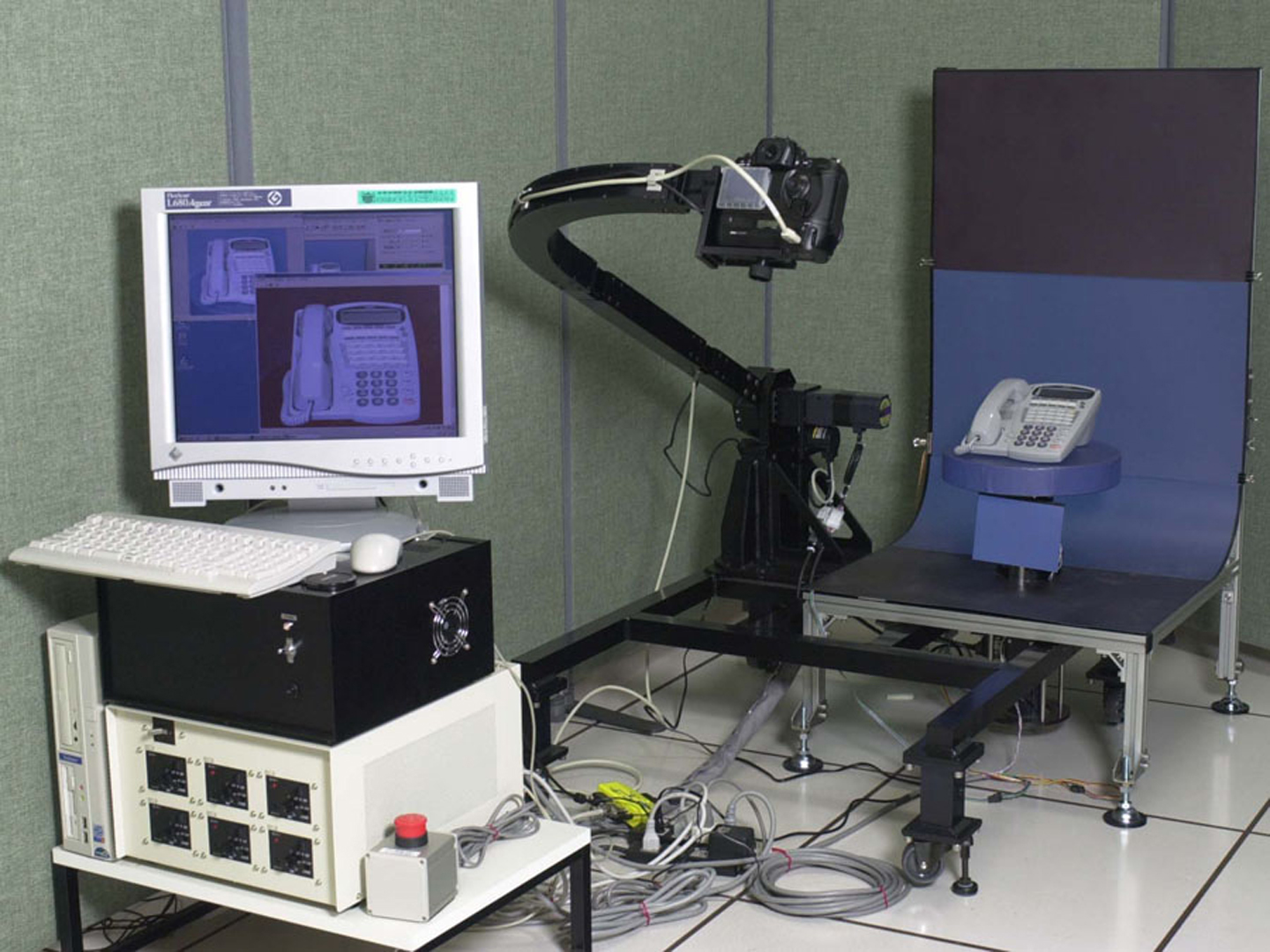

The system we have developed generates, in real time, a scene in which transferred real objects exist naturally in the present real space. It consists of three functions: capturing, registration and sensing, and regeneration.

The real objects are first captured with our original 3D capturing system [Fig. 1]. A special feature of this system is its ability to capture 3D CG data from a wide range of real objects, such as plastic and porcelain items with strong inter-reflection, and translucent glass and acrylic resin objects.

Before the captured 3D CG data are regenerated as virtual objects in real space, a device called the sensor cube measures environmental information at the location where the virtual objects will appear. We have developed two kinds of sensor cubes: a geometry sensor and a light sensor [Fig. 2].

A viewer [Fig. 3] then regenerates the real objects in the real world by rendering them in the space occupied by the sensor cube, using ARToolKit [Schmalstieg et al. 2001] provided by the University of Washington. Because the views of the virtual objects are generated from the sensor information, the virtual objects are visually harmonized with the real world.
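The text does not specify how the light-sensor cube's measurements enter the rendering. As one illustration of how sensed lighting could drive shading, the following minimal sketch assumes the sensor reduces the environment to a single dominant directional light plus a uniform ambient term, and applies Lambertian (diffuse) shading. The `LightEstimate` type, its fields, and `shade_lambert` are hypothetical names, not part of the authors' system or of ARToolKit.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class LightEstimate:
    """Hypothetical light-sensor output: one directional light plus ambient.

    This lighting model is an assumption for illustration only.
    """
    direction: Vec3   # unit-free vector pointing from the surface toward the light
    intensity: float  # strength of the directional light
    ambient: float    # uniform ambient light level


def normalize(v: Vec3) -> Vec3:
    """Return v scaled to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    if n == 0:
        raise ValueError("zero-length vector")
    return (v[0] / n, v[1] / n, v[2] / n)


def shade_lambert(albedo: Vec3, normal: Vec3, light: LightEstimate) -> Vec3:
    """Diffuse color of one surface point under the sensed light.

    color = albedo * (ambient + intensity * max(0, n . l))
    """
    n = normalize(normal)
    l = normalize(light.direction)
    cos_theta = max(0.0, n[0] * l[0] + n[1] * l[1] + n[2] * l[2])
    scale = light.ambient + light.intensity * cos_theta
    return (albedo[0] * scale, albedo[1] * scale, albedo[2] * scale)
```

With an overhead light (`direction=(0, 0, 1)`, `intensity=0.8`, `ambient=0.2`), an upward-facing point keeps its full albedo, while a downward-facing point receives only the ambient term. In a real viewer this per-point computation would typically run on the GPU; the point of the sketch is only that the light term comes from the sensed environment rather than from a fixed, hand-placed light.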

3 Demonstration

The installation we hope to present at SIGGRAPH 2002 takes “Noh” as its motif. “Noh” is a traditional form of Japanese drama in which the actors wear a variety of mysterious masks. It is well known that a single Noh mask can express different emotions depending on the viewing angle and the lighting. We captured Noh masks from various angles and under various lighting conditions at successive stages of the carving process. The captured masks will be regenerated in real time in front of the SIGGRAPH audience wherever the sensor cube is placed, and will look as natural as possible because the system senses the lighting of the surrounding environment. In the exhibition, the carving process unfolds as the distance between the audience and the cube changes: a completed mask gradually reverts to a chunk of wood as the distance increases, and vice versa [Fig. 4]. We also hope to demonstrate how our system captures real objects and how the captured data are displayed in other spaces with our viewer.
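The distance-driven carving effect can be sketched as a mapping from viewer-to-cube distance to an index into the ordered sequence of captured carving stages. This minimal sketch assumes the distance is taken as the length of the translation part of the marker pose reported by the tracker, and that stages are ordered from the finished mask (index 0) to the uncarved block (last index). The function names and the `near_m`/`far_m` thresholds are hypothetical, not values from the installation.

```python
import math
from typing import Sequence


def distance_from_translation(t: Sequence[float]) -> float:
    """Camera-to-cube distance from a pose translation (x, y, z).

    Assumes the tracker reports the cube's position in camera coordinates.
    """
    return math.hypot(*t)


def carving_stage(distance_m: float, num_stages: int,
                  near_m: float = 0.5, far_m: float = 3.0) -> int:
    """Map viewer distance to a carving-stage index.

    Stage 0 is the finished mask (viewer close); stage num_stages - 1 is the
    uncarved wood (viewer far). Distances are clamped to [near_m, far_m] and
    the range is split into num_stages equal bands.
    """
    if num_stages < 1:
        raise ValueError("need at least one stage")
    if far_m <= near_m:
        raise ValueError("far_m must exceed near_m")
    t = (distance_m - near_m) / (far_m - near_m)
    t = min(max(t, 0.0), 1.0)  # clamp to the valid range
    # t == 1.0 would give num_stages, so cap at the last valid index.
    return min(int(t * num_stages), num_stages - 1)
```

Clamping keeps viewers closer than `near_m` on the finished mask and viewers beyond `far_m` on the raw block. A real installation might also smooth the distance over time or blend adjacent stages to avoid visible jumps.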

4 Conclusions

“Regeneration of real objects in the real world” is our ultimate goal. We want to “transfer” real objects into our presence across space and time and to interact with them in real time. The proposed system can make objects from different places and different times appear ‘real’ and present in the real space. It combines highly realistic three-dimensional CG, the reflection of environmental information from the actual space, and advanced Augmented Reality technology. This system, which can be applied and extended in many ways, will open a new dimension to our senses.

References

SCHMALSTIEG, D., BILLINGHURST, M., AZUMA, R., HOLLERER, T., KATO, H., AND POUPYREV, I. 2001. “Augmented Reality: The Interface is Everywhere.” Course Notes of SIGGRAPH 2001.

Additional Images:

- 2002 Etech Matsuoka: Regeneration of Real Objects in the Real World
