“Sketch-based Fluid Video Generation Using Motion-Guided Diffusion Models in Still Landscape Images” by Jin and Xie

Conference:

Type(s):

Title:

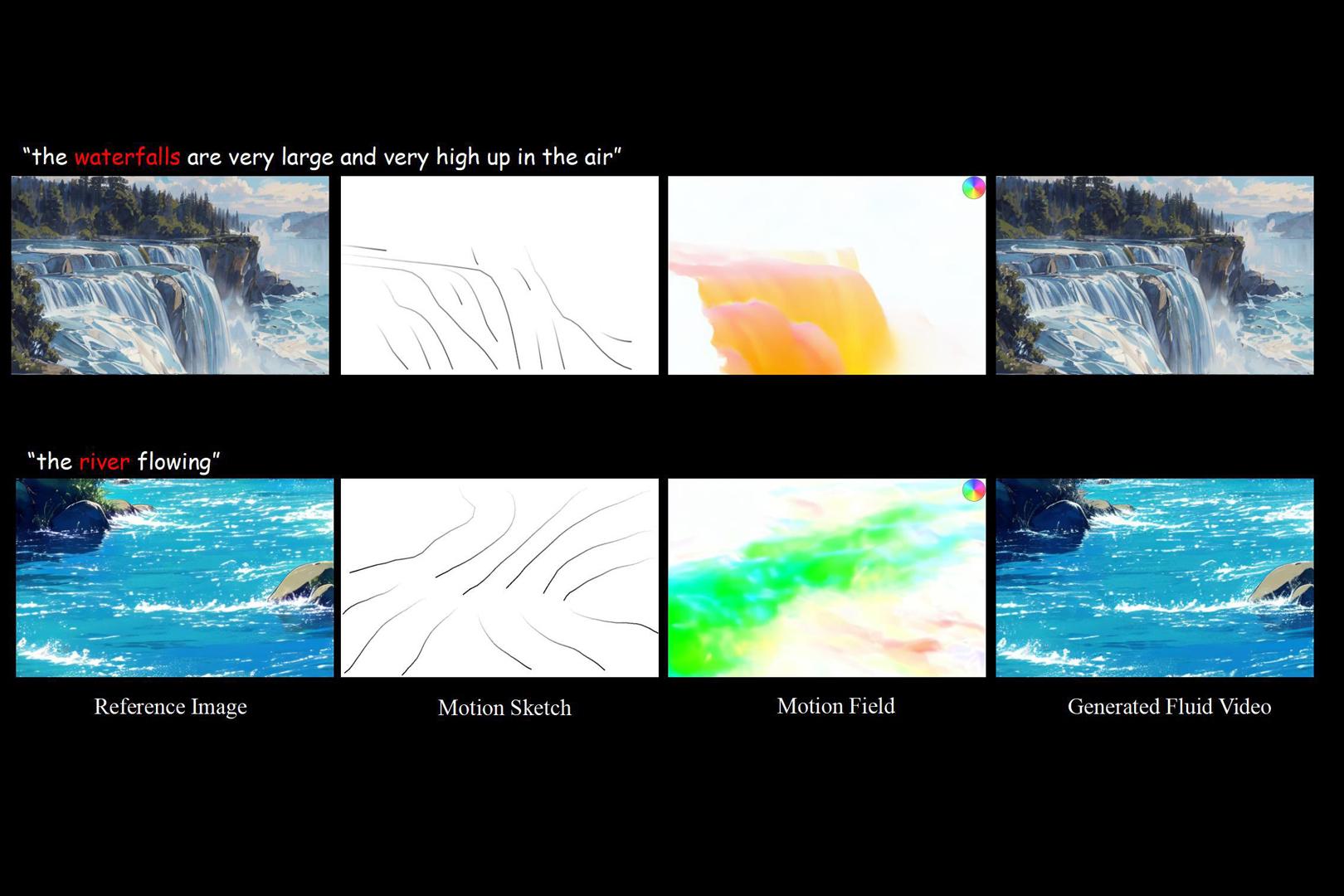

- Sketch-based Fluid Video Generation Using Motion-Guided Diffusion Models in Still Landscape Images

Session/Category Title:

- Images, Video & Computer Vision

Presenter(s)/Author(s):

Abstract:

We propose a fine-tuned conditional latent diffusion model that generates motion fields from user-provided sketches. These motion fields are then integrated into a latent video diffusion model via a motion adapter to precisely control the fluid movement.

References:

[1] Andreas Blattmann, Tim Dockhorn, Sumith Kulal, Daniel Mendelevitch, Maciej Kilian, Dominik Lorenz, Yam Levi, Zion English, Vikram Voleti, Adam Letts, et al. 2023. Stable video diffusion: Scaling latent video diffusion models to large datasets. arXiv preprint arXiv:2311.15127 (2023).

[2] Aleksander Holynski, Brian L. Curless, Steven M. Seitz, and Richard Szeliski. 2021. Animating pictures with Eulerian motion fields. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 5810–5819.

[3] Hao Jin, Hengyuan Chang, Xiaoxuan Xie, Zhengyang Wang, Xusheng Du, Shaojun Hu, and Haoran Xie. 2024. Sketch-Guided Motion Diffusion for Stylized Cinemagraph Synthesis. arXiv preprint arXiv:2412.00638 (2024).

[4] Zhouxia Wang, Ziyang Yuan, Xintao Wang, Yaowei Li, Tianshui Chen, Menghan Xia, Ping Luo, and Ying Shan. 2024. MotionCtrl: A unified and flexible motion controller for video generation. In ACM SIGGRAPH 2024 Conference Papers. 1–11.