“Robust Motion In-betweening” by Harvey, Yurick, Nowrouzezahrai and Pal

Title:

- Robust Motion In-betweening

Session/Category Title:

- Motion Matching and Retargeting

Presenter(s)/Author(s):

- Harvey, Yurick, Nowrouzezahrai and Pal

Abstract:

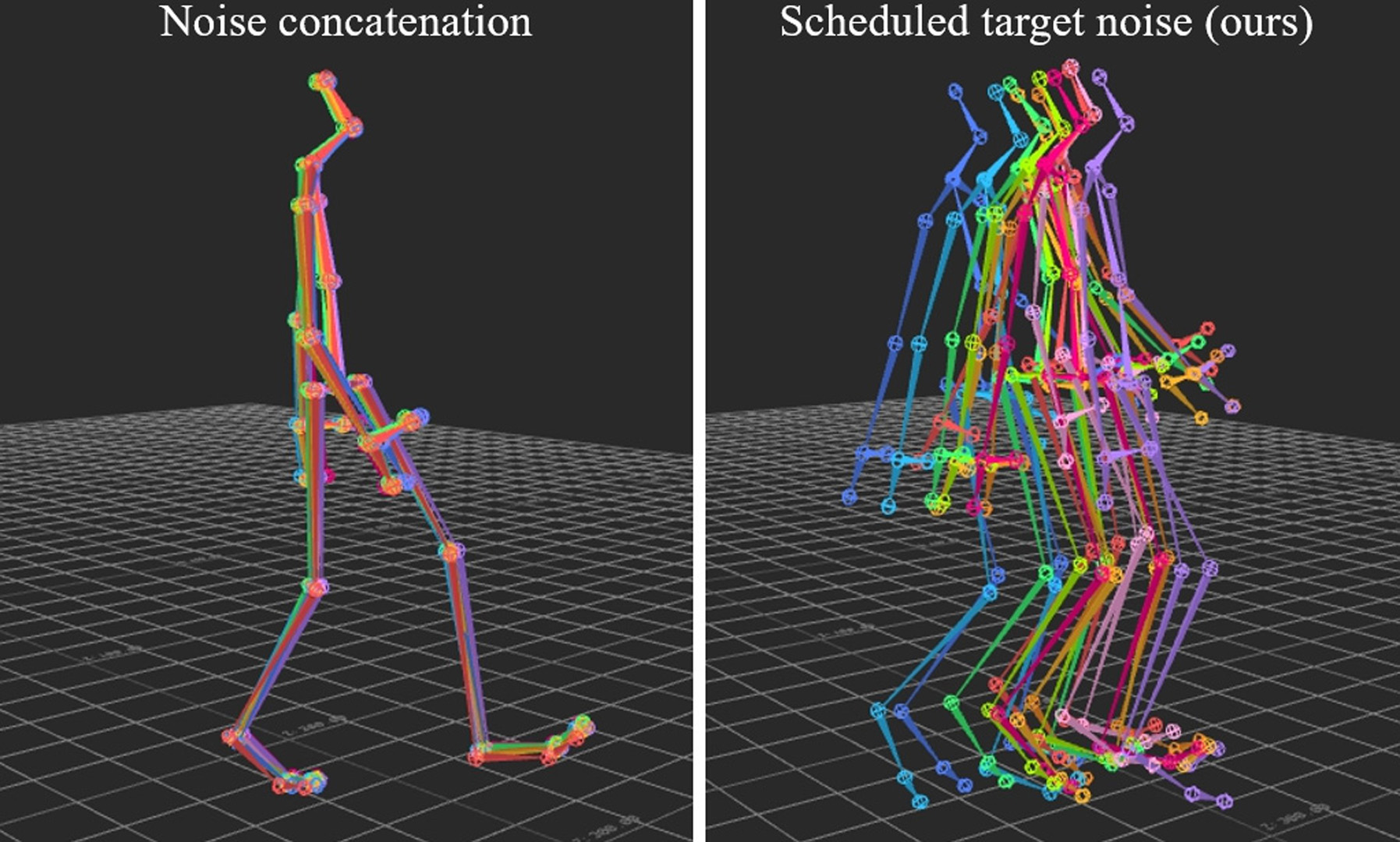

In this work we present a novel, robust transition generation technique that can serve as a new tool for 3D animators, based on adversarial recurrent neural networks. The system synthesises high-quality motions that use temporally-sparse keyframes as animation constraints. This is reminiscent of the job of in-betweening in traditional animation pipelines, in which an animator draws motion frames between provided keyframes. We first show that a state-of-the-art motion prediction model cannot be easily converted into a robust transition generator when only adding conditioning information about future keyframes. To solve this problem, we then propose two novel additive embedding modifiers that are applied at each timestep to latent representations encoded inside the network’s architecture. One modifier is a time-to-arrival embedding that allows variations of the transition length with a single model. The other is a scheduled target noise vector that allows the system to be robust to target distortions and to sample different transitions given fixed keyframes. To qualitatively evaluate our method, we present a custom MotionBuilder plugin that uses our trained model to perform in-betweening in production scenarios. To quantitatively evaluate performance on transitions and generalizations to longer time horizons, we present well-defined in-betweening benchmarks on a subset of the widely used Human3.6M dataset and on LaFAN1, a novel high quality motion capture dataset that is more appropriate for transition generation. We are releasing this new dataset along with this work, with accompanying code for reproducing our baseline results.
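
The two additive modifiers described in the abstract can be illustrated with a minimal sketch. Everything specific here is an assumption made for illustration and is not stated in the abstract: the sinusoidal form of the time-to-arrival embedding, the linear noise schedule and its thresholds (`t_zero`, `t_full`), the noise standard deviation, and the function names `time_to_arrival_embedding`, `noise_scale` and `apply_modifiers`. Latent vectors are plain Python lists standing in for the network's encoded representations.

```python
import math
import random


def time_to_arrival_embedding(tta, dim, base=10000.0):
    """Embed the number of frames left before the target keyframe.

    Assumption: a sinusoidal, positional-encoding-style embedding.
    """
    emb = []
    for i in range(0, dim, 2):
        freq = 1.0 / (base ** (i / dim))
        emb.append(math.sin(tta * freq))
        emb.append(math.cos(tta * freq))
    return emb[:dim]  # trim the extra value when dim is odd


def noise_scale(tta, t_zero=5, t_full=30):
    """Hypothetical linear schedule: no noise close to the target,
    full noise far from it."""
    if tta >= t_full:
        return 1.0
    if tta <= t_zero:
        return 0.0
    return (tta - t_zero) / (t_full - t_zero)


def apply_modifiers(state, target, tta, noise):
    """Add both modifiers to the latent vectors for one timestep."""
    emb = time_to_arrival_embedding(tta, len(state))
    lam = noise_scale(tta)
    new_state = [s + e for s, e in zip(state, emb)]
    new_target = [t + e + lam * n for t, e, n in zip(target, emb, noise)]
    return new_state, new_target


# Usage: draw one noise vector per transition, then walk toward the keyframe.
rng = random.Random(0)
dim = 8
state = [0.0] * dim
target = [1.0] * dim
noise = [rng.gauss(0.0, 0.5) for _ in range(dim)]  # std 0.5 is an assumption
for tta in range(40, 0, -1):
    mod_state, mod_target = apply_modifiers(state, target, tta, noise)
```

Because the time-to-arrival value is an input rather than a fixed architectural constant, the same sketch handles transitions of any length, and drawing a different `noise` vector for the same keyframes perturbs the target far from arrival while leaving it untouched in the final frames.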