“G-FED: G-Buffer Guided Frame Extrapolation in Video Diffusion Models” by Pena, Kumar, Andrysiak and Harihara

Conference:

Type(s):

Title:

- G-FED: G-Buffer Guided Frame Extrapolation in Video Diffusion Models

Session/Category Title:

- Images, Video & Computer Vision

Presenter(s)/Author(s):

Abstract:

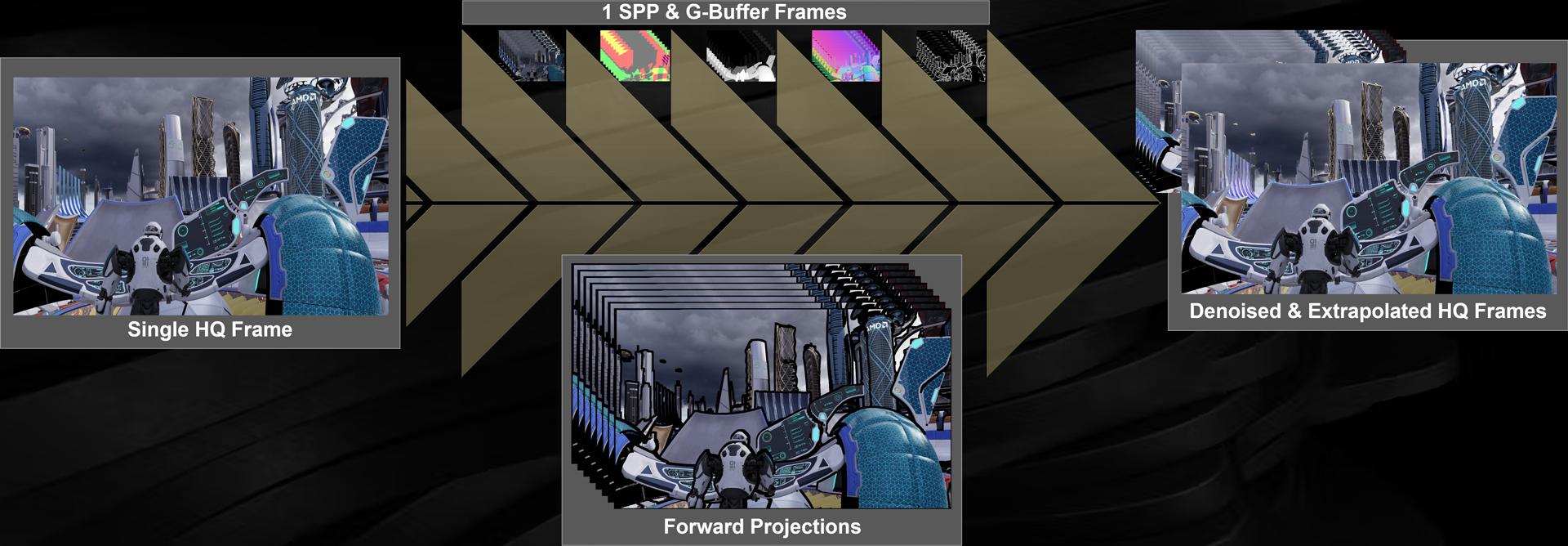

To generate previews with near-final render quality in VFX and enable faster iteration, we propose G-FED, G-Buffer Guided Frame Extrapolation in Video Diffusion Models. G-FED denoises 1spp frames, guided by G-buffer data, to infill masked forward projections and generate high-quality images that are spatially and temporally coherent.

References:

[1] Patrick Esser, Johnathan Chiu, Parmida Atighehchian, Jonathan Granskog, and Anastasis Germanidis. 2023. Structure and Content-Guided Video Synthesis with Diffusion Models. arxiv:https://arXiv.org/abs/2302.03011 [cs.CV] https://arxiv.org/abs/2302.03011

[2] Li Hu, Xin Gao, Peng Zhang, Ke Sun, Bang Zhang, and Liefeng Bo. 2024. Animate Anyone: Consistent and Controllable Image-to-Video Synthesis for Character Animation. arxiv:https://arXiv.org/abs/2311.17117 [cs.CV] https://arxiv.org/abs/2311.17117

[3] Norman Müller, Katja Schwarz, Barbara Roessle, Lorenzo Porzi, Samuel Rota Bulò, Matthias Nießner, and Peter Kontschieder. 2024. MultiDiff: Consistent Novel View Synthesis from a Single Image. arxiv:https://arXiv.org/abs/2406.18524 [cs.CV] https://arxiv.org/abs/2406.18524

[4] Wenqi Ouyang, Yi Dong, Lei Yang, Jianlou Si, and Xingang Pan. 2024. I2VEdit: First-Frame-Guided Video Editing via Image-to-Video Diffusion Models. arxiv:https://arXiv.org/abs/2405.16537 [cs.CV] https://arxiv.org/abs/2405.16537

[5] Bohao Peng, Jian Wang, Yuechen Zhang, Wenbo Li, Ming-Chang Yang, and Jiaya Jia. 2025. ControlNeXt: Powerful and Efficient Control for Image and Video Generation. arxiv:https://arXiv.org/abs/2408.06070 [cs.CV] https://arxiv.org/abs/2408.06070

[6] Dustin Podell, Zion English, Kyle Lacey, Andreas Blattmann, Tim Dockhorn, Jonas Müller, Joe Penna, and Robin Rombach. 2023. SDXL: Improving Latent Diffusion Models for High-Resolution Image Synthesis. arxiv:https://arXiv.org/abs/2307.01952 [cs.CV] https://arxiv.org/abs/2307.01952

[7] Vaibhav Vavilala, Rahul Vasanth, and David Forsyth. 2024. Denoising Monte Carlo Renders with Diffusion Models. arxiv:https://arXiv.org/abs/2404.00491 [cs.CV] https://arxiv.org/abs/2404.00491

[8] Jinbo Xing, Menghan Xia, Yong Zhang, Haoxin Chen, Wangbo Yu, Hanyuan Liu, Xintao Wang, Tien-Tsin Wong, and Ying Shan. 2023. DynamiCrafter: Animating Open-domain Images with Video Diffusion Priors. arxiv:https://arXiv.org/abs/2310.12190 [cs.CV] https://arxiv.org/abs/2310.12190

[9] Zheng Zeng, Valentin Deschaintre, Iliyan Georgiev, Yannick Hold-Geoffroy, Yiwei Hu, Fujun Luan, Ling-Qi Yan, and Miloš Hašan. 2024. RGB<->X: Image decomposition and synthesis using material- and lighting-aware diffusion models. In Special Interest Group on Computer Graphics and Interactive Techniques Conference Conference Papers(SIGGRAPH ’24). ACM, 1–11.