“AniDepth : Anime In-between Diffusion using Depth-guided Warped Line-art” by Koga, Kubo, Shinagawa, Fujimura, Kitano, et al. …

Conference:

Type(s):

Title:

- AniDepth : Anime In-between Diffusion using Depth-guided Warped Line-art

Session/Category Title:

- ML in Production

Presenter(s)/Author(s):

- Sosui Koga

- Hiroyuki Kubo

- Seitaro Shinagawa

- Yuki Fujimura

- Kazuya Kitano

- Akinobu Maejima

- Takuya Funatomi

- Yasuhiro Mukaigawa

Moderator(s):

Abstract:

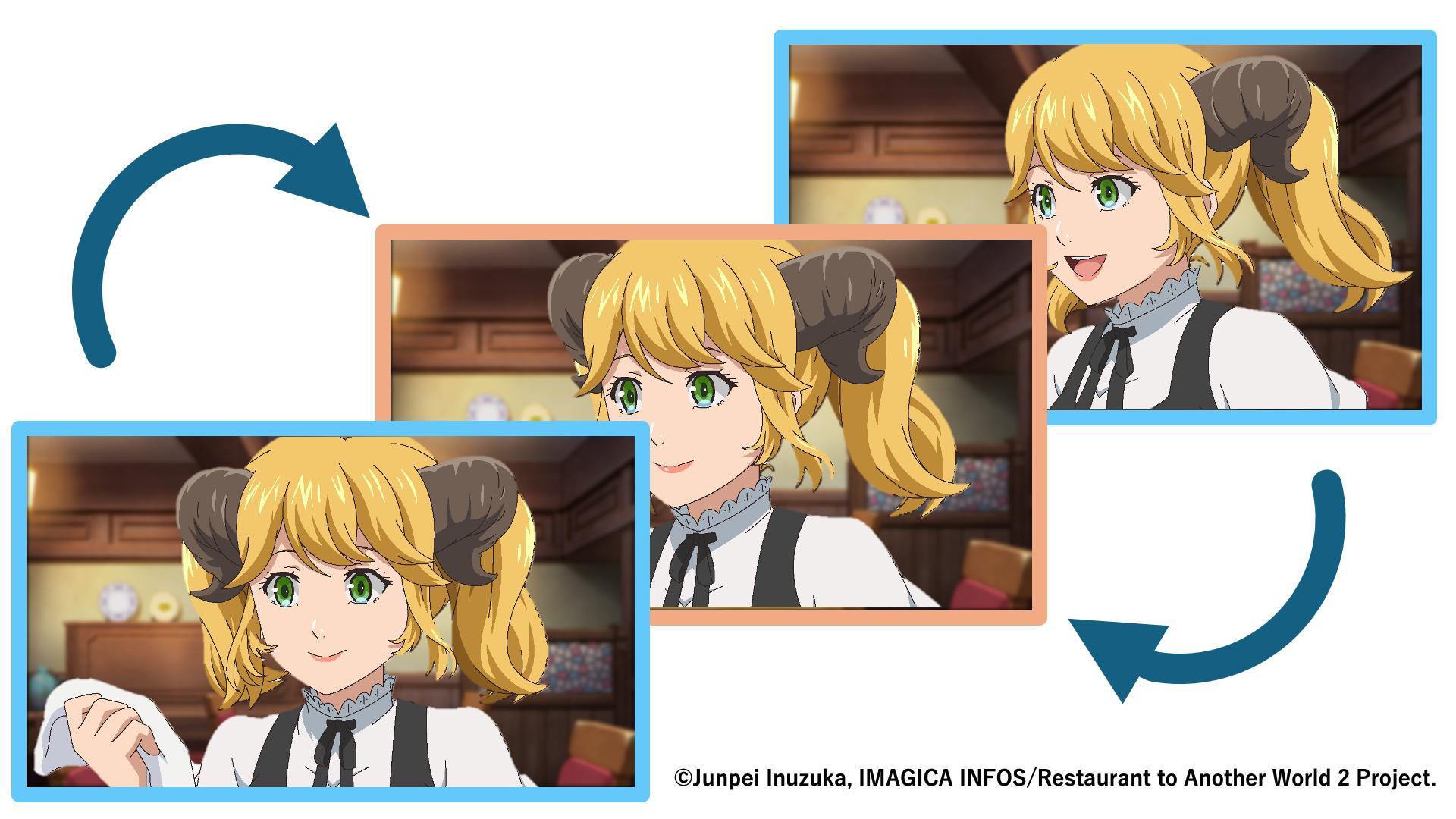

We propose AniDepth, a novel anime in-betweening method that uses a video diffusion model. Even when such a model is fine-tuned on an anime dataset, it still suffers from the domain gap between anime and natural images because of the strong priors of the base model. We therefore convert anime illustrations into depth maps, a modality that bridges the gap between the anime and realistic domains, so that the model’s prior knowledge can be fully exploited. In addition, by using line art as a guide during in-betweening, we improve the fidelity of line-art details in the generated frames. Our approach first interpolates the converted depth maps, then warps the line art based on those depth maps, and finally interpolates the colored images conditioned on the warped line art, preserving complex line art and flat coloring even under large movements. Experiments show that our method outperforms the existing diffusion method in quantitative evaluation, improves temporal smoothness, and reduces line-art distortion. Moreover, because it requires no extra training, it can be easily integrated into current production pipelines at low operational cost.
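The three stages named in the abstract can be read as a simple data flow. The sketch below is only an illustration of that ordering under stated assumptions, not the authors' implementation: the function `inbetween`, its parameter names, and the four callables are hypothetical, key-frame line art is assumed to be available as an input, and the toy stand-ins at the bottom use plain linear blending on scalar "frames" in place of any depth estimator, tracker, or diffusion model.

```python
from typing import Any, Callable, List


def inbetween(
    start_color: Any,
    end_color: Any,
    start_line: Any,
    end_line: Any,
    n_mid: int,
    estimate_depth: Callable[[Any], Any],
    interpolate_depth: Callable[[Any, Any, int], List[Any]],
    warp_line_art: Callable[[Any, Any, List[Any]], List[Any]],
    interpolate_color: Callable[[Any, Any, List[Any]], List[Any]],
) -> List[Any]:
    """Hypothetical orchestration of the three stages described in the abstract."""
    # Stage 1: convert both key frames to depth and interpolate in depth space.
    depth_frames = interpolate_depth(
        estimate_depth(start_color), estimate_depth(end_color), n_mid
    )
    # Stage 2: warp the key-frame line art, guided by the interpolated depth.
    line_guides = warp_line_art(start_line, end_line, depth_frames)
    # Stage 3: interpolate colored frames, conditioned on the warped line art.
    return interpolate_color(start_color, end_color, line_guides)


def lerp_sequence(a: float, b: float, n_mid: int) -> List[float]:
    """Toy stand-in: n_mid evenly spaced values strictly between a and b."""
    return [a + (b - a) * k / (n_mid + 1) for k in range(1, n_mid + 1)]


# Toy run with scalar "frames"; every callable here is a placeholder.
frames = inbetween(
    start_color=0.0,
    end_color=1.0,
    start_line="line_start",
    end_line="line_end",
    n_mid=3,
    estimate_depth=lambda frame: frame * 10.0,
    interpolate_depth=lerp_sequence,
    warp_line_art=lambda s, e, depths: [s for _ in depths],
    interpolate_color=lambda a, b, guides: lerp_sequence(a, b, len(guides)),
)
print(frames)
```

Passing the stages in as callables keeps the sketch independent of any particular model, so each placeholder could be swapped for a real component without changing the control flow.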

References:

[1] Guillaume Le Moing, Jean Ponce, and Cordelia Schmid. 2024. Dense Optical Tracking: Connecting the Dots. In 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). IEEE/CVF, 19187–19197.

[2] Jinbo Xing, Hanyuan Liu, Menghan Xia, Yong Zhang, Xintao Wang, Ying Shan, and Tien-Tsin Wong. 2024. ToonCrafter: Generative Cartoon Interpolation. ACM Trans. Graph. 43, 6, Article 245 (2024), 11 pages.

[3] Lihe Yang, Bingyi Kang, Zilong Huang, Zhen Zhao, Xiaogang Xu, Jiashi Feng, and Hengshuang Zhao. 2024. Depth Anything V2. In Advances in Neural Information Processing Systems 37 (2024), 21875–21911.