“A Mobile Scanning Solution to Reconstruct Strand-Based Hairstyles” by Grassal, Hormann, Hamlaoui, Leistner and Ardizzone

Title:

- A Mobile Scanning Solution to Reconstruct Strand-Based Hairstyles

Session/Category Title:

- Real-Time and Mobile Techniques

Abstract:

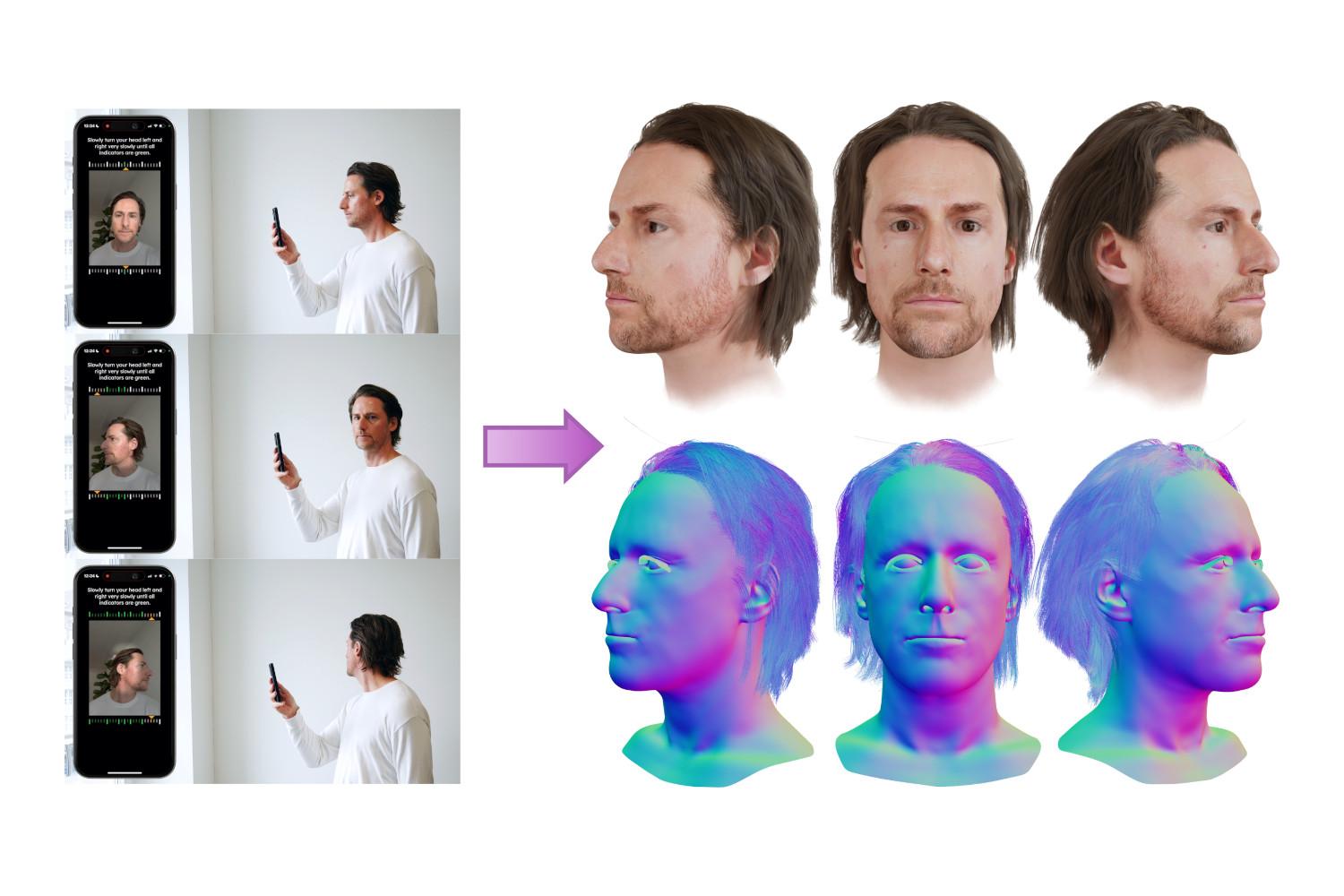

Representing hairstyles as 3D strands is the most realistic and flexible hair modeling technique in computer graphics. However, reconstructing strands from 3D head scans has proven extremely challenging in practice: even advanced multi-camera studios capture only the surface geometry of a hairstyle, so creating the final groom still requires artist work. The problem has gained traction in recent research, which has shown that individual strands can be derived from large multi-camera capture datasets and can even be estimated from single-view videos and photos. Still, none of these methods has proven suitable for end-user scanning in terms of execution time, accuracy, accessibility, and resulting asset quality. We address these issues with a fully automated mobile capture solution that runs on end-user phones and reconstructs strand-based hairstyles as part of a head scan. Hairstyle reconstruction takes two minutes and provides the user with a clean hairstyle asset usable in any 3D editor, game engine, or other downstream task. Our reconstruction approach uses deep neural networks to regress an initial hairstyle and density from a learned prior distribution. A subsequent fitting step ensures that the reconstructed strands match the recorded images. The solution is built on a strong data foundation comprising hairstyles crafted by artists, hairstyles reconstructed through fitting, and hairstyles generated from very diverse but controlled augmentations. As the first to offer strand-based scanning, we are proud to present our system to a broader audience. In our talk, we will give insight into our dataset and method and showcase the scanning quality and downstream editing. We believe that our technology will not only inspire but also support future research in the field, as it makes it easy to acquire new hairstyle data, which has always been a limiting factor.
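The abstract does not specify how the fitting step is formulated. Purely as an illustration of the general idea of refining a regressed strand so that it agrees with image evidence, the sketch below fits a single strand, stored as a polyline of 3D points, to hypothetical 2D observations by gradient descent. It is not the authors' method. It assumes an orthographic camera, known per-vertex 2D target points, a second-difference smoothness prior, and a root vertex that stays anchored to the scalp; all function names (`fit_strand`, `smoothness_grad`, `reprojection_error`) are hypothetical. A real system would more likely compare against richer image cues, such as hair masks or 2D orientation fields, than against known point correspondences.

```python
import numpy as np


def project_orthographic(points):
    """Hypothetical camera model: project 3D strand points to 2D by dropping z."""
    return points[:, :2]


def smoothness_grad(points):
    """Gradient of sum_i ||p[i-1] - 2 p[i] + p[i+1]||^2 with respect to every point."""
    grad = np.zeros_like(points)
    d2 = points[:-2] - 2.0 * points[1:-1] + points[2:]  # second differences, shape (N-2, 3)
    grad[:-2] += 2.0 * d2
    grad[1:-1] += -4.0 * d2
    grad[2:] += 2.0 * d2
    return grad


def fit_strand(init, target_2d, w_smooth=0.1, lr=0.1, steps=200):
    """Refine an initial 3D strand so that its projection matches 2D target points.

    Minimizes sum ||proj(p_i) - t_i||^2 + w_smooth * sum ||second difference||^2
    by plain gradient descent. The root (first point) stays fixed, mimicking a
    strand attached to the scalp.
    """
    pts = init.astype(float).copy()
    for _ in range(steps):
        grad = np.zeros_like(pts)
        grad[:, :2] = 2.0 * (project_orthographic(pts) - target_2d)  # data term
        grad += w_smooth * smoothness_grad(pts)  # smoothness prior
        grad[0] = 0.0  # keep the root anchored
        pts -= lr * grad
    return pts


def reprojection_error(pts, target_2d):
    """Mean squared 2D distance between projected strand points and targets."""
    return float(np.mean(np.sum((project_orthographic(pts) - target_2d) ** 2, axis=1)))


# Usage: a straight "regressed" strand hanging down, fitted to a hypothetical wavy 2D curve.
t = np.linspace(0.0, 1.0, 16)
init = np.stack([np.zeros_like(t), -t, np.zeros_like(t)], axis=1)
target = np.stack([0.2 * np.sin(3.0 * t), -t], axis=1)
fitted = fit_strand(init, target)
print(reprojection_error(init, target), reprojection_error(fitted, target))
```

The smoothness weight trades image agreement against strand regularity; with `w_smooth=0.1` and `lr=0.1` the step size stays small enough for this quadratic objective to decrease monotonically.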
