“PAAP: Performer-Aware Automatic Panning System” by Lee and Kim

Title:

- PAAP: Performer-Aware Automatic Panning System

Session/Category Title:

- Interactive Techniques

Abstract:

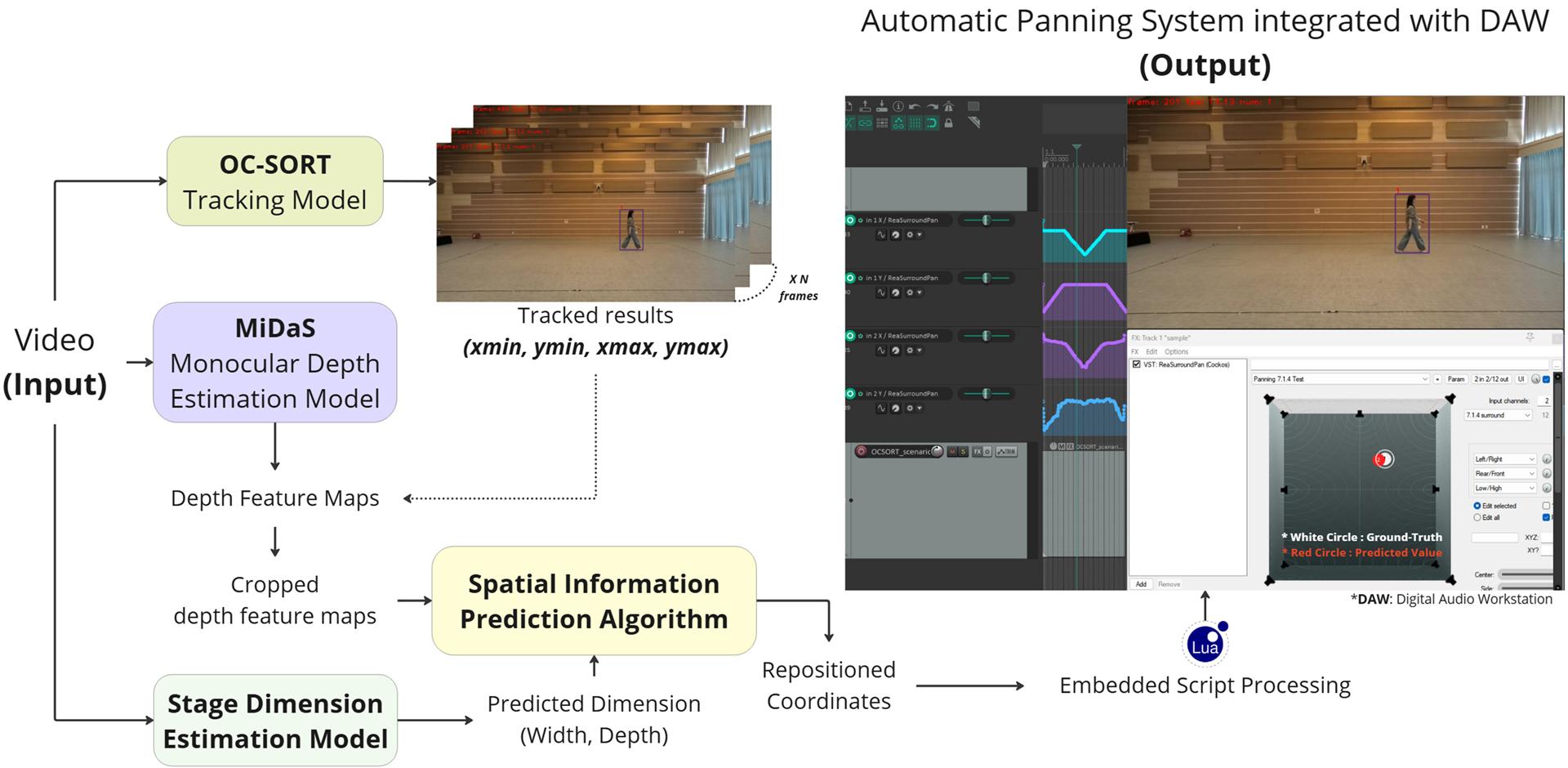

This paper proposes PAAP (Performer-Aware Automatic Panning System), the first system that automatically tracks one or more performers and generates spatial audio panning data integrated with a Digital Audio Workstation (DAW). PAAP processes this data in real time via Open Sound Control (OSC), demonstrating its readiness for deployment in professional music production.
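
To make the tracking-to-panning data flow concrete, the following is a minimal sketch of how a tracked performer position could be turned into a panning value and packed as an OSC message. It is not the authors' implementation: the linear pixel-to-azimuth mapping, the ±45° range, the sign convention, the OSC address `/paap/performer/1/azimuth`, and the host and port are all assumptions made for illustration. Only the message layout (null-padded strings, a `,f` type tag, a big-endian 32-bit float) follows the OSC 1.0 encoding.

```python
import socket
import struct


def osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes (OSC 1.0 string rule)."""
    padded_len = (len(data) // 4 + 1) * 4
    return data.ljust(padded_len, b"\x00")


def encode_osc_float(address: str, value: float) -> bytes:
    """Build an OSC message carrying a single float32 argument."""
    return osc_pad(address.encode("ascii")) + osc_pad(b",f") + struct.pack(">f", value)


def bbox_to_azimuth(x_center: float, frame_width: float,
                    max_azimuth_deg: float = 45.0) -> float:
    """Map a bounding-box centre (pixels) to an azimuth in degrees.

    Assumed convention: frame centre -> 0, left edge -> -max, right edge -> +max.
    """
    normalized = (x_center / frame_width) * 2.0 - 1.0  # -1 (left) .. +1 (right)
    normalized = max(-1.0, min(1.0, normalized))       # clamp out-of-frame values
    return normalized * max_azimuth_deg


def send_azimuth(azimuth_deg: float, host: str = "127.0.0.1", port: int = 9000) -> None:
    """Send the azimuth over UDP; address, host, and port are hypothetical."""
    message = encode_osc_float("/paap/performer/1/azimuth", azimuth_deg)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(message, (host, port))
```

In a per-frame loop, a tracker would supply `x_center` for each performer, and `send_azimuth(bbox_to_azimuth(x_center, frame_width))` would forward the result to whatever OSC receiver the DAW exposes. A real system would likely also use depth and stage geometry rather than horizontal position alone.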
