“Spatial Sensing: Augmenting Human Understanding in Data-Driven Exploration” by Humml

Conference:

Type(s):

Title:

- Spatial Sensing: Augmenting Human Understanding in Data-Driven Exploration

Session/Category Title:

- Seeing Space and Time

Presenter(s)/Author(s):

Moderator(s):

Abstract:

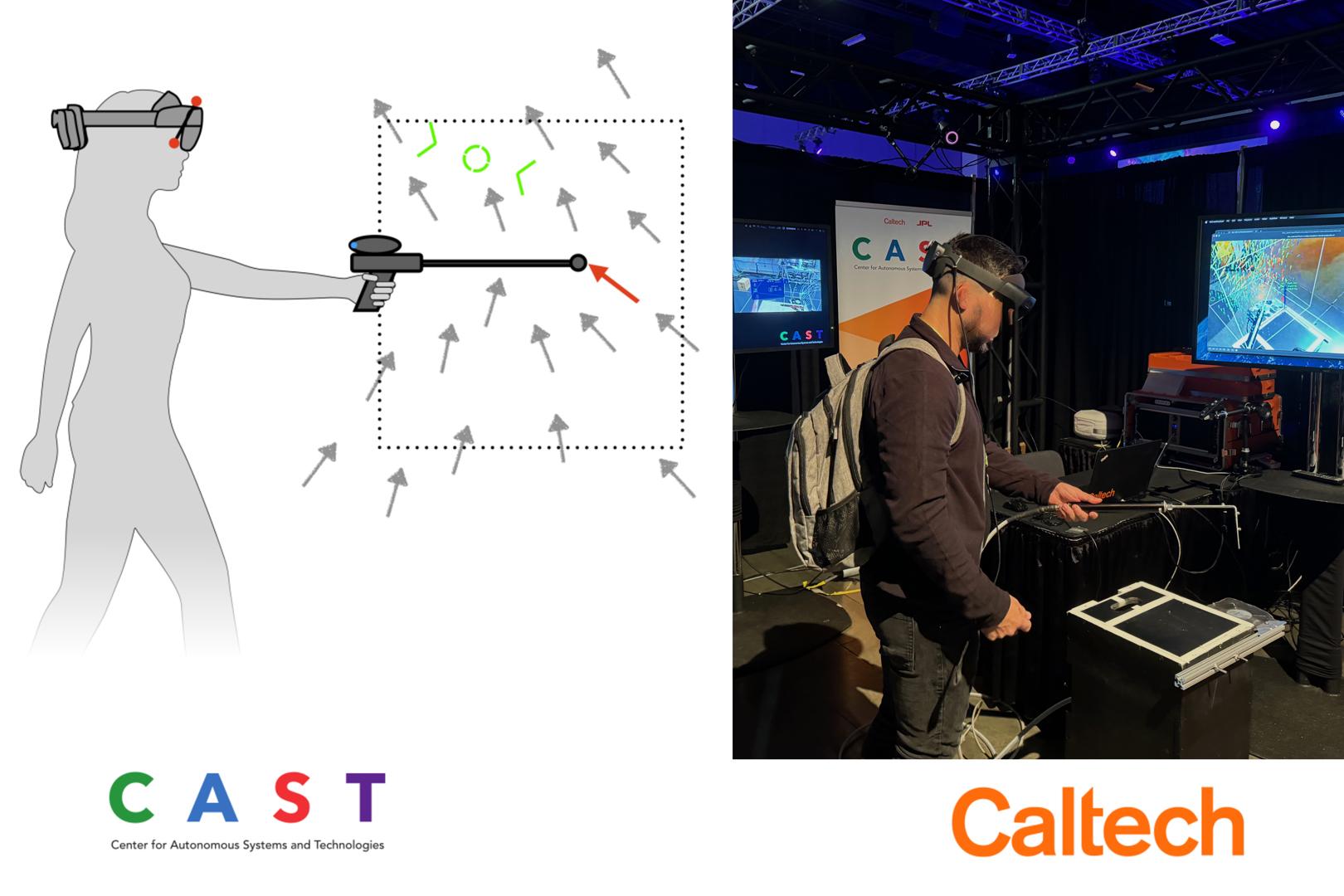

Machine-Guided Spatial Sensing is a novel measurement technique that combines augmented reality (AR), active learning, and human-in-the-loop interaction to measure environmental fields with high accuracy and efficiency. The system uses a head-mounted display (HMD) and a handheld sensor to capture physical quantities, such as flow fields and gas concentrations, in real time. A central data model continuously processes the collected measurements and updates its prediction of the environmental field. Using active learning, the system identifies regions of high uncertainty and guides the operator to optimal sampling locations through intuitive AR visualizations. This closed-loop framework effectively transfers the sampling expertise from the operator to the machine learning algorithm, enabling efficient and accurate field estimation. Experimental evaluations demonstrate that the proposed method achieves high accuracy and significantly reduces measurement times compared to traditional sampling techniques. These results indicate that the approach reduces setup complexity, lowers costs, and enhances data reliability across diverse environments. The system’s flexibility allows for integration with various environmental sensors, making it suitable for applications in engineering, scientific research, and environmental protection. By leveraging real-time data analysis and human-machine collaboration, Machine-Guided Spatial Sensing provides a robust, user-friendly solution for complex spatial measurement challenges. Future research will focus on enhancing sensor fusion and adapting the system to dynamic environmental conditions.
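The closed loop described in the abstract (measure, update the field model, locate the region of highest uncertainty, direct the operator there) can be illustrated with a minimal sketch. The abstract does not name the data model, so this example assumes a Gaussian process with a squared-exponential kernel as a stand-in. Every function name, the kernel length scale, the candidate grid, and `hypothetical_field` (which plays the role of a handheld sensor reading) are illustrative assumptions, not details of the presented system.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=0.3):
    """Squared-exponential kernel between point sets of shape (n, d) and (m, d)."""
    sq_dist = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)


def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and variance of a zero-mean, unit-variance GP (assumed model)."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_qt = rbf_kernel(x_query, x_train)
    mean = k_qt @ np.linalg.solve(k_tt, y_train)
    # Prior variance is 1; subtract the part explained by the measurements.
    var = 1.0 - np.sum(k_qt * np.linalg.solve(k_tt, k_qt.T).T, axis=1)
    return mean, np.clip(var, 0.0, None)


def hypothetical_field(x):
    """Stand-in for a sensor reading at positions x; purely illustrative."""
    return np.sin(3.0 * x[:, 0]) * np.cos(2.0 * x[:, 1])


def run_guided_sampling(n_steps=15, seed=0):
    """Repeatedly sample where the model is least certain (uncertainty sampling)."""
    rng = np.random.default_rng(seed)
    grid = np.linspace(0.0, 1.0, 15)
    candidates = np.array([[gx, gy] for gx in grid for gy in grid])

    # A few initial measurements at random positions in the unit square.
    x_train = rng.uniform(0.0, 1.0, size=(3, 2))
    y_train = hypothetical_field(x_train)

    max_var_history = []
    for _ in range(n_steps):
        _, var = gp_posterior(x_train, y_train, candidates)
        max_var_history.append(float(var.max()))
        # In the described system, this target would be shown to the operator in AR.
        target = candidates[np.argmax(var)]
        x_train = np.vstack([x_train, target])
        y_train = np.append(y_train, hypothetical_field(target[None, :]))
    return x_train, y_train, max_var_history


if __name__ == "__main__":
    x, y, history = run_guided_sampling()
    print(f"samples: {len(x)}, max variance: {history[0]:.3f} -> {history[-1]:.3f}")
```

In this sketch the largest predictive variance over the candidate grid shrinks as guided samples accumulate, which is the sense in which the model, rather than the operator, decides where to measure next. A real system would also need to handle sensor noise, tracking error, and fields that change over time.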
