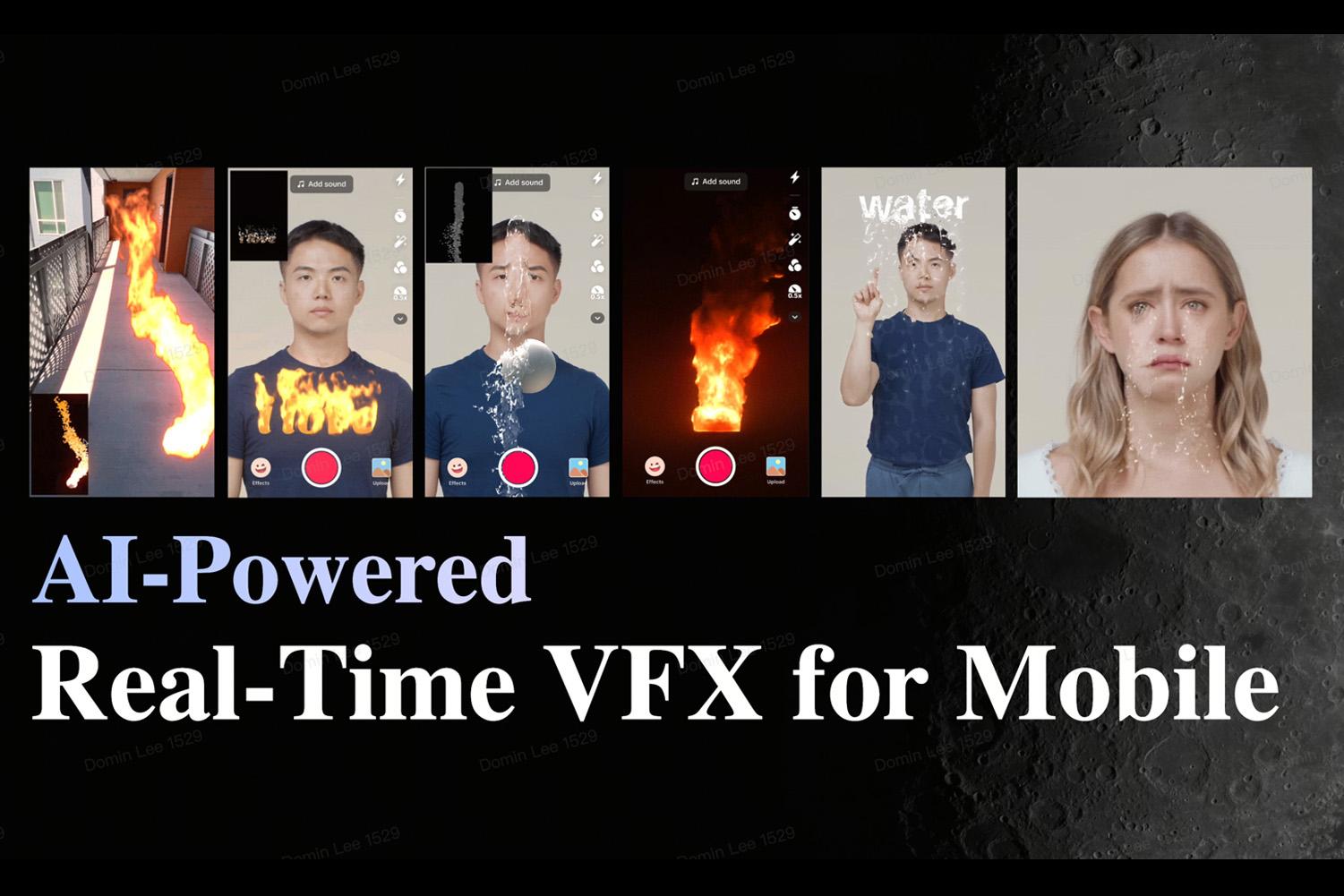

“AI-Powered Real-Time VFX for Mobile” by Lee, Li and Lin

Conference:

Type(s):

Title:

- AI-Powered Real-Time VFX for Mobile

Session/Category Title:

- Real-Time and Mobile Techniques

Presenter(s)/Author(s):

Moderator(s):

Abstract:

Cinematic visual effects have long been the domain of high-end hardware, reserved for movie studios and powerful gaming rigs. But what if we could bring that same level of quality to mobile devices—without sacrificing performance? AI VFX was born out of this challenge: to push the boundaries of real-time visual effects on mobile by merging Generative Adversarial Networks (GANs) with the VFX Graph. In this talk, we’ll take you behind the scenes of AI VFX, a technology that enables stunning, real-time fire and water effects that run seamlessly across the full spectrum of mobile devices—from six-year-old Android phones to the latest iPhones. By integrating AI-powered enhancements into the VFX Graph (GPU particle system), we’ve developed an approach that balances visual fidelity with efficiency, ensuring cinematic-quality effects can exist in mobile gaming, social media, and beyond. We’ll dive into the technical breakthroughs that made this possible, including synthetic data generation, real-time inference optimization, and workflows for combining GAN and VFX Graph—all while overcoming the hardware constraints of mobile platforms. Through recorded demos and real-world examples from TikTok, you’ll see firsthand how AI is revolutionizing mobile VFX, unlocking new creative possibilities for developers, artists, and storytellers.

References:

[1] Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. 2014. Generative adversarial nets. In Advances in Neural Information Processing Systems. 2672–2680.

[2] Effect House. 2024. Visual Effects Editor Overview. https://effecthouse.tiktok.com/learn/guides/visual-effects-editor/

[3] Phillip Isola, Jun-Yan Zhu, Tinghui Zhou, and Alexei A. Efros. 2016. Image-to-Image Translation with Conditional Adversarial Networks. CoRR abs/1611.07004 (2016). arXiv:1611.07004 http://arxiv.org/abs/1611.07004

[4] Yujun Shen, Zhiyi Zhang, Dingdong Yang, Yinghao Xu, Ceyuan Yang, and Jiapeng Zhu. 2022. Hammer: An Efficient Toolkit for Training Deep Models. https://github.com/bytedance/Hammer