“Spatial Design and CoreML for the Apple Vision Pro” by Ramos and Yuen

Conference:

Experience Type(s):

Labs Type(s):

Title:

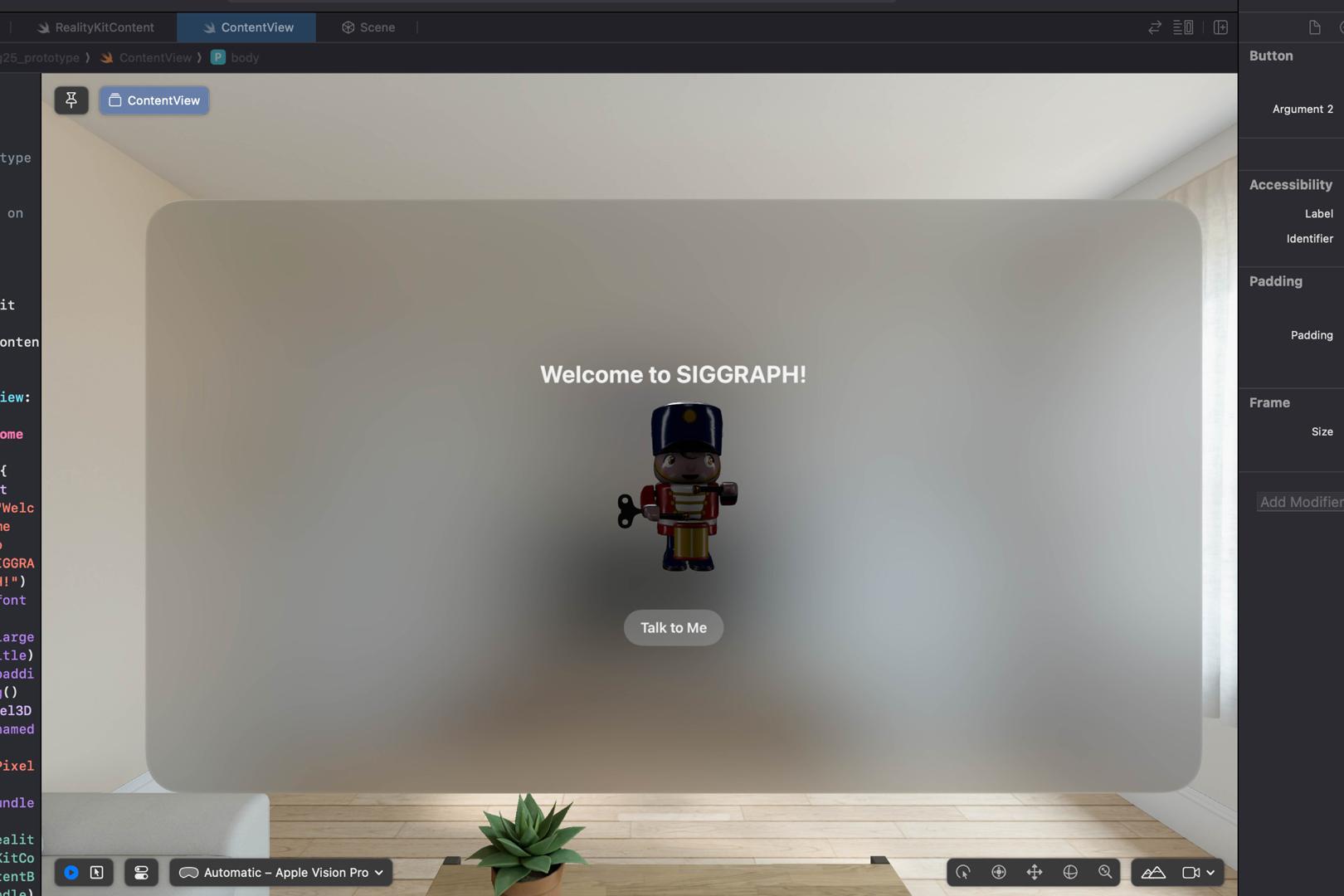

- Spatial Design and CoreML for the Apple Vision Pro

Organizer(s)/Presenter(s):

Description:

Join us in learning how to create 3D and 2D interfaces and graphics with RealityKit, CoreML, and SwiftUI for visionOS applications. Together, we will cover the core design principles of 2D/3D UI and dive into depth perception, spatial awareness, and natural gestures.

References:

[1] Apple. 2023. Introducing Apple Vision Pro. https://www.apple.com/newsroom/2023/06/introducing-apple-vision-pro/

[2] Apple CoreML. 2025a. MNIST. https://developer.apple.com/machine-learning/models/

[3] Apple CoreML. 2025b. Vision Framework. https://developer.apple.com/documentation/vision/

[4] Apple Developer. 2024. Xcode. https://developer.apple.com/xcode/

[5] Hugging Face. 2025. CoreML: BERT-SQuAD. https://github.com/huggingface/transformers