“Nano-Optics for Depth Sensing” by Xu, Li, Wu, Zhu, Zhang, et al. …

Conference:

Experience Type(s):

Title:

- Nano-Optics for Depth Sensing

Organizer(s)/Presenter(s):

Interest Area(s):

- New Technologies

Description:

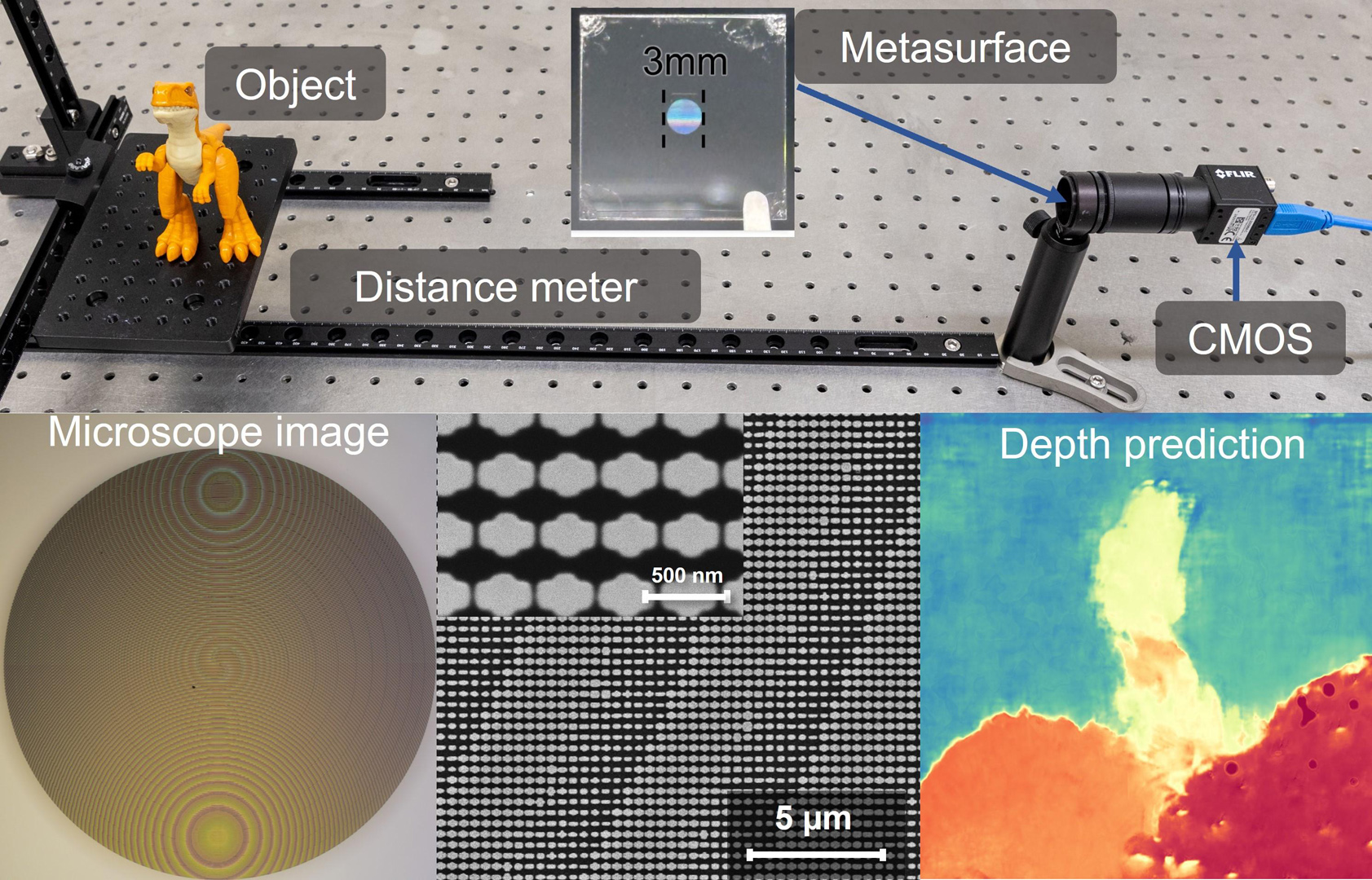

Depth imaging powers applications from robotics to immersive VR/AR, yet conventional solutions often rely on bulky hardware or inaccurate approximations. We present Nano-3D, an ultra-compact, metasurface-based neural depth sensor that captures an orthogonally polarized image pair in a single shot and reconstructs metric depth in real time. At SIGGRAPH 2025, participants can place 3D-printed objects of various shapes and sizes in front of Nano-3D and watch robust depth maps update live, even under challenging conditions. A side-by-side display showing the output of a state-of-the-art monocular depth estimation model highlights Nano-3D’s superior accuracy. Participants can also inspect the 700-nm-thick TiO2 metasurface chip under a microscope and see the nanophotonic structures that power Nano-3D.
