“Deep appearance models for face rendering” by Lombardi, Saragih, Simon and Sheikh

Conference:

Type:

Entry Number: 68

Title:

- Deep appearance models for face rendering

Session/Category Title: Interaction/VR

Presenter(s)/Author(s):

Moderator(s):

Abstract:

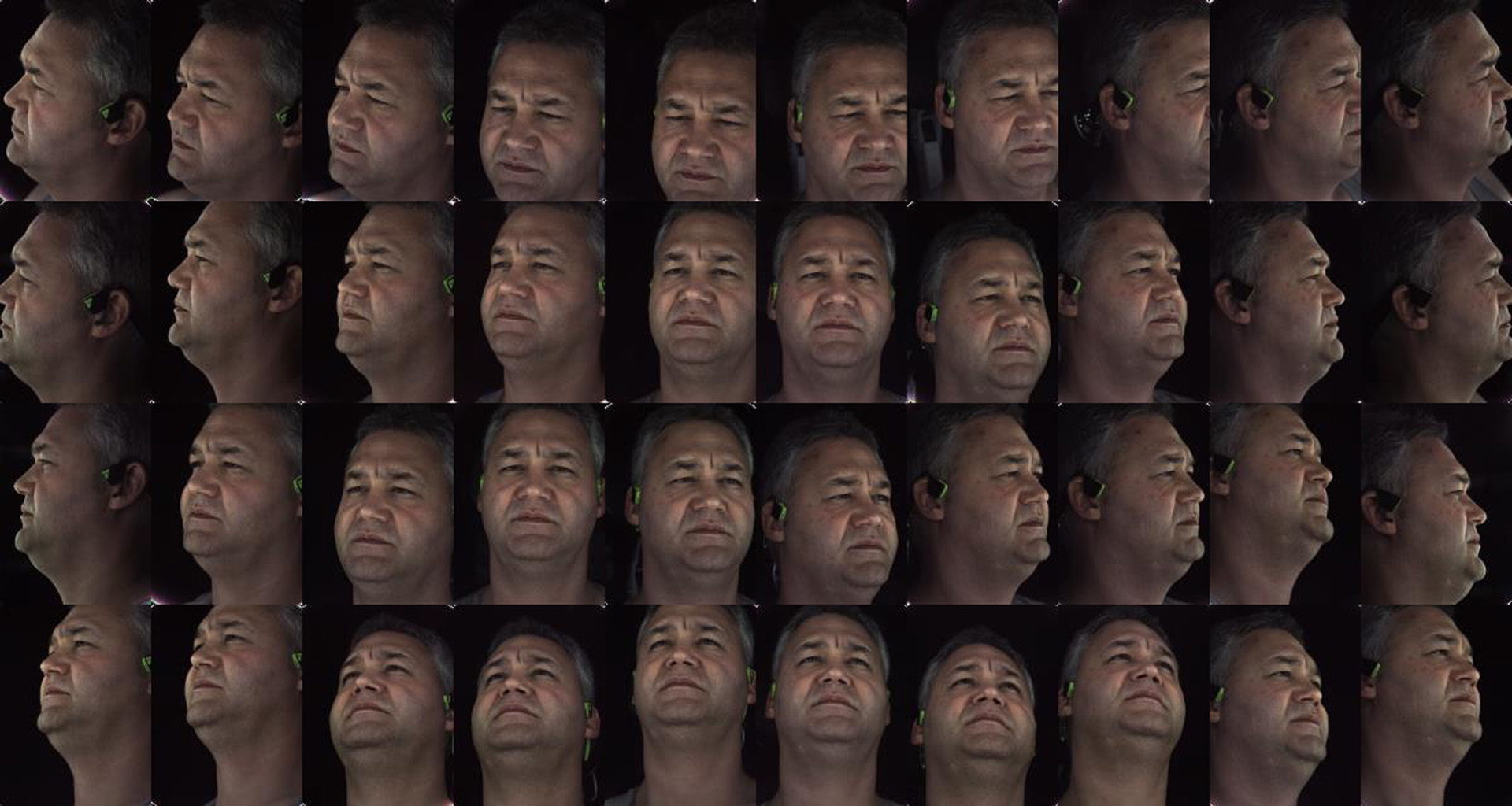

We introduce a deep appearance model for rendering the human face. Inspired by Active Appearance Models, we develop a data-driven rendering pipeline that learns a joint representation of facial geometry and appearance from a multiview capture setup. Vertex positions and view-specific textures are modeled using a deep variational autoencoder that captures complex nonlinear effects while producing a smooth and compact latent representation. View-specific texture enables the modeling of view-dependent effects such as specularity. In addition, it can also correct for imperfect geometry stemming from biased or low resolution estimates. This is a significant departure from the traditional graphics pipeline, which requires highly accurate geometry as well as all elements of the shading model to achieve realism through physically-inspired light transport. Acquiring such a high level of accuracy is difficult in practice, especially for complex and intricate parts of the face, such as eyelashes and the oral cavity. These are handled naturally by our approach, which does not rely on precise estimates of geometry. Instead, the shading model accommodates deficiencies in geometry though the flexibility afforded by the neural network employed. At inference time, we condition the decoding network on the viewpoint of the camera in order to generate the appropriate texture for rendering. The resulting system can be implemented simply using existing rendering engines through dynamic textures with flat lighting. This representation, together with a novel unsupervised technique for mapping images to facial states, results in a system that is naturally suited to real-time interactive settings such as Virtual Reality (VR).

References:

1. Volker Blanz and Thomas Vetter. 1999. A Morphable Model for the Synthesis of 3D Faces. In Proceedings of the 26th Annual Conference on Computer Graphics and Interactive Techniques (SIGGRAPH ’99). ACM Press/Addison-Wesley Publishing Co., New York, NY, USA, 187–194. Google ScholarDigital Library

2. S. M. Boker, J. F. Cohn, B. J. Theobald, I. Matthews, M. Mangini, J. R. Spies, Z Ambadar, and T. R. Brick. 2011. Motion Dynamics, Not Perceived Sex, Influence Head Movements in Conversation. J. Exp. Psychol. Hum. Percept. Perform. 37 (2011). 874–891.Google ScholarCross Ref

3. Konstantinos Bousmalis, Nathan Silberman, David Dohan, Dumitru Erhan, and Dilip Krishnan. 2017. Unsupervised Pixel-Level Domain Adaptation with Generative Adversarial Networks. (07 2017), 95–104.Google Scholar

4. Chen Cao, Hongzhi Wu, Yanlin Weng, Tianjia Shao, and Kun Zhou. 2016. Real-time Facial Animation with Image-based Dynamic Avatars. ACM Trans. Graph. 35, 4, Article 126 (July 2016), 12 pages. Google ScholarDigital Library

5. Dan Casas, Andrew Feng, Oleg Alexander, Graham Fyffe, Paul Debevec, Ryosuke Ichikari, Hao Li, Kyle Olszewski, Evan Suma, and Ari Shapiro. 2016. Rapid Photorealistic Blendshape Modeling from RGB-D Sensors. In Proceedings of the 29th International Conference on Computer Animation and Social Agents (CASA ’16). ACM, New York, NY, USA, 121–129. Google ScholarDigital Library

6. S. A. Cassidy, B. Stenger, K. Yanagisawa, R. Cipolla, R. Anderson, V. Wan, S. Baron-Cohen, and L Van Dongen. 2016. Expressive Visual Text-to-Speech as an Assistive Technology for Individuals with Autism Spectrum Conditions. Computer Vision and Image Understanding 148 (2016), 193–200. Google ScholarDigital Library

7. Xi Chen, Diederik P. Kingma, Tim Salimans, Yan Duan, Prafulla Dhariwal, John Schulman, Ilya Sutskever, and Pieter Abbeel. 2016. Variational Lossy Autoencoder. CoRR abs/1611.02731 (2016). arXiv:1611.02731 http://arxiv.org/abs/1611.02731Google Scholar

8. Timothy F. Cootes, Gareth J. Edwards, and Christopher J. Taylor. 2001. Active Appearance Models. IEEE Transactions on Pattern Analysis and Machine Intelligence 23, 6 (June 2001), 681–685. Google ScholarDigital Library

9. Kristin J. Dana, Bram van Ginneken, Shree K. Nayar, and Jan J. Koenderink. 1999. Reflectance and Texture of Real-world Surfaces. ACM Transactions on Graphics 18, 1 (Jan. 1999), 1–34. Google ScholarDigital Library

10. G. J. Edwards, C. J. Taylor, and T. F. Cootes. 1998. Interpreting Face Images Using Active Appearance Models. In Proceedings of the 3rd. International Conference on Face & Gesture Recognition (FG ’98). IEEE Computer Society, Washington, DC, USA, 300–. Google ScholarDigital Library

11. P. Ekman. 1980. The Face of Man: Expressions of Universal Emotions in a New Guinea Village. Garland Publishing, Incorporated.Google Scholar

12. Steven J. Gortler, Radek Grzeszczuk, Richard Szeliski, and Michael F. Cohen. 1996. The Lumigraph. In Proceedings of the 23rd Annual Conference on Computer Graphics and Interactive Techniques (SIGGRAPH 1996). ACM, New York, NY, USA, 43–54. Google ScholarDigital Library

13. X. Hou, L. Shen, K. Sun, and G. Qiu. 2017. Deep Feature Consistent Variational Autoencoder. In 2017 IEEE Winter Conference on Applications of Computer Vision (WACV). 1133–1141.Google Scholar

14. Wei-Ning Hsu, Yu Zhang, and James R. Glass. 2017. Unsupervised Learning of Disentangled and Interpretable Representations from Sequential Data. In NIPS. 1876–1887.Google Scholar

15. Liwen Hu, Shunsuke Saito, Lingyu Wei, Koki Nagano, Jaewoo Seo, Jens Fursund, Iman Sadeghi, Carrie Sun, Yen-Chun Chen, and Hao Li. 2017. Avatar Digitization from a Single Image for Real-time Rendering. ACM Trans. Graph. 36, 6, Article 195 (Nov. 2017), 14 pages. Google ScholarDigital Library

16. Alexandra Eugen Ichim, Sofien Bouaziz, and Mark Pauly. 2015. Dynamic 3D Avatar Creation from Hand-held Video Input. ACM Transactions on Graphics 34, 4, Article 45 (July 2015), 14 pages. Google ScholarDigital Library

17. Sergey Ioffe and Christian Szegedy. 2015. Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift.. In ICML (JMLR Workshop and Conference Proceedings), Francis R. Bach and David M. Blei (Eds.), Vol. 37. JMLR.org, 448–456. http://dblp.uni-trier.de/db/conf/icml/icml2015.html#IoffeS15 Google ScholarDigital Library

18. Sing Bing Kang, R. Szeliski, and P. Anandan. 2000. The geometry-image representation tradeoff for rendering. In Proceedings 2000 International Conference on Image Processing, Vol. 2. 13–16 vol.2.Google Scholar

19. Vahid Kazemi and Josephine Sullivan. 2014. One Millisecond Face Alignment with an Ensemble of Regression Trees. In IEEE International Conference on Computer Vision and Pattern Recognition. Google ScholarDigital Library

20. Taeksoo Kim, Moonsu Cha, Hyunsoo Kim, Jung Kwon Lee, and Jiwon Kim. 2017. Learning to Discover Cross-Domain Relations with Generative Adversarial Networks. In ICML.Google Scholar

21. Diederik P. Kingma and Jimmy Ba. 2014. Adam: A Method for Stochastic Optimization. Proceedings of the 3rd International Conference on Learning Representations abs/1412.6980 (2014).Google Scholar

22. Diederik P. Kingma and Max Welling. 2013. Auto-Encoding Variational Bayes. In Proceedings of the 2nd International Conference on Learning Representations.Google Scholar

23. Oliver Klehm, Fabrice Rousselle, Marios Papas, Derek Bradley, Christophe Hery, Bernd Bickel, Wojciech Jarosz, and Thabo Beeler. 2015. Recent Advances in Facial Appearance Capture. Computer Graphics Forum (Proceedings of Eurographics) 34, 2 (May 2015), 709–733. Google ScholarDigital Library

24. Reinhard Knothe, Brian Amberg, Sami Romdhani, Volker Blanz, and Thomas Vetter. 2011. Morphable Models of Faces. Springer London, London, 137–168.Google Scholar

25. Tejas D. Kulkarni, William F. Whitney, Pushmeet Kohli, and Joshua B. Tenenbaum. 2015. Deep Convolutional Inverse Graphics Network. In Proceedings of the 28th International Conference on Neural Information Processing Systems (NIPS’15). MIT Press, Cambridge, MA, USA, 2539–2547. Google ScholarDigital Library

26. Samuli Laine, Tero Karras, Timo Aila, Antti Herva, Shunsuke Saito, Ronald Yu, Hao Li, and Jaakko Lehtinen. 2017. Production-level Facial Performance Capture Using Deep Convolutional Neural Networks. In Proceedings of the ACM SIGGRAPH/Eurographics Symposium on Computer Animation (SCA ’17). ACM, New York, NY, USA, Article 10, 10 pages. Google ScholarDigital Library

27. J.P. Lewis and Ken Anjyo. 2010. Direct Manipulation Blendshapes. IEEE Computer Graphics and Applications 30, 4 (2010), 42–50. Google ScholarDigital Library

28. John P. Lewis, Ken ichi Anjyo, Taehyun Rhee, Mengjie Zhang, Frédéric H. Pighin, and Zhigang Deng. 2014. Practice and Theory of Blendshape Facial Models. In Proc. Eurographics State of The Art Report.Google Scholar

29. Ming-Yu Liu, Thomas Breuel, and Jan Kautz. 2017. Unsupervised Image-to-image Translation Networks. In NIPS.Google Scholar

30. Iain Matthews and Simon Baker. 2004. Active Appearance Models Revisited. International Journal of Computer Vision 60, 2 (Nov. 2004), 135–164. Google ScholarDigital Library

31. Kyle Olszewski, Joseph J. Lim, Shunsuke Saito, and Hao Li. 2016. High-Fidelity Facial and Speech Animation for VR HMDs. Proceedings of ACM SIGGRAPH Asia 2016 35, 6 (December 2016). Google ScholarDigital Library

32. Alec Radford, Luke Metz, and Soumith Chintala. 2015. Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks. CoRR abs/1511.06434 (2015). http://arxiv.org/abs/1511.06434Google Scholar

33. Tim Salimans and Diederik P Kingma. 2016. Weight Normalization: A Simple Reparameterization to Accelerate Training of Deep Neural Networks. In Advances in Neural Information Processing Systems 29, D. D. Lee, M. Sugiyama, U. V. Luxburg, I. Guyon, and R. Garnett (Eds.). Curran Associates, Inc., 901–909. Google ScholarDigital Library

34. Yaniv Taigman, Ming Yang, Marc’Aurelio Ranzato, and Lior Wolf. 2014. DeepFace: Closing the Gap to Human-Level Performance in Face Verification. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition (CVPR ’14). IEEE Computer Society, Washington, DC, USA, 1701–1708. Google ScholarDigital Library

35. G. Taubin. 1995. Curve and Surface Smoothing Without Shrinkage. In Proceedings of the Fifth International Conference on Computer Vision (ICCV ’95). IEEE Computer Society, Washington, DC, USA, 852–. Google ScholarDigital Library

36. J. Thies, M. Zollhöfer, M. Stamminger, C. Theobalt, and M. Nießner. 2018. FaceVR: Real-Time Gaze-Aware Facial Reenactment in Virtual Reality. ACM Transactions on Graphics 2018 (TOG) (2018). Google ScholarDigital Library

37. Georgios Tzimiropoulos, Joan Alabort-i Medina, Stefanos Zafeiriou, and Maja Pantic. 2013. Generic Active Appearance Models Revisited. Springer Berlin Heidelberg, Berlin, Heidelberg, 650–663. Google ScholarDigital Library

38. Xuehan Xiong and Fernando De la Torre Frade. 2013. Supervised Descent Method and its Applications to Face Alignment. In IEEE International Conference on Computer Vision and Pattern Recognition. Pittsburgh, PA. Google ScholarDigital Library

39. Jun-Yan Zhu, Taesung Park, Phillip Isola, and Alexei A Efros. 2017. Unpaired Image-to-image Translation using Cycle-Consistent Adversarial Networks. (December 2017).Google Scholar