“Creative Use of Signal Processing and MARF in ISSv2 and Beyond” by Song, Mokhov, Song and Mudur

Conference:

Type:

Entry Number: 04

Title:

- Creative Use of Signal Processing and MARF in ISSv2 and Beyond

Presenter(s)/Author(s):

Abstract:

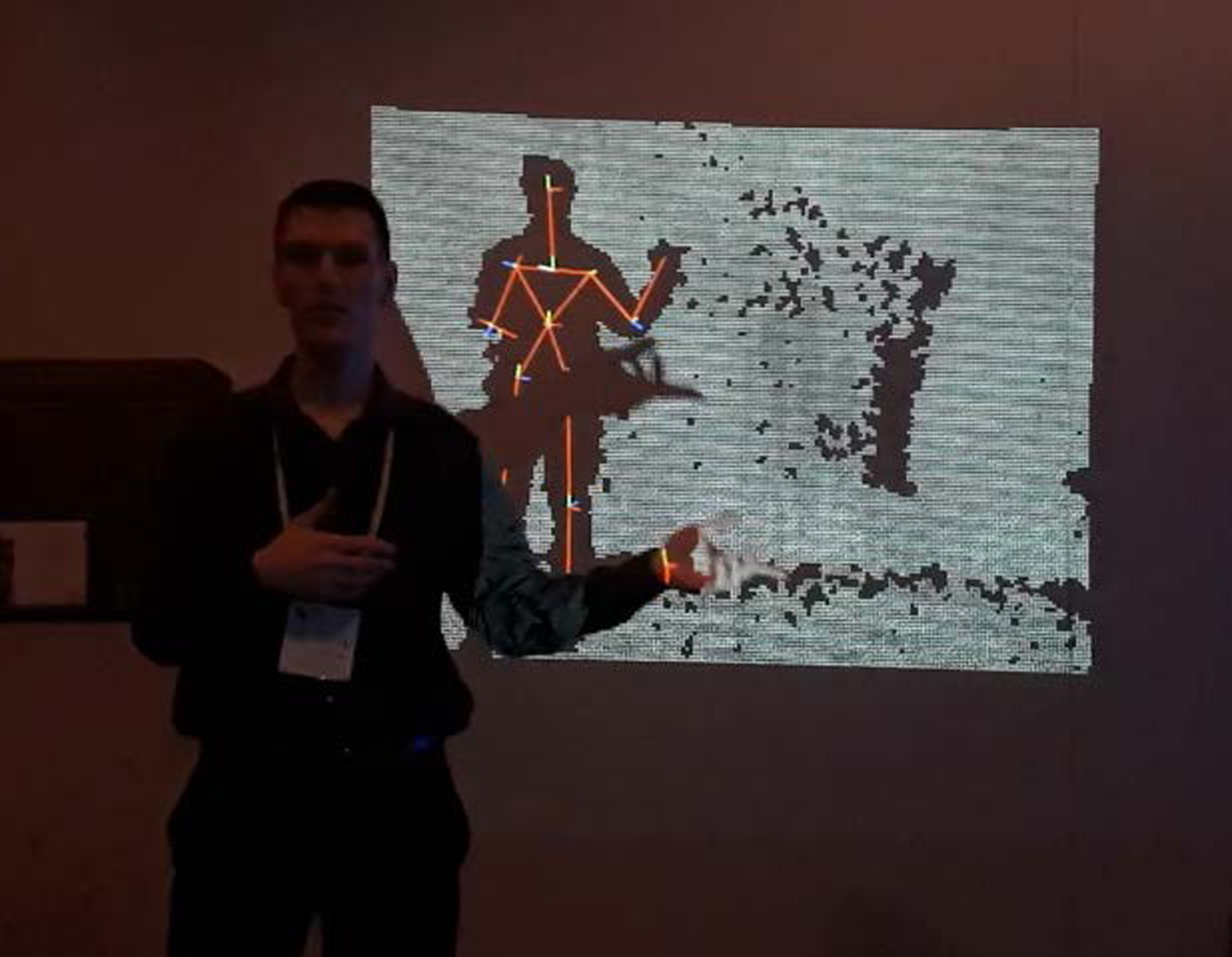

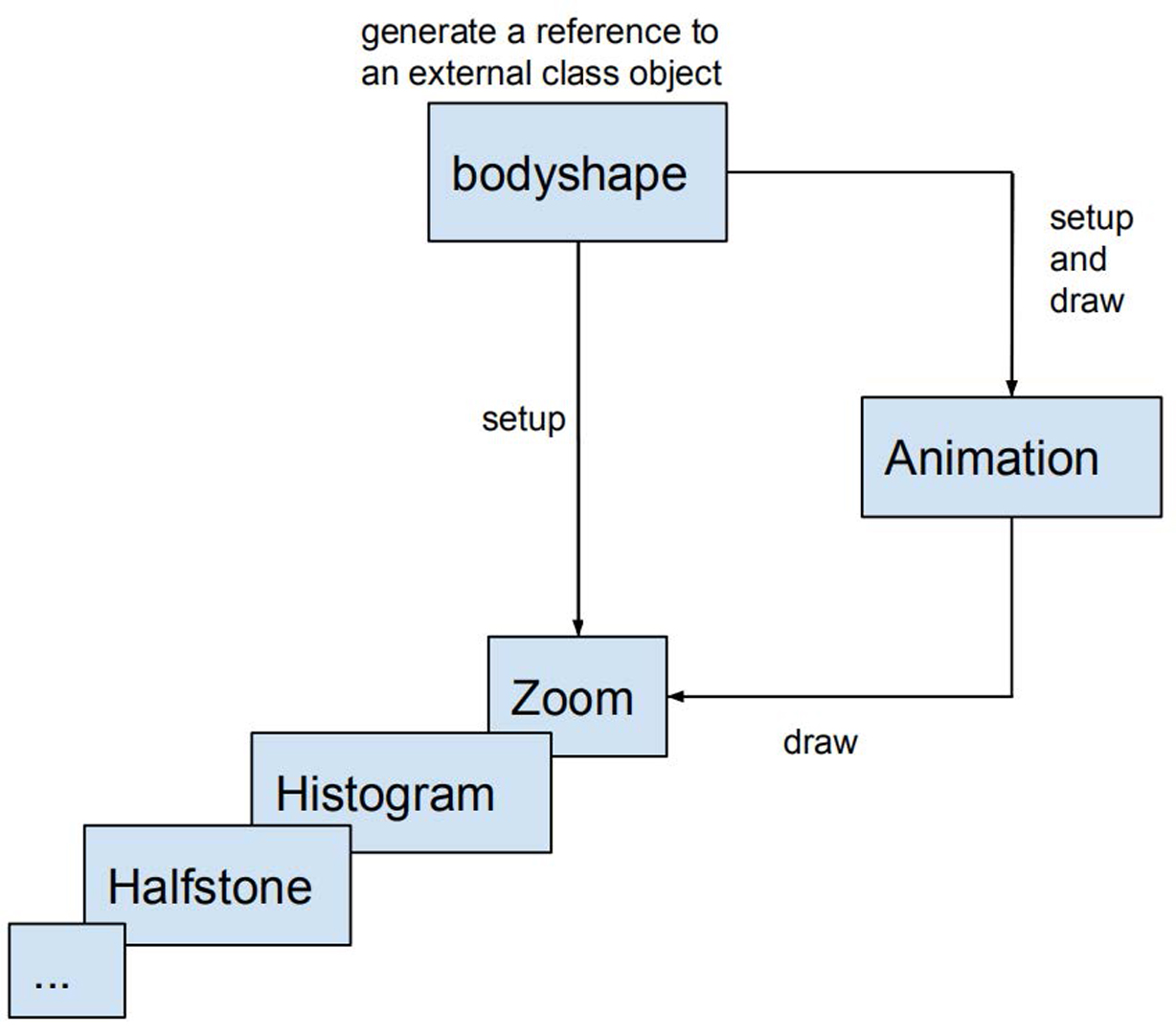

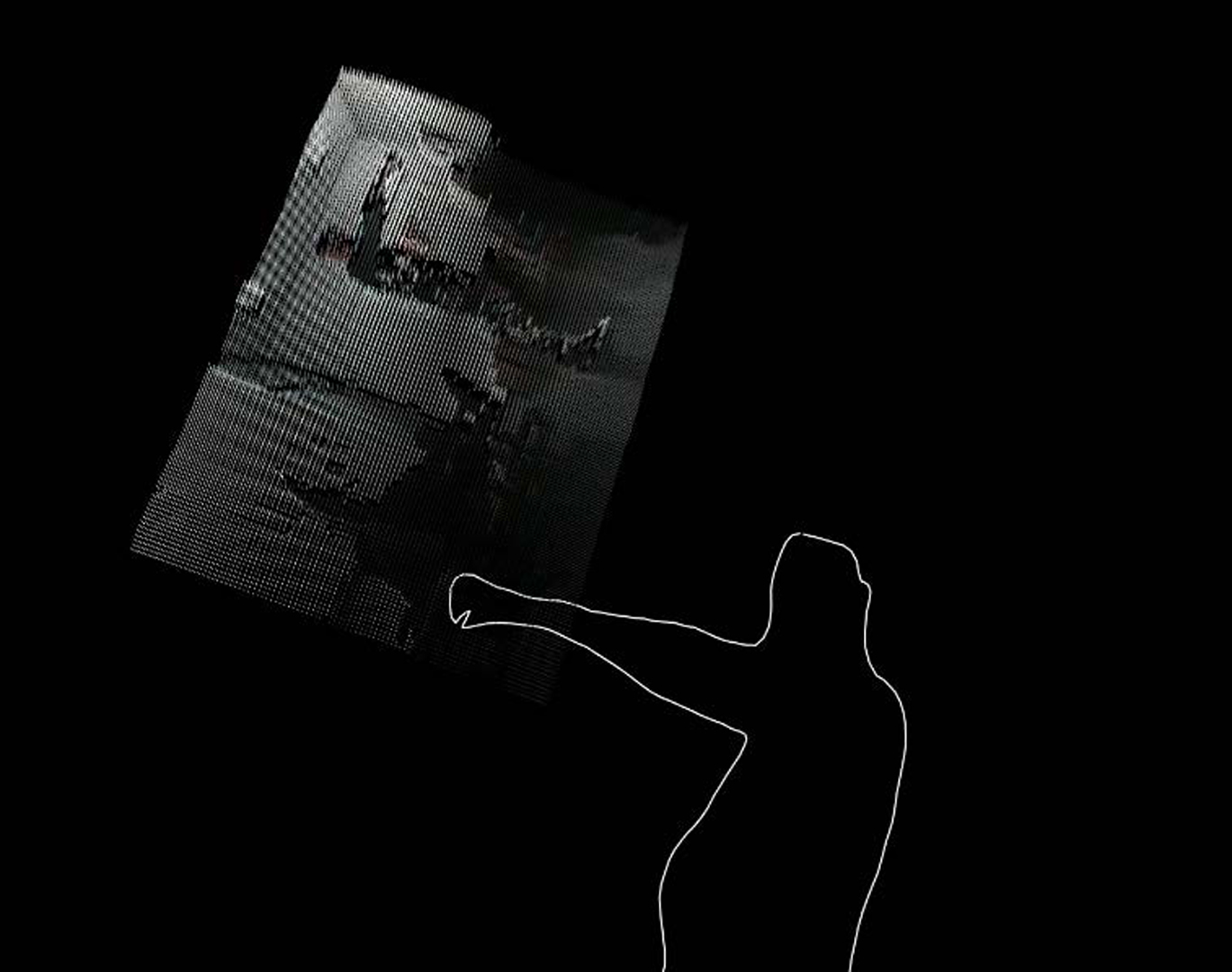

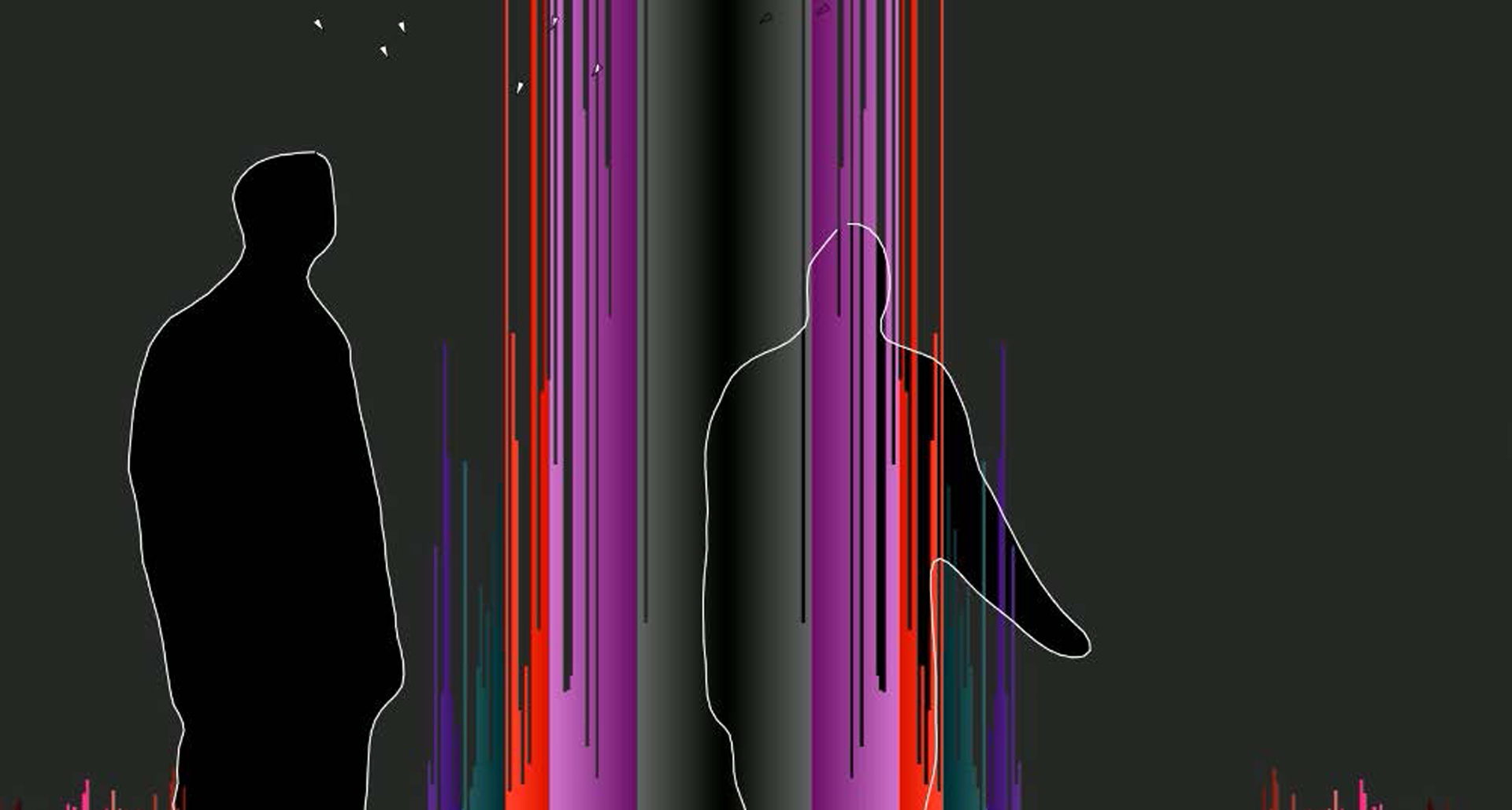

Illimitable Space System (ISS) is a real-time, interactive, configurable toolbox that artists use to create interactive visual effects in theatre performances and documentaries from user inputs such as gestures and voice. Kinect has been the primary input device for motion and video data capture. In this work, in addition to the motion-based visual and geometric data processing facilities already present in ISSv2, we describe our efforts to incorporate audio processing with the help of the Modular Audio Recognition Framework (MARF). Combining computer vision and audio processing to interpret both music and human motion and create imagery in real time is both artistically interesting and technically challenging. With these additional modules, ISSv2 can support interactive performance authoring that employs visual tracking and signal processing to create trackable human-shaped animations in real time. The new modules are incorporated into the Processing software sketchbook and language framework used by ISSv2. We verify the effects of these modules through live demonstrations, which are briefly described.

References:

- Ben Fry and Casey Reas. 2001–2018. Processing – a programming language, development environment, and online community. [online]. (2001–2018). http://www.processing.org/.

- W. Scott Meador, Timothy J. Rogers, Kevin O’Neal, Eric Kurt, and Carol Cunningham. 2004. Mixing Dance Realities: Collaborative Development of Live-motion Capture in a Performing Arts Environment. Comput. Entertain. 2, 2 (April 2004), 12–26. https://doi.org/10.1145/1008213.1008233

- Serguei A. Mokhov. 2015. A MARFCLEF Approach to LifeCLEF 2015 Tasks. In Proceedings of LifeCLEF 2015. CEUR-WS.

- Serguei A. Mokhov, Miao Song, Satish Chilkaka, Zinia Das, Jie Zhang, Jonathan Llewellyn, and Sudhir P. Mudur. 2016. Agile Forward-Reverse Requirements Elicitation as a Creative Design Process: A Case Study of Illimitable Space System v2. Journal of Integrated Design and Process Science 20, 3 (Sept. 2016), 3–37. https://doi.org/10.3233/jid-2016-0026

- Private School Entertainment. 2013. Making Film and Art with the Xbox Kinect–An Exist Elsewhere Behind the Scenes Featurette. [online]. (2013). http://vimeo.com/73163837.

- Miao Song, Serguei A. Mokhov, Jilson Thomas, and Sudhir P. Mudur. 2015. Applications of the Illimitable Space System in the Context of Media Technology and On-Stage Performance: a Collaborative Interdisciplinary Experience. In Proceedings of the 2015 IEEE Games Entertainment Media Conference (GEM 2015), Elena G. Bertozzi, Bill Kapralos, Nahum D. Gershon, and Jim R. Parker (Eds.). IEEE, 73–80. https://doi.org/10.1109/GEM.2015.7377204

- Mehdi Tayoubi. 2013. Technological backstage–Mr & Ms Dream a performance by Pietragalla Derouault Company & Dassault Systèmes. [online]. (2013). http://vimeo.com/68406063.

- Le Thanh Tung. 2013. Kinect test .2 for Emily’s dance show. [online]. (16 Oct. 2013). http://www.youtube.com/watch?v=Vbxf4xRLtrQ.

- Chris Vik. 2012. Dance Controlled Kinect Music (Part 1). [online]. (5 March 2012). http://www.youtube.com/watch?v=qXnLxi2nzrY.