“Layered Telepresence: Simultaneous Multi Presence Experience using Eye Gaze based Perceptual Awareness Blending” by Saraiji, Sugimoto, Fernando, Minamizawa and Tachi

Conference:

- SIGGRAPH 2016

Entry Number: 14

Title:

- Layered Telepresence: Simultaneous Multi Presence Experience using Eye Gaze based Perceptual Awareness Blending

Description:

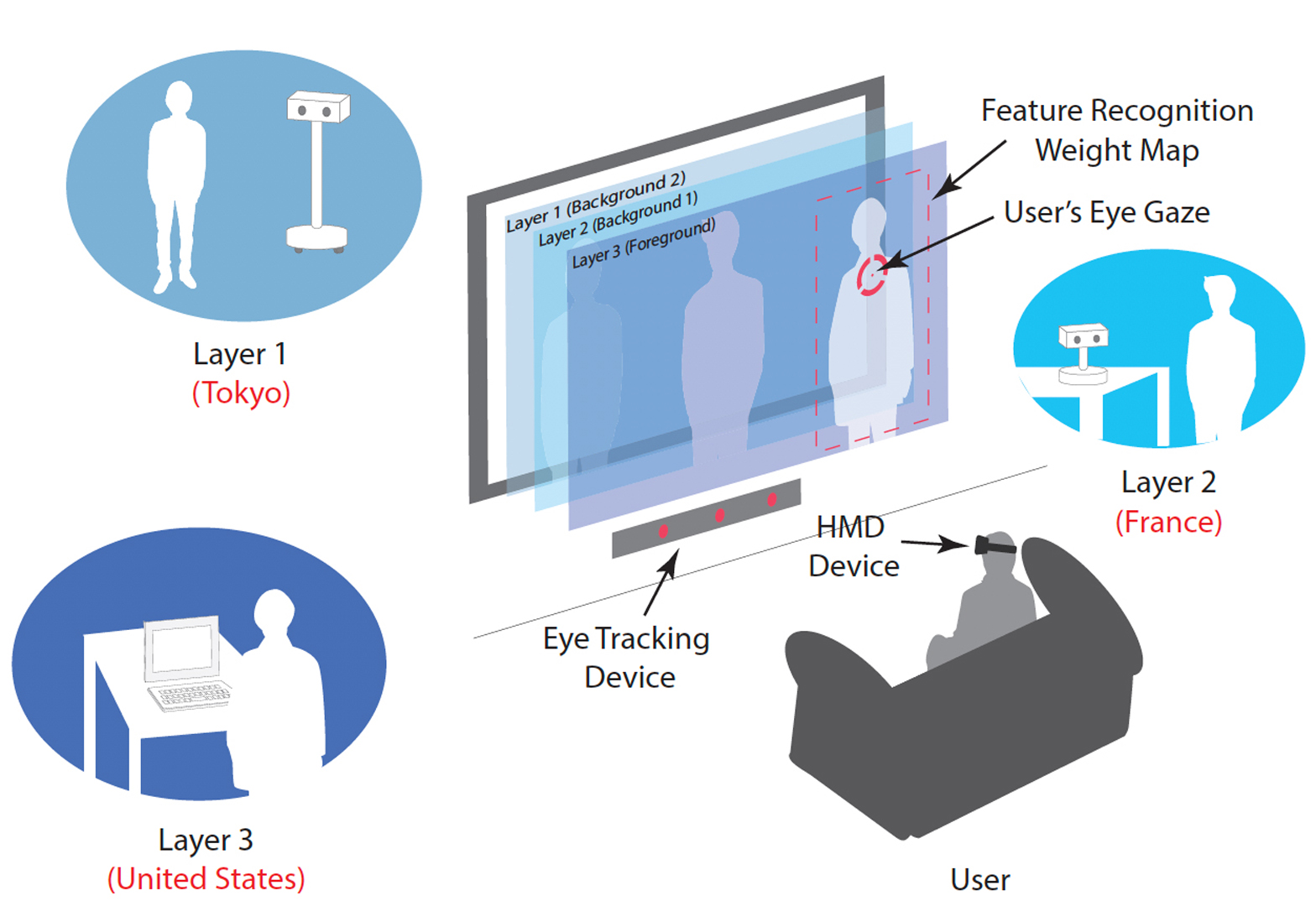

We propose “Layered Telepresence”, a novel method of experiencing simultaneous multi-presence. The user's eye gaze and perceptual awareness are blended with real-time audio-visual information received from multiple telepresence robots. The system arranges the audio-visual information received from the robots into a priority-driven layered stack. A weighted feature map is created for each layer from the objects recognized in it using image-processing techniques, and the layer with the highest weight around the user's gaze is pushed into the foreground. All other layers are pushed to the background, producing an artificial depth-of-field effect. The method is not limited to robots: each layer can represent any audio-visual content, such as a video see-through HMD, a television screen, or a PC screen, enabling true multitasking.
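The layer selection described above can be illustrated with a minimal sketch. It is not the authors' implementation: the `Layer` class, the square gaze window, the `radius` parameter, and the fixed `background_blur` value are all assumptions made for illustration, and the feature maps are hand-written stand-ins for the output of object recognition.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Layer:
    """One audio-visual source (hypothetical structure, not from the paper)."""
    name: str
    # Assumed per-pixel feature weights, indexed as feature_map[y][x].
    feature_map: List[List[float]]


def gaze_weight(layer: Layer, gaze: Tuple[int, int], radius: int) -> float:
    """Sum the feature weights in a square window centred on the gaze point."""
    gx, gy = gaze
    rows = len(layer.feature_map)
    cols = len(layer.feature_map[0])
    total = 0.0
    for y in range(max(0, gy - radius), min(rows, gy + radius + 1)):
        for x in range(max(0, gx - radius), min(cols, gx + radius + 1)):
            total += layer.feature_map[y][x]
    return total


def arrange_layers(layers: List[Layer], gaze: Tuple[int, int],
                   radius: int = 1,
                   background_blur: float = 4.0) -> List[Tuple[str, float]]:
    """Return (name, blur) pairs ordered foreground first.

    The layer with the highest weight around the gaze gets no blur;
    every other layer gets the same assumed background blur.
    """
    ranked = sorted(layers, key=lambda l: gaze_weight(l, gaze, radius),
                    reverse=True)
    return [(l.name, 0.0 if i == 0 else background_blur)
            for i, l in enumerate(ranked)]


# Two toy 4x4 layers: robot_a has features bottom-right, robot_b top-left.
robot_a = Layer("robot_a", [[0, 0, 0, 0],
                            [0, 0, 0, 0],
                            [0, 0, 0.9, 0.8],
                            [0, 0, 0.7, 0.9]])
robot_b = Layer("robot_b", [[0.9, 0.8, 0, 0],
                            [0.7, 0.9, 0, 0],
                            [0, 0, 0, 0],
                            [0, 0, 0, 0]])

looking_top_left = arrange_layers([robot_a, robot_b], gaze=(0, 0))
looking_bottom_right = arrange_layers([robot_a, robot_b], gaze=(3, 3))
```

With the gaze at the top-left corner, `robot_b` is ranked first and left sharp while `robot_a` is blurred; moving the gaze to the bottom-right corner swaps the two. A real system would presumably vary blur and opacity smoothly rather than switch between two fixed values.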

Keyword(s):

- Simultaneous Multi Presence

- Perceptual Awareness Blending

- Eye Gaze

- Peripheral Vision

- Depth of field

Acknowledgements:

This research is supported by the JST-ACCEL Embodied Media Project.